HOW TO MAKE (ALMOST) ANYTHING MIT MEDIA LAB


FINAL PROJECT - week1: AN INTERACTIVE ENVIRONMENT FOR 2+ PERFORMERS AND 1+ OBSERVER

Over the course of thinking about it this week, my idea has already shifted slightly. I will outline the first version and then elaborate on how the second could complement or replace it. The first idea is to create an interactive system linking sound, visuals and movement. The movement is initiated by a dancer and tracked either by a webcam or by a device the dancer is wearing. This motion information is translated into a visual display resembling diagrammatic notation in music composition. The notation (the 'energy diagram') is projected in the space and then interpreted by a live musician.


interactive system flow (clockwise)

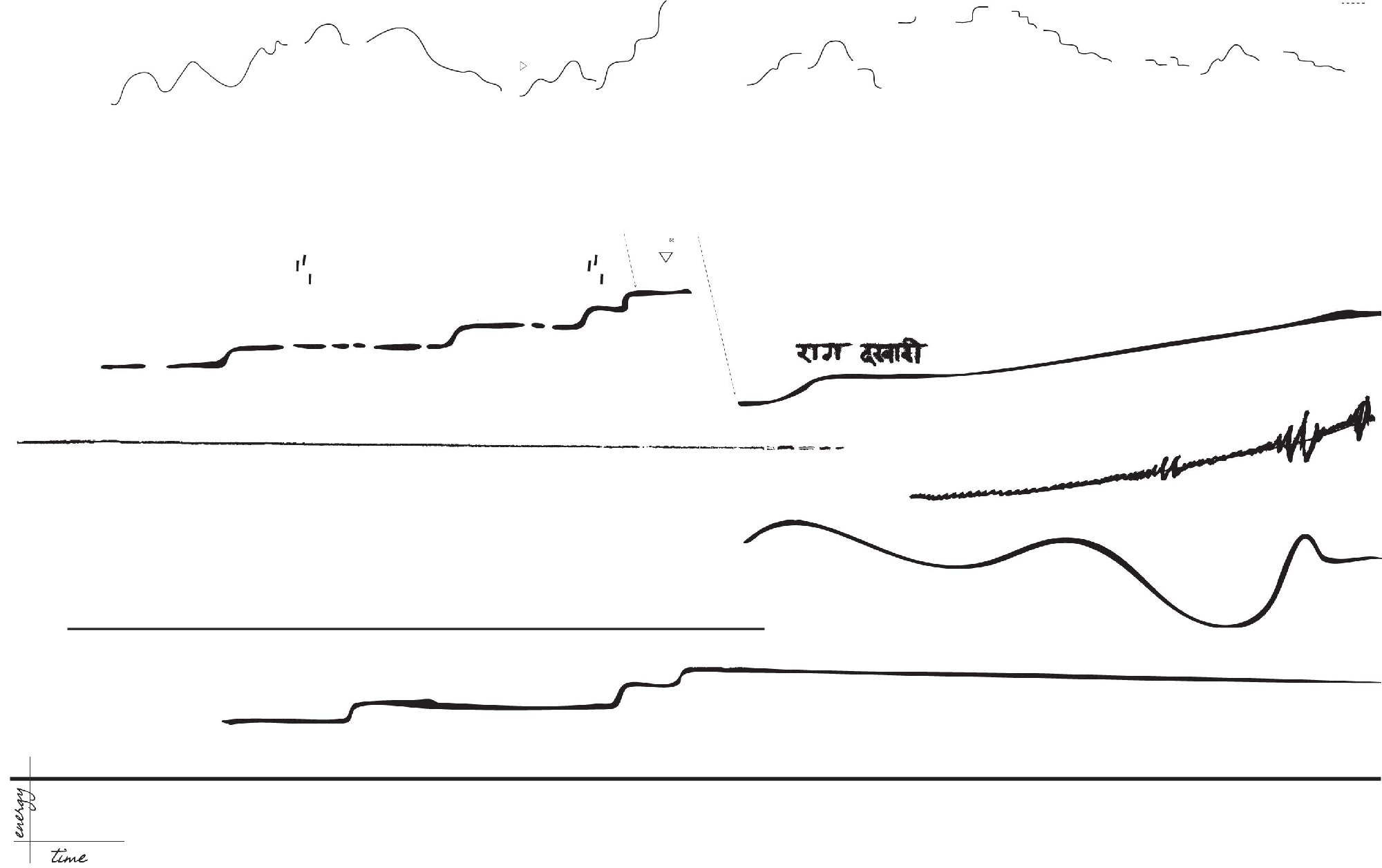

The Energy Diagram

The concept of diagrammatic notation comes from a long tradition of visual notation in music (Cage, Cardew). The x-axis is time and the y-axis is energy. The time axis is relative: there is no defined duration. The various lines are to be followed by different instruments. If fewer instruments than lines are present, the musician(s) can choose which line to start on, but must finish one before jumping to the next. Rises and falls in energy can be interpreted through any combination of {tempo, timbre, volume, pitch, dynamics, texture, structure, extended techniques, other}. How the movement information would actually be translated into these "energies" and projected onto a screen still seems magical at this point, but hopefully the class will teach me to explore some options.
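One possible starting point for turning webcam footage into an "energy" line, offered only as a sketch: measure how much the image changes from one frame to the next and smooth the result. Everything here is an assumption rather than a settled design. The frames are taken to be small grayscale grids of 0-255 values, and the names `motion_energy`, `energy_curve` and the `smoothing` parameter are hypothetical.

```python
from typing import List, Sequence

# Assumed input: a grayscale frame as rows of 0-255 pixel values.
Frame = Sequence[Sequence[int]]


def motion_energy(prev: Frame, curr: Frame) -> float:
    """Mean absolute pixel difference between two frames, scaled to 0..1."""
    total = 0
    count = 0
    for row_prev, row_curr in zip(prev, curr):
        for p, c in zip(row_prev, row_curr):
            total += abs(c - p)
            count += 1
    return total / (255 * count) if count else 0.0


def energy_curve(frames: Sequence[Frame], smoothing: float = 0.5) -> List[float]:
    """One energy value per consecutive frame pair, exponentially smoothed.

    A higher `smoothing` keeps more of the previous level, so the drawn
    line rises and falls more gently.
    """
    curve: List[float] = []
    level = 0.0
    for prev, curr in zip(frames, frames[1:]):
        raw = motion_energy(prev, curr)
        level = smoothing * level + (1.0 - smoothing) * raw
        curve.append(level)
    return curve
```

The resulting list could be plotted against a relative time axis to give one line of the diagram. Tracking several body regions separately would be one way to produce several lines.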


The Laban Cube

One way I have considered for feeding sonic information from the live musician back into instructions for the dancer was inspired by a movement taught by dancer and choreographer Jill Johnson. In the Laban tradition of notating dance, a dancer can envision dancing in a space with 26 points around her, as pictured below. Such a cube could be built with 26 small speakers that would generate either clicks or noises (so as not to interfere with the pitched content coming from the musician). These speakers would ideally protrude laterally on adjustable "arms" from a see-through cube.
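For planning where the speakers would sit, the 26 points can be listed programmatically. This is a sketch that assumes the points are the 8 corners, 12 edge midpoints and 6 face centres of a cube centred on the dancer, in other words a 3 x 3 x 3 grid without its centre. The function name `laban_points` and the -1/0/1 coordinates are hypothetical conveniences.

```python
from itertools import product
from typing import List, Tuple

Point = Tuple[int, int, int]


def laban_points() -> List[Point]:
    """All points of a 3x3x3 grid around the dancer, excluding the centre.

    Each coordinate is -1, 0 or 1 (for example left/centre/right), so the
    grid has 27 positions; dropping (0, 0, 0), where the dancer stands,
    leaves 26.
    """
    return [p for p in product((-1, 0, 1), repeat=3) if p != (0, 0, 0)]


points = laban_points()
corners = [p for p in points if all(c != 0 for c in p)]
print(len(points), len(corners))
```

Multiplying each coordinate by half the cube's side length would give physical speaker positions.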

The interaction then works like this: pitch content is taken from the live musician and used to trigger certain speakers, which generate clicks or noises. The assignment of speakers to pitch ranges could be changed during the live performance. Similarly, the source of stimulation could change from pitch to the frequency of pitch shifts, to overtones, to volume, or to other parameters to be explored... probably based on work that has already been done in this area.
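The pitch-to-speaker assignment described above could be sketched as follows. This is only an illustration under stated assumptions: the detected pitch arrives as a frequency in Hz, the playable range (55 Hz to 1760 Hz here) is split into 26 equal bands on a logarithmic scale, and the function name `speaker_for_pitch` and all of its parameters are hypothetical.

```python
import math


def speaker_for_pitch(freq_hz: float,
                      low_hz: float = 55.0,
                      high_hz: float = 1760.0,
                      n_speakers: int = 26) -> int:
    """Return the index (0..n_speakers-1) of the speaker for a pitch.

    The range low_hz..high_hz is divided into equal bands in log-frequency,
    so each band spans the same musical interval. Pitches outside the range
    are clamped to the first or last speaker.
    """
    if freq_hz <= low_hz:
        return 0
    if freq_hz >= high_hz:
        return n_speakers - 1
    position = math.log2(freq_hz / low_hz) / math.log2(high_hz / low_hz)
    return min(n_speakers - 1, int(position * n_speakers))


print(speaker_for_pitch(440.0))
```

Changing the mapping mid-performance would then amount to calling the function with different range values, or passing the returned index through a reorderable list of speaker positions.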


Idea 2 - BREATH

After thinking about the real source of communication among performers, one thing that is incredibly important, and often neither audible nor visible, is the breath. For a dancer, a given movement must often coincide with a breath in or out. What would be interesting to observe is 1) how this information can be relayed to an observer and 2) to what extent the breathing patterns of a dancer and a musician (not playing a wind instrument) might be in sync. The aim, then, would be to detect the breathing in the performance via contact mics or specially designed wearable bands, amplify it in the space, process the sound into a composition live (via something like Ableton) and then, if time in this project allows, connect it with the whole interactive triangle above. It might be, however, that the display of energy diagrams would be less a composition and more an aesthetic display of "energy" passed between performers via detection and amplification of breath...
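One way to put a number on how closely two performers breathe together, offered purely as a sketch: treat each breath sensor as a series of samples and look for the time shift at which the two series line up best (a simple cross-correlation). The assumptions are that both signals are sampled at the same rate and are the same kind of measurement (for example chest expansion); the function name `best_lag` is hypothetical.

```python
import math
from typing import List, Sequence


def best_lag(a: Sequence[float], b: Sequence[float], max_lag: int) -> int:
    """Shift (in samples) at which signal b best matches signal a.

    A positive result means b trails a by that many samples; 0 means the
    two breathing patterns line up without any shift.
    """
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    a0 = [x - mean_a for x in a]  # remove the resting level of each signal
    b0 = [y - mean_b for y in b]

    def score(lag: int) -> float:
        # Average product over the samples where both signals overlap.
        products = [a0[i] * b0[i + lag]
                    for i in range(len(a0)) if 0 <= i + lag < len(b0)]
        return sum(products) / len(products) if products else float("-inf")

    return max(range(-max_lag, max_lag + 1), key=score)


# Synthetic example: the musician breathes like the dancer, 3 samples later.
dancer: List[float] = [math.sin(2 * math.pi * i / 20) for i in range(200)]
musician: List[float] = [math.sin(2 * math.pi * (i - 3) / 20) for i in range(200)]
print(best_lag(dancer, musician, max_lag=5))
```

Running this over a sliding window during a performance would show whether the lag between performers shrinks or grows over time, which could itself be drawn as a line in the energy diagram.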


Copyright 2013 by Anya Yermakova