My goal for this week was to create a cheap, wide-FOV augmented reality display. I also wanted it to look cool, like the Solid Eye from MGS4.

My approach was to make a Google Cardboard clone that covers only one eye. That way your brain can merge the virtual image from one eye with the real image from the other. I tried it out by modifying a Google Cardboard, and the results weren’t terrible.

The advantage is that you get the wide FOV of VR together with the pass-through ability of AR.

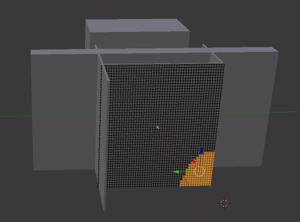

I started by cutting out a grid for the nose mount, but realized that using constructive solid geometry would be better.

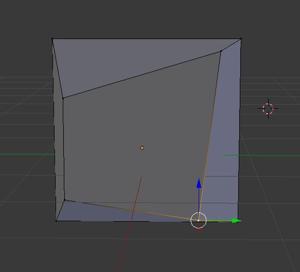

I implemented the cool front face by playing with the vertices of a cube in Blender. Dope.

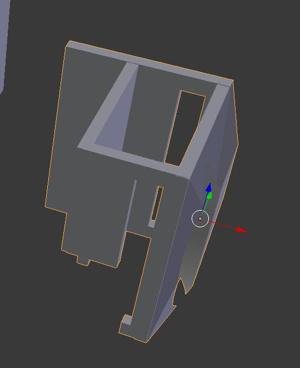

First final design, built to hold a phone.

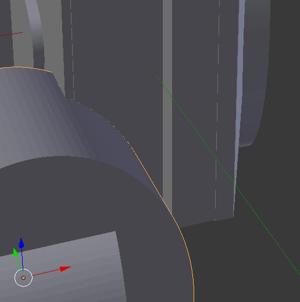

The edge is not good enough for 3D printing: the rise is too steep. I redesigned it using a cylindrical cutout.

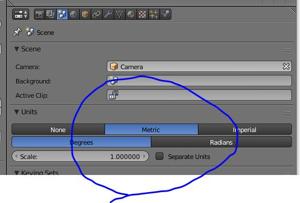

I made my first mistake by sending it to Tom with the wrong units. I needed to go back to Blender and set the units to metric (before, there were no units). That’s when I found out that I wouldn’t have enough printing time to build my design. It would have to fit into 2” x 2” x 2”.
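To get a feel for how much a model has to shrink to fit the 2” cube, here is a minimal Python sketch. The bounding-box numbers are made up for illustration (they are not the real dimensions of my design), and `fit_scale` is a hypothetical helper, not anything from Blender.

```python
INCH_MM = 25.4  # millimetres per inch (exact by definition)

def fit_scale(dims_mm, limit_in=2.0):
    """Uniform scale factor so the largest dimension fits inside the cube.

    Returns 1.0 if the model already fits (never scales up).
    """
    limit_mm = limit_in * INCH_MM
    return min(1.0, limit_mm / max(dims_mm))

# Hypothetical bounding box in millimetres, for illustration only.
example_dims = (160.0, 90.0, 60.0)
scale = fit_scale(example_dims)
scaled = tuple(d * scale for d in example_dims)
print(f"scale factor: {scale:.4f}, scaled size (mm): {scaled}")
```

A phone-sized holder would need to shrink to roughly a third of its size, which is why I gave up on printing it.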

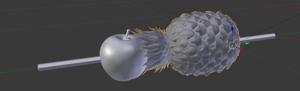

Back to the drawing board. I decided to make a pen-pineapple-apple-pen.

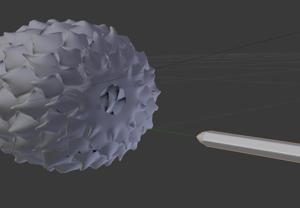

I had to make sure the model was water-tight. Notice that at the bottom of the pineapple there is a big gaping hole. This was fixed by unioning with a sphere.
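One simple way to spot a hole like this is to check that every edge of the mesh is shared by exactly two faces. The sketch below does that in plain Python on a toy mesh. It is not what Blender does internally, and it only checks this one necessary condition (it will not catch self-intersections); `open_edges` is a name I made up for the example.

```python
from collections import Counter

def open_edges(faces):
    """Return the edges that are not shared by exactly two faces.

    `faces` is a list of tuples of vertex indices. An empty result means
    the mesh passes this basic closed-surface check.
    """
    counts = Counter()
    for face in faces:
        for i in range(len(face)):
            a, b = face[i], face[(i + 1) % len(face)]
            counts[(min(a, b), max(a, b))] += 1  # ignore edge direction
    return sorted(edge for edge, n in counts.items() if n != 2)

# Toy example: a tetrahedron is closed; removing one face opens a hole.
tetra = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
holed = tetra[:-1]

print(open_edges(tetra))  # no open edges
print(open_edges(holed))  # the three edges around the missing face
```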

The finished product. The 3D print was done on a printer that prints two different materials, one of which is water soluble. The support structures were made from this material and removed by submerging the piece in the sink and rubbing it. Interestingly, the support material covered the entire piece, likely because of the small details all over the pineapple.

For my 3D scanning task, I was a little upset that the 3D scanning procedure was as simple as pressing a button and swinging the scanner around the object. Also, I’m not creative enough to come up with an interesting object to scan.

I wanted to explore 3D scanning a non-object. Here I 3D scanned a sound-field by moving a 3D-tracked microphone and measuring the volume.

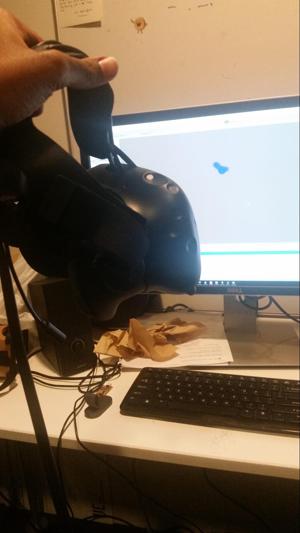

I used a Vive headset (easily tracked in 6DOF) and the microphone on the headset to scan out the sound-field. The data was recorded using a Unity app I wrote, then visualized in MATLAB.
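The basic idea of turning tracked samples into a sound-field map can be sketched in a few lines of Python. This is not my Unity or MATLAB code: the sample format `(x, y, z, volume)`, the 5 cm cell size, and the function name `bin_samples` are all assumptions for illustration. Each sample is dropped into a grid cell, and each cell keeps the average volume plus a sample count.

```python
import math
from collections import defaultdict

def bin_samples(samples, cell=0.05):
    """Group (x, y, z, volume) samples into cubic cells of side `cell` metres.

    Returns {cell_index: (mean_volume, sample_count)}.
    """
    acc = defaultdict(lambda: [0.0, 0])  # running sum and count per cell
    for x, y, z, vol in samples:
        key = (math.floor(x / cell), math.floor(y / cell), math.floor(z / cell))
        acc[key][0] += vol
        acc[key][1] += 1
    return {key: (total / n, n) for key, (total, n) in acc.items()}

# Made-up samples, for illustration only.
samples = [
    (0.01, 0.01, 0.01, 0.2),
    (0.02, 0.03, 0.04, 0.4),
    (0.11, 0.00, 0.00, 1.0),
]
grid = bin_samples(samples)
for key, (mean_vol, count) in sorted(grid.items()):
    print(key, round(mean_vol, 3), count)
```

The per-cell count is handy because it shows which parts of the volume were actually covered while waving the headset around.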

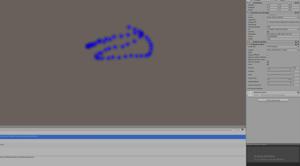

Screenshot of the Unity app. Blue indicates quiet sound and red indicates louder sound. The most tedious part was moving the headset around evenly enough to cover the sound-field.

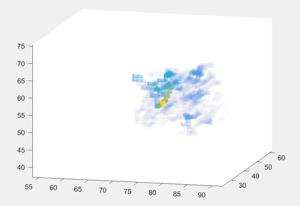

Final sound-field image. You can see there are areas of high intensity.

The sound-field I produced was a 15 kHz tone broadcast from two speakers placed right next to each other. The result should have been an interference pattern of alternating peaks and troughs. I guess you can sort of see that, but it’s difficult to be sure. I should measure just one speaker and verify the method.
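For comparison, here is a rough Python sketch of what an idealized version of this setup would predict. It assumes two identical in-phase point sources in free space (no room reflections, no speaker directivity) and a speed of sound of 343 m/s. None of that was measured, and the speaker positions in the example are invented.

```python
import cmath
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for room-temperature air
FREQ = 15_000.0         # Hz, the tone used in the scan

def wavelength(freq=FREQ, c=SPEED_OF_SOUND):
    """Wavelength in metres."""
    return c / freq

def pressure_amplitude(point, sources, freq=FREQ, c=SPEED_OF_SOUND):
    """Relative amplitude at `point` from equal in-phase point sources.

    Each source contributes exp(i*k*r) / r, where r is its distance to
    the point; the magnitude of the sum shows the interference.
    """
    k = 2 * math.pi * freq / c  # wavenumber
    total = 0j
    for src in sources:
        r = math.dist(point, src)
        total += cmath.exp(1j * k * r) / r
    return abs(total)

# Invented layout: speakers 10 cm apart, listener row 1 m away.
speakers = [(-0.05, 0.0), (0.05, 0.0)]
print(f"wavelength: {wavelength() * 100:.2f} cm")
for x_cm in range(0, 30, 3):
    amp = pressure_amplitude((x_cm / 100, 1.0), speakers)
    print(f"x = {x_cm:2d} cm  amplitude = {amp:.3f}")
```

Under these assumptions the wavelength is only about 2.3 cm, so the peaks and troughs can sit quite close together depending on the geometry, and a hand-swept scan may simply be too coarse or too uneven to resolve them.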

Informally, sticking the device closer to the sound source does make the value go up.