function daub(x)

# Takes the daubechies wavelet transform of a signal

c = [(1 + sqrt(3)) / (4 * sqrt(2)),

(3 + sqrt(3)) / (4 * sqrt(2)),

(3 - sqrt(3)) / (4 * sqrt(2)),

(1 - sqrt(3)) / (4 * sqrt(2))]

N = length(x)

# make sure we got a power of 2 vector length

assert(log2(N) == int(log2(N)))

X = zeros(N)

tmp1 = zeros(N)

tmp1[:] = x[:]

tmp2 = zeros(N)

M = N / 2

while true

for i in 1:(M-1)

#println(i)

tmp2[i] = c[1]*tmp1[2i-1] + c[2]*tmp1[2i] + c[3]*tmp1[2i+1] + c[4]*tmp1[2i+2]

X[M+i] = c[4]*tmp1[2i-1] - c[3]*tmp1[2i] + c[2]*tmp1[2i+1] - c[1]*tmp1[2i+2]

end

# handle the wraparound case

tmp2[M] = c[1]*tmp1[2M-1] + c[2]*tmp1[2M] + c[3]*tmp1[1] + c[4]*tmp1[2]

X[2M] = c[4]*tmp1[2M-1] - c[3]*tmp1[2M] + c[2]*tmp1[1] - c[1]*tmp1[2]

M = M / 2

if M <= 1

X[1:2] = tmp1[1:2]

break

end

for i in 1:(M-1)

tmp1[i] = c[1]*tmp2[2i-1] + c[2]*tmp2[2i] + c[3]*tmp2[2i+1] + c[4]*tmp2[2i+2]

X[M+i] = c[4]*tmp2[2i-1] - c[3]*tmp2[2i] + c[2]*tmp2[2i+1] - c[1]*tmp2[2i+2]

end

# handle the wraparound case

tmp1[M] = c[1]*tmp2[2M-1] + c[2]*tmp2[2M] + c[3]*tmp2[1] + c[4]*tmp2[2]

X[2M] = c[4]*tmp2[2M-1] - c[3]*tmp2[2M] + c[2]*tmp2[1] - c[1]*tmp2[2]

M = M / 2

if M <= 1

X[1:2] = tmp2[1:2]

break

end

end

return X

end

function idaub(X)

# Takes the inverse daubechies wavelet transform of a signal

c = [(1 + sqrt(3)) / (4 * sqrt(2)),

(3 + sqrt(3)) / (4 * sqrt(2)),

(3 - sqrt(3)) / (4 * sqrt(2)),

(1 - sqrt(3)) / (4 * sqrt(2))]

N = length(X)

assert(log2(N) == int(log2(N)))

x = zeros(N)

tmp1 = zeros(N)

tmp1[:] = X[:]

tmp2 = zeros(N)

M = 2

# when we access the upper half of the wavelet domain vector we pull from the original

# given data. When we pull from the lower half we use what we calculated in the last

# pass.

while M < N

tmp2[1] = c[1]*tmp1[1] + c[4]*X[M+1] + c[3]*tmp1[M] + c[2]*X[2M]

tmp2[2] = c[2]*tmp1[1] - c[3]*X[M+1] + c[4]*tmp1[M] - c[1]*X[2M]

for i in 2:(M-1)

#println(i)

tmp2[2i-1] = c[3]*tmp1[i] + c[2]*X[M+i] + c[1]*tmp1[i+1] + c[4]*X[M+i+1]

tmp2[2i] = c[4]*tmp1[i] - c[1]*X[M+i] + c[2]*tmp1[i+1] - c[3]*X[M+i+1]

end

M = 2M

if M >= N

return tmp2

end

tmp1[1] = c[1]*tmp2[1] + c[4]*X[M+1] + c[3]*tmp2[M] + c[2]*X[2M]

tmp1[2] = c[2]*tmp2[1] - c[3]*X[M+1] + c[4]*tmp2[M] - c[1]*X[2M]

for i in 2:(M-1)

#println(i)

tmp1[2i-1] = c[3]*tmp2[i] + c[2]*X[M+i] + c[1]*tmp2[i+1] + c[4]*X[M+i+1]

tmp1[2i] = c[4]*tmp2[i] - c[1]*X[M+i] + c[2]*tmp2[i+1] - c[3]*X[M+i+1]

end

M = 2M

if M >= N

return tmp1

end

end

end

# 12.2

using PyPlot

X = zeros(2^12)

X[1] = 1

X[30] = 1

x = idaub(X)

plot(x)

# 12.3-C

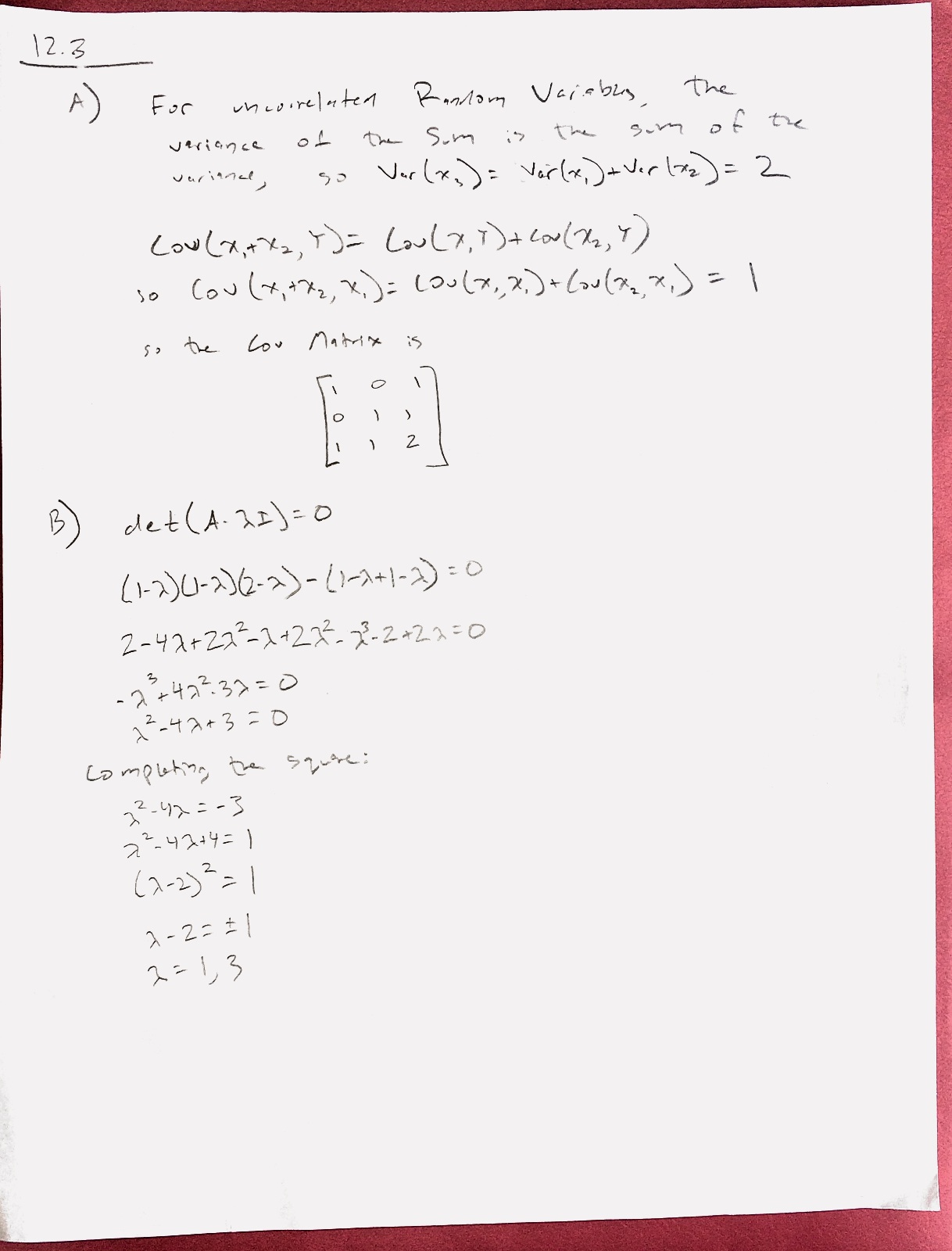

x1 = randn(1, 100000)

x2 = randn(1, 100000)

X = vcat(x1, x2, x1+x2)

C = cov(X')

ev = eigfact(C)

println("\n\nCovariance:")

show(C)

println("\n\nEigenvalues:")

show(ev.values)

println("\n\nEigenvectors:")

show(ev.vectors)

# Use the transpose of the eigenvectors to map into the Y space

Y = ev.vectors[:,2:3]' * X

# as a check, see how much error there is when we project back in the original space

println("\n\nProjection Error:")

X2 = ev.vectors[:, 2:3] * Y

show(sum((X2-X) .* (X2-X), 2) ./ size(X2, 2))

Cy = cov(Y')

println("\n\nCovariance of Y:")

show(Cy)

# 12.4 A

S = rand(2, 1000)

scatter(S[1,:], S[2,:])

# 12.4 B

A = [1 2; 3 1]

X = A * S

scatter(X[1,:], X[2,:])

# 12.4 C

X2 = X .- mean(X, 2)

C2 = cov(X2')

ev = eigfact(C2)

Y = (ev.vectors' * X2) ./ sqrt(ev.values)

scatter(Y[1,:], Y[2,:])

cov(Y')

# 12.4 - D

# this is the punchline - TODO.