Machine Building Week

This week our group built a robot that follows you using computer vision and shows a phone scrolling social media content to “rot” your brain. It is modeled after the Daleks from Doctor Who.

My role was to help design the drive train mechanism.

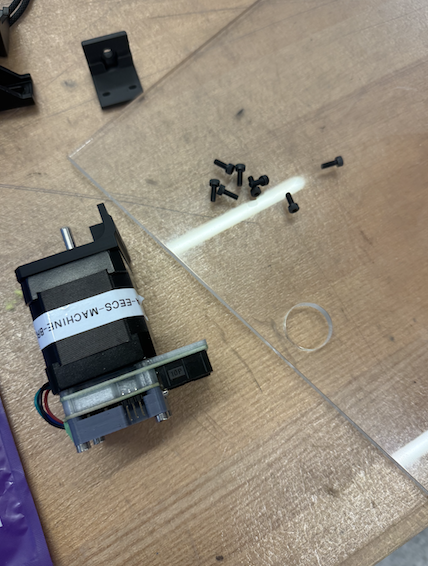

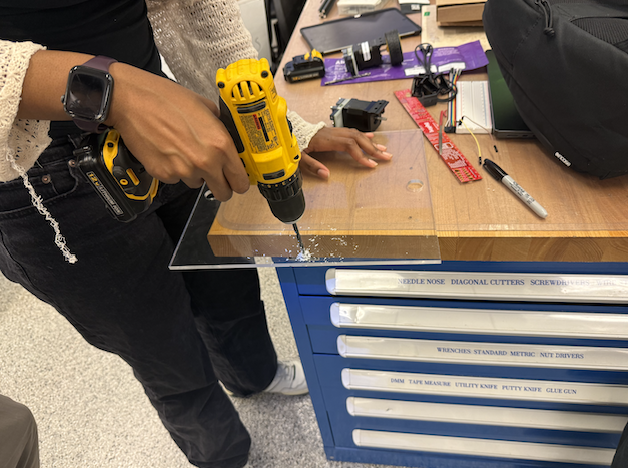

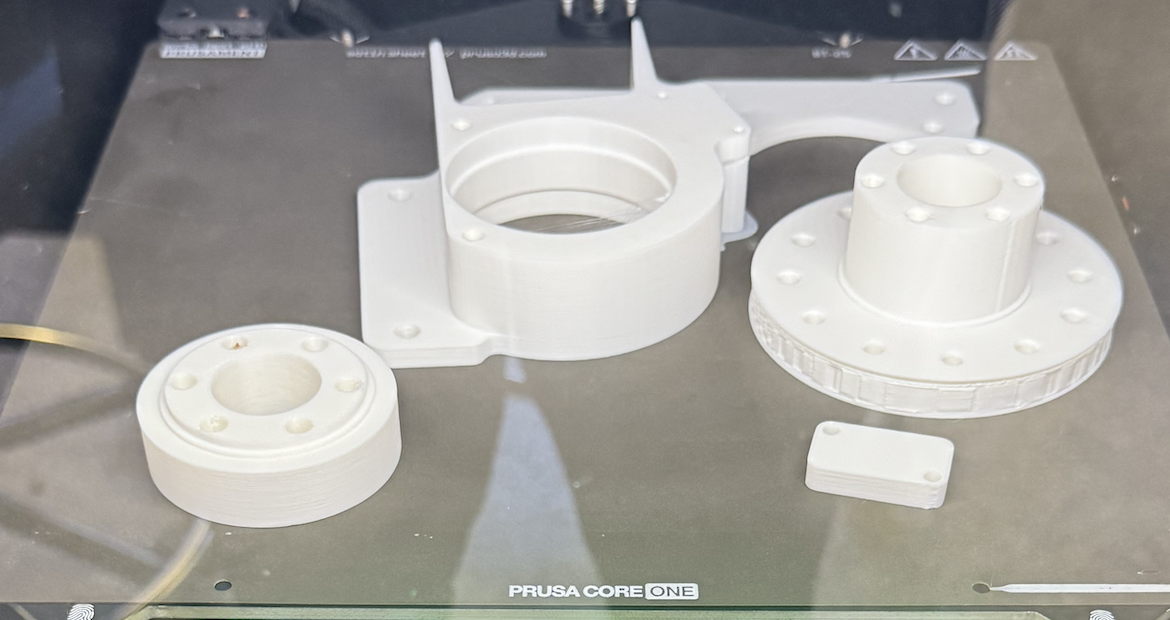

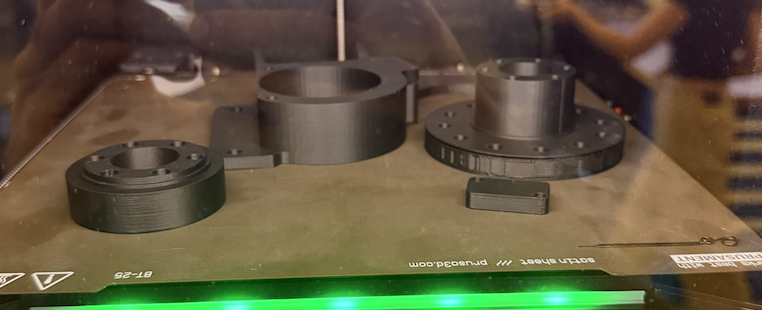

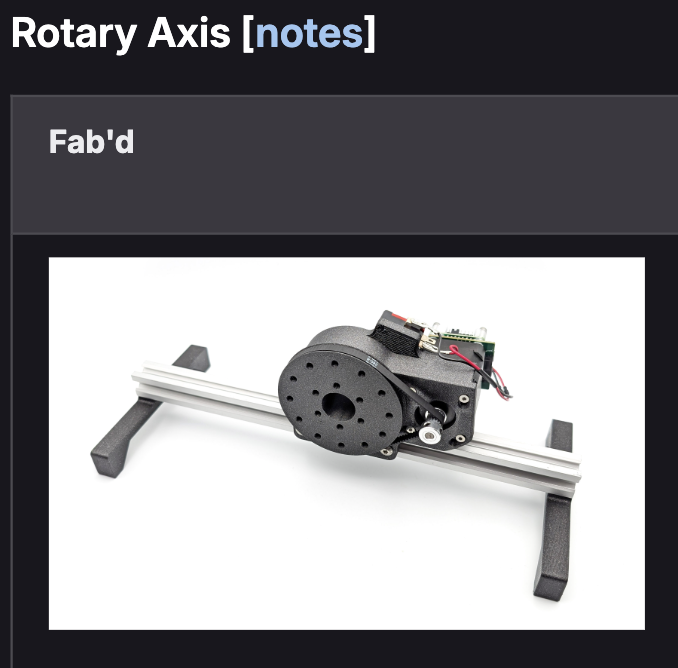

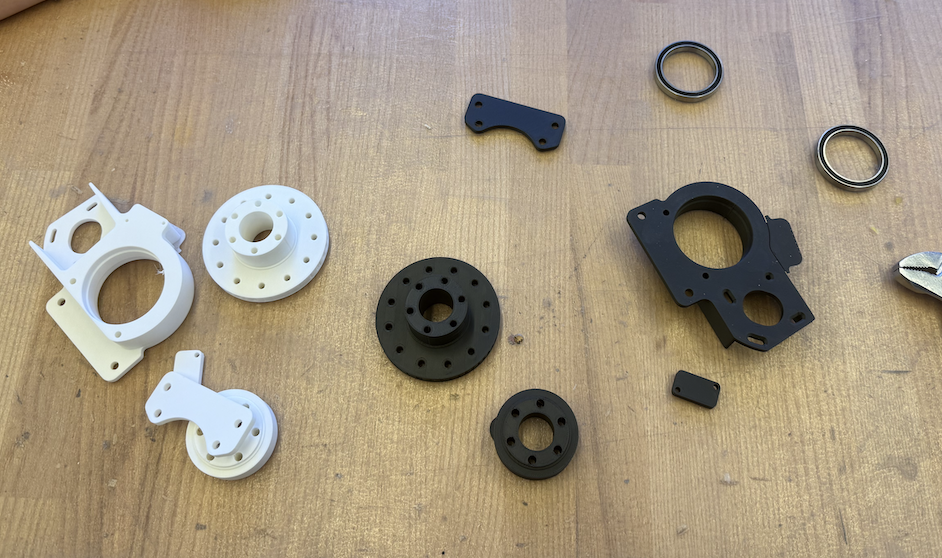

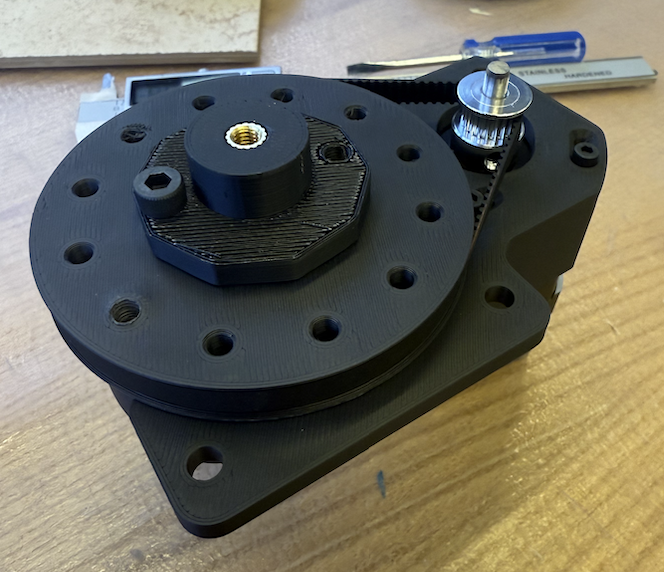

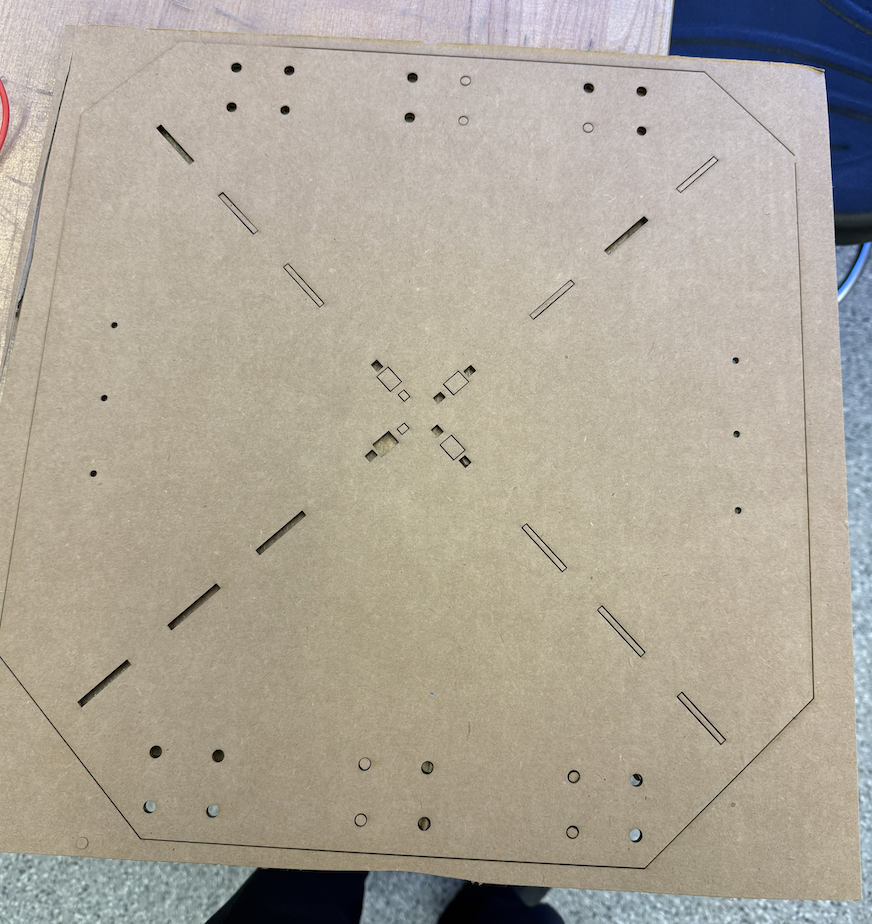

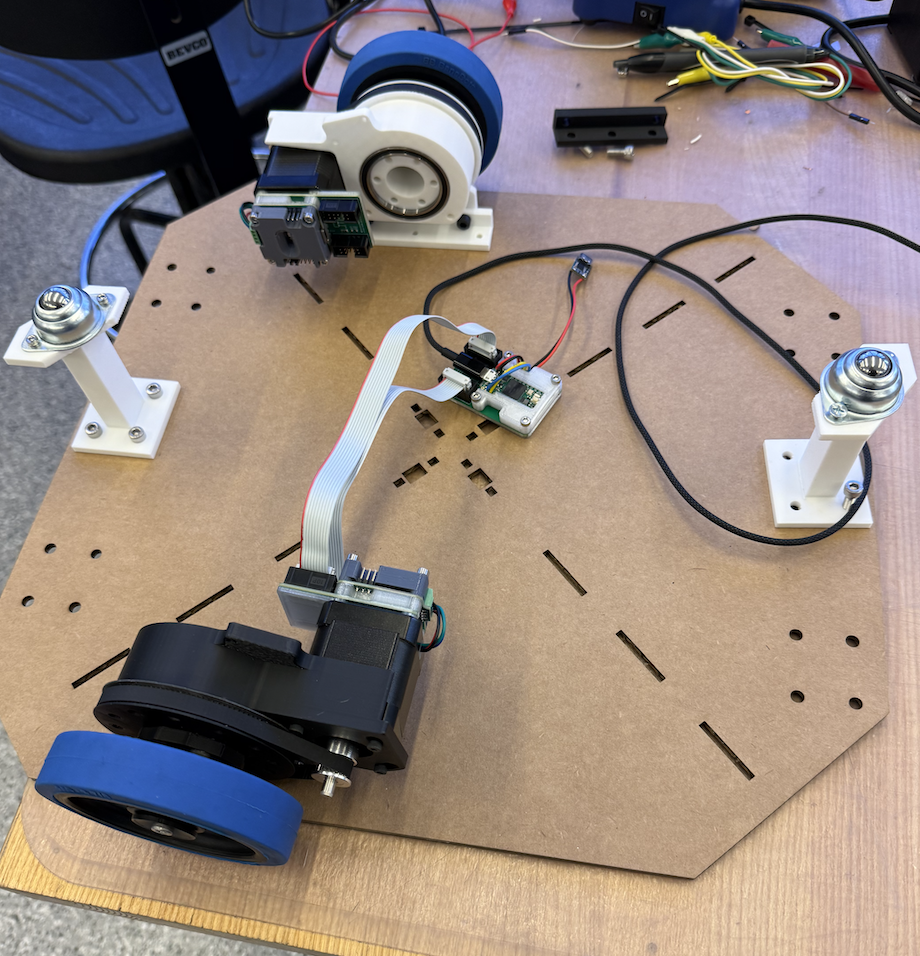

Eghosa and I started by trying to figure out how to mount the stepper motors provided in the kit to the acrylic board that we had decided would be the base of the robot. That didn’t work well, and Quentin told us we would also need more torque for the wheels, so Claire started two sets of prints of this part, for which we had files provided. It was originally designed to mount on an aluminum rod, but we were able to work around that later.

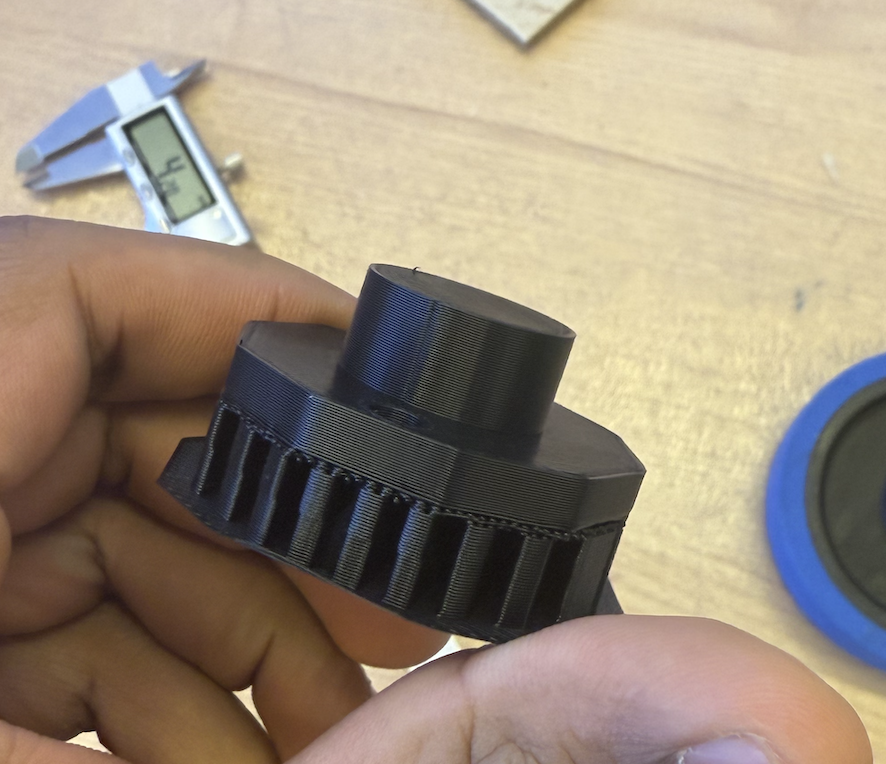

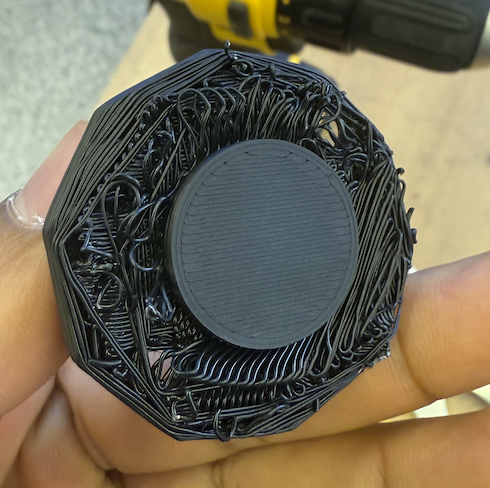

The next day I arrived to find both sets successfully printed.

I then got the ball bearings and belts from the machine kit and started to assemble this.

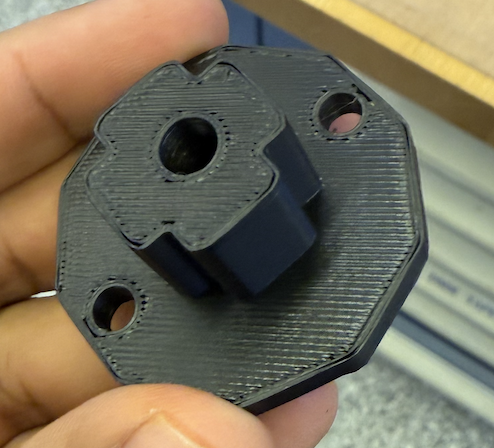

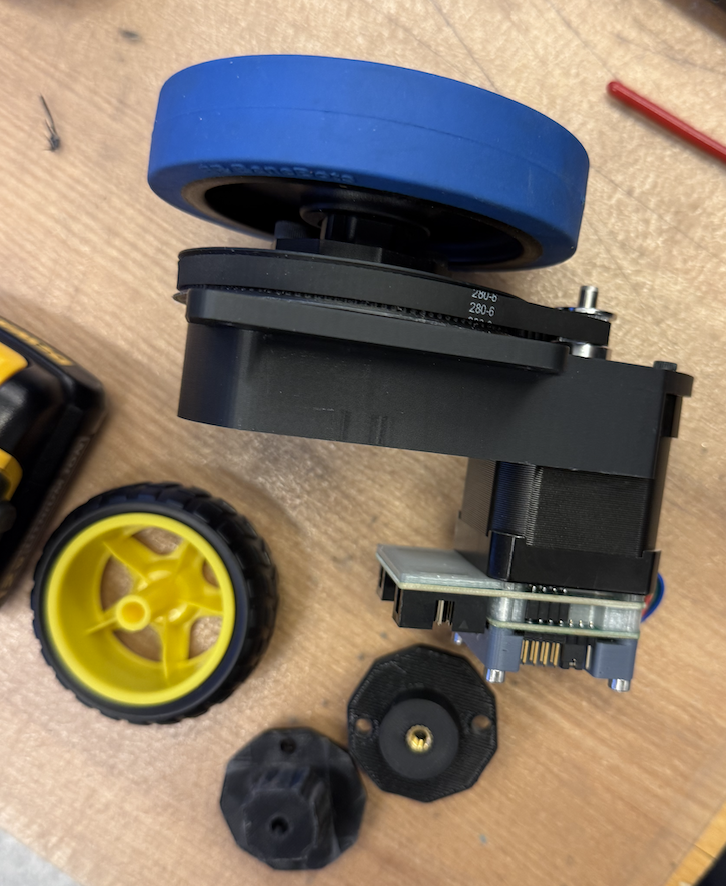

After I finished, I decided to use these large wheels, since their diameter was large enough to be wider than the rotary part. The problem was that there was no straightforward way to attach them.

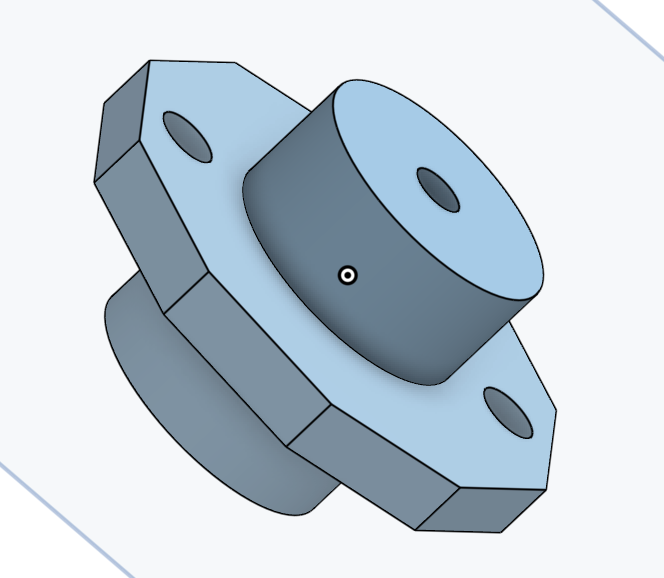

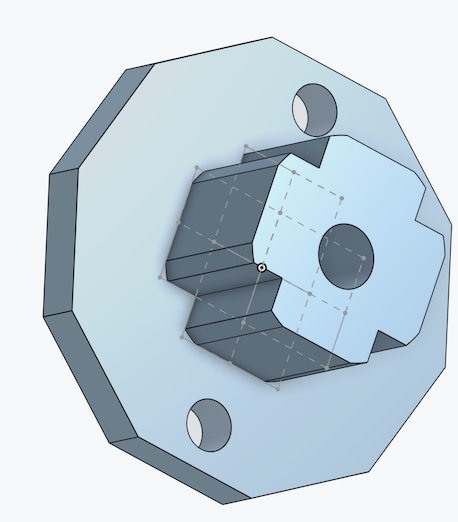

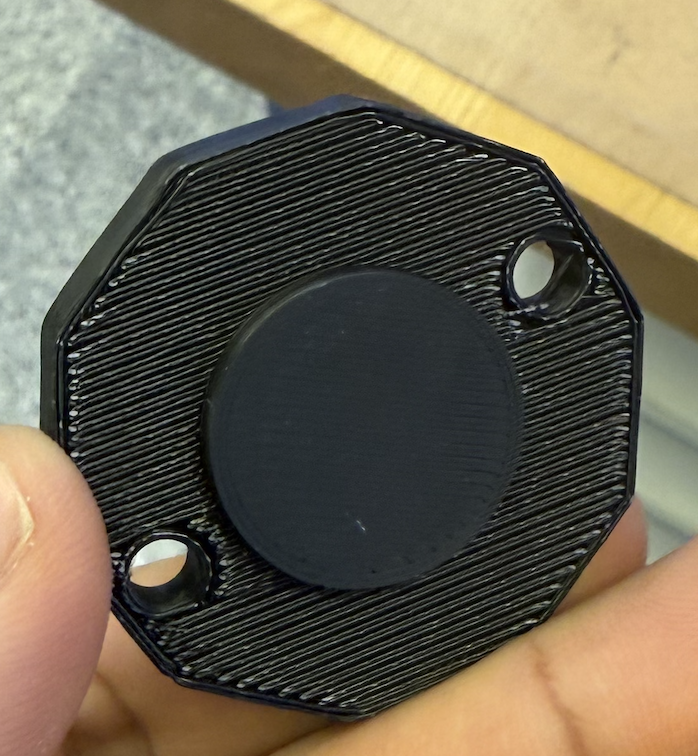

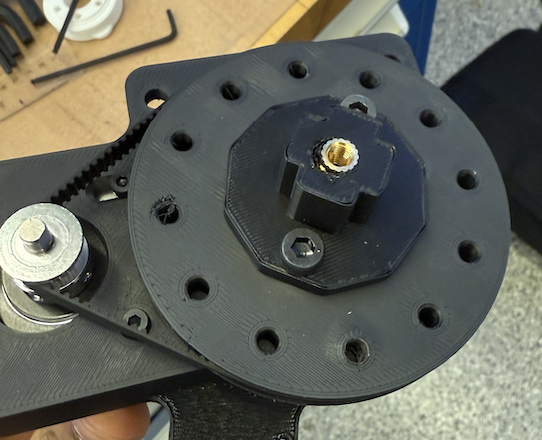

Anthony showed me how to add a heat set insert using the soldering iron. This is for the bolt that will clamp the wheel to this adapter. Anthony also showed me how to manually thread the plastic - that took a good amount of elbow grease. However, there was a flaw in this design: the wheel’s adapter didn’t fit, so I decided to redesign the adapter with a square-ish face that can lock into the square side of the wheel.

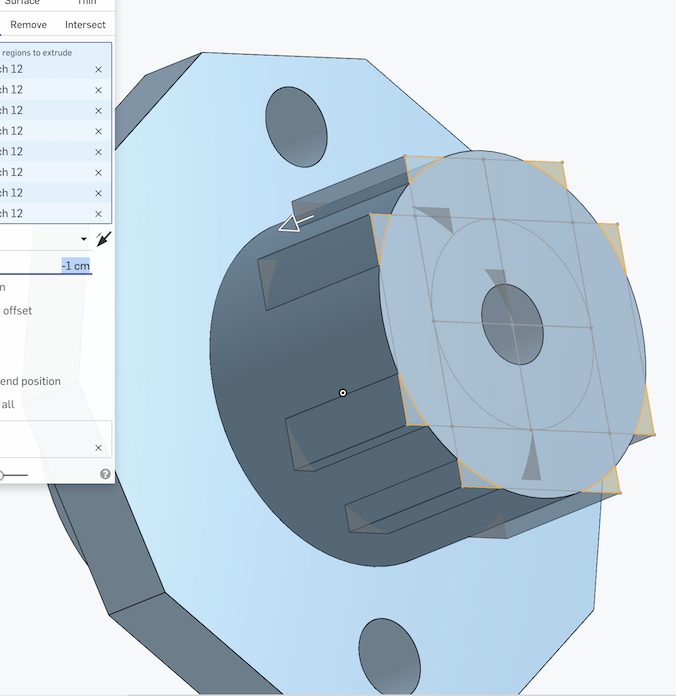

Unfortunately, I made a mistake and the dimensions were too large to fit inside the wheel. In addition, I forgot to add supports.

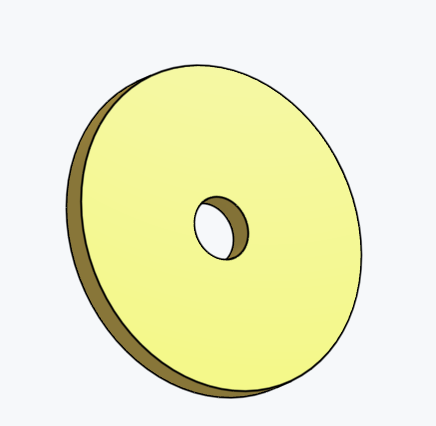

I also designed a simple large washer that will press the other side of the wheel into the adapter via a bolt.

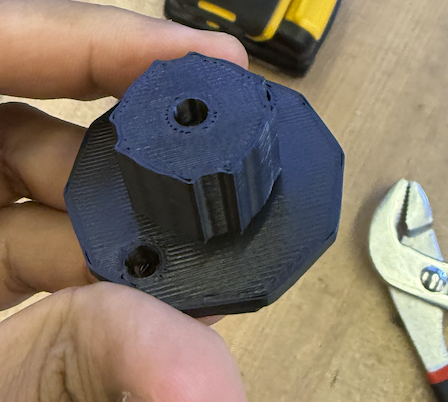

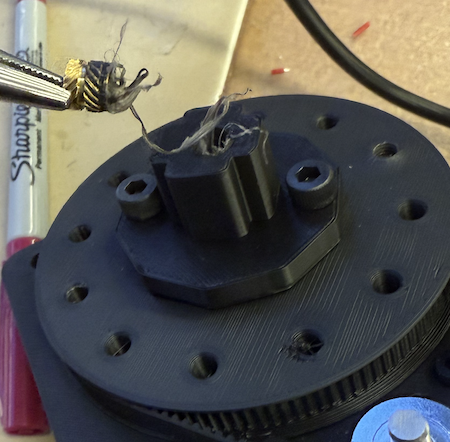

This was the fixed version (third time is the charm). I messed up the heat set insert the first time.

There was still enough plastic to try again, so I tried to make it as centered as possible.

This was the complete system! Claire designed a bracket for the base and we were surprised it was quite sturdy on cardboard. I helped laser cut the first version of the base.

Then with the exterior design team we saw how well the systems fit together.

Initially, Eghosa and Quentin wrote some code to compute the velocities and how much each stepper should move based on the goal. I then converted this to code that the ESP32 can run locally, since Quentin had helped us get the stepper motor control working on the ESP.

https://gitlab.cba.mit.edu/classes/863.25/EECS/eecs-machine/-/commit/21c349dabc91bd535705850221176cf259a850fe
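The kind of computation described above can be sketched with standard differential-drive kinematics. This is not the code from our repository. The constants (`WHEEL_DIAMETER_M`, `TRACK_WIDTH_M`, `STEPS_PER_WHEEL_REV`) and both function names are hypothetical placeholders chosen for illustration, not measurements from the robot.

```python
import math

# All constants below are illustrative assumptions, not measurements from our robot.
WHEEL_DIAMETER_M = 0.10        # hypothetical wheel diameter in meters
TRACK_WIDTH_M = 0.30           # hypothetical distance between the two drive wheels
STEPS_PER_WHEEL_REV = 200 * 4  # hypothetical: 200-step motor with a 4:1 belt reduction


def wheel_velocities(linear, angular):
    """Differential-drive kinematics: body velocity -> (left, right) wheel speeds in m/s.

    linear is the forward speed in m/s, angular is the turn rate in rad/s.
    """
    left = linear - angular * TRACK_WIDTH_M / 2
    right = linear + angular * TRACK_WIDTH_M / 2
    return left, right


def steps_for_interval(wheel_speed, dt):
    """Signed number of motor steps needed to hold wheel_speed (m/s) for dt seconds."""
    distance = wheel_speed * dt
    revolutions = distance / (math.pi * WHEEL_DIAMETER_M)
    return round(revolutions * STEPS_PER_WHEEL_REV)


if __name__ == "__main__":
    left, right = wheel_velocities(linear=0.2, angular=0.5)
    print(steps_for_interval(left, 0.1), steps_for_interval(right, 0.1))
```

With these assumed numbers, a pure rotation command (`linear=0`) gives the two wheels equal and opposite speeds, which is the rotate-in-place behavior.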

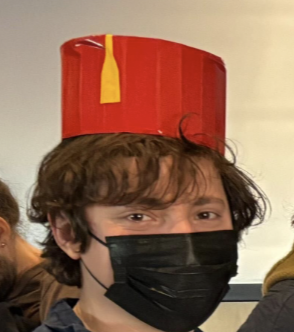

Then, on Tuesday night, we were having trouble with the YOLO model, so with ChatGPT I created OpenCV red-blob detection code that lets the robot follow a red hat that Katherine made.

```python
import cv2
import numpy as np
from collections import deque

cap = cv2.VideoCapture(0)

last_box = None
frames_missing = 0
MAX_MISSING = 10
MAX_MOVE = 25  # max px movement/frame
MAX_SIZE_CHANGE = 30  # max px size change/frame

# store past widths/heights (≈1 second at 30 FPS)
size_history = deque(maxlen=30)

while True:
    ret, frame = cap.read()
    if not ret:
        break

    hsv = cv2.cvtColor(frame, cv2.COLOR_BGR2HSV)

    # Slightly loosened HSV for red
    lower_red1 = np.array([0, 135, 135])
    upper_red1 = np.array([9, 255, 255])
    lower_red2 = np.array([171, 135, 135])
    upper_red2 = np.array([180, 255, 255])

    mask1 = cv2.inRange(hsv, lower_red1, upper_red1)
    mask2 = cv2.inRange(hsv, lower_red2, upper_red2)
    mask = cv2.bitwise_or(mask1, mask2)
    mask = cv2.medianBlur(mask, 5)

    contours, _ = cv2.findContours(mask, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

    largest_box = None
    largest_area = 0
    for cnt in contours:
        area = cv2.contourArea(cnt)
        if area > largest_area and area > 500:
            x, y, w, h = cv2.boundingRect(cnt)
            largest_area = area
            largest_box = (x, y, w, h)

    if largest_box is not None:
        frames_missing = 0
        if last_box is None:
            last_box = largest_box
            lx, ly, lw, lh = last_box
            size_history.append(max(lw, lh))
        else:
            lx, ly, lw, lh = last_box
            x, y, w, h = largest_box

            # 1) Motion smoothing
            dx = np.clip(x - lx, -MAX_MOVE, MAX_MOVE)
            dy = np.clip(y - ly, -MAX_MOVE, MAX_MOVE)

            # 2) Size smoothing
            dw = np.clip(w - lw, -MAX_SIZE_CHANGE, MAX_SIZE_CHANGE)
            dh = np.clip(h - lh, -MAX_SIZE_CHANGE, MAX_SIZE_CHANGE)
            new_w = lw + dw
            new_h = lh + dh

            # 3) Size stabilizer (bias toward max)
            size_history.append(max(new_w, new_h))
            max_recent_size = max(size_history)

            # Don't shrink too quickly: enforce min size = 70% of max_recent_size
            MIN_SIZE_RATIO = 0.7
            min_allowed = int(max_recent_size * MIN_SIZE_RATIO)
            new_w = max(new_w, min_allowed)
            new_h = max(new_h, min_allowed)

            new_x = lx + dx
            new_y = ly + dy
            last_box = (new_x, new_y, new_w, new_h)
    else:
        frames_missing += 1
        if frames_missing > MAX_MISSING:
            last_box = None

    # Draw box
    if last_box is not None:
        x, y, w, h = map(int, last_box)
        cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
        cv2.putText(frame, "Hat", (x, y - 7),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.6, (0, 255, 0), 2)

    cv2.imshow("Mask", mask)
    cv2.imshow("Tracking", frame)

    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

cap.release()
cv2.destroyAllWindows()
```

I had to work with GPT to implement some filtering and moving averaging to make the detections robust.
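The tracker above limits frame-to-frame jumps by clamping the change in position and size. A plain moving average over the last few boxes is another way to smooth detections. Here is a minimal sketch of that idea, assuming a hypothetical `BoxAverager` helper and window size that are not part of our repository.

```python
from collections import deque


class BoxAverager:
    """Average the last `window` boxes (x, y, w, h) to damp frame-to-frame jitter.

    Hypothetical helper for illustration; the window size is an arbitrary choice.
    """

    def __init__(self, window=5):
        # deque with maxlen drops the oldest box automatically once full
        self.history = deque(maxlen=window)

    def update(self, box):
        """Add a new detection and return the averaged (x, y, w, h) as floats."""
        self.history.append(box)
        n = len(self.history)
        # Average each of the four components independently.
        return tuple(sum(b[i] for b in self.history) / n for i in range(4))

    def reset(self):
        """Forget past boxes, e.g. after the target has been lost for a while."""
        self.history.clear()


if __name__ == "__main__":
    averager = BoxAverager(window=3)
    for detection in [(100, 80, 40, 40), (110, 82, 42, 40), (90, 78, 38, 40)]:
        print(averager.update(detection))
```

A larger window gives a steadier box but makes it lag further behind a fast-moving target, so the window size would need tuning against the camera frame rate.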

That is Anthony wearing the hat.

Initially, the logic for the stepper motor commands was too complex, so Claire and I simplified it.

This snippet is from: https://gitlab.cba.mit.edu/classes/863.25/EECS/eecs-machine/-/blob/main/firmware/connected_motor_control/connected_motor_control.ino?ref_type=heads

```cpp
if (v1 - v2 >= 0.15){
  s1 = -4;
  s2 = -4;
}
if (v1 - v2 <= -0.15){
  s1 = 4;
  s2 = 4;
}
if (v1 - v2 >= -0.15 && v1 - v2 <= 0.15){
  s1 = 4;
  s2 = -4;
}
if (v1 == 0.0 && v2 == 0.0){
  // s1 = -4;
  // s2 = 4;
  s1 = 0;
  s2 = 0;
}
```

This works well. It checks whether the desired velocity difference would effectively make the robot veer right or left. If so, the robot just rotates in place until the person is centered again, and then it goes forward. If both desired velocities are zero, the robot stops.
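To check this decision logic off the robot, the same rules can be mirrored in a few lines of Python. This is a sketch for experimentation only, not code that runs on the ESP32. `choose_steps`, `TURN_THRESHOLD` and `STEP` are hypothetical names for the values that appear in the firmware snippet, and which rotation direction corresponds to which sign is not assumed here.

```python
TURN_THRESHOLD = 0.15  # dead band on v1 - v2, same value as the firmware snippet
STEP = 4               # step magnitude, same value as the firmware snippet


def choose_steps(v1, v2):
    """Return (s1, s2) for desired velocities v1 and v2, mirroring the firmware rules."""
    if v1 == 0.0 and v2 == 0.0:
        return 0, 0              # no desired motion: stop
    diff = v1 - v2
    if abs(diff) <= TURN_THRESHOLD:
        return STEP, -STEP       # roughly centered: opposite signs drive forward
    if diff > 0:
        return -STEP, -STEP      # same sign on both motors: rotate in place one way
    return STEP, STEP            # same sign on both motors: rotate the other way


if __name__ == "__main__":
    for v1, v2 in [(0.0, 0.0), (0.5, 0.1), (0.1, 0.5), (0.3, 0.3)]:
        print((v1, v2), "->", choose_steps(v1, v2))
```

The dead band is checked before the turn cases because, in the firmware, the centered case runs last and so wins when the difference is exactly at the threshold.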

Files