Input Devices

Giving the machine ears. Implementing a digital I²S MEMS microphone for voice interaction, transforming the SmartPi from a passive display into an interactive voice assistant.

- Input Devices: Measure something: add a sensor to a microcontroller board that you have designed and read it.

01 · From Push to Pull: Voice Input

For my assignment, I chose to implement audio input using a digital I²S MEMS microphone as part of my final project, the SmartPi Agentic Assistant. While the initial design focused on displaying LLM-generated summaries on an LED matrix (a "push" model where information flows one way from cloud to device), adding a microphone transforms it into an interactive assistant that supports a "pull" model — users can ask questions and request specific information.

The Push Model (Original Design)

In the initial "push-only" design, the n8n workflow in the cloud decides what to display:

- The cloud workflow checks your calendar, email, and weather APIs

- An LLM summarizes the important information

- The summary gets pushed to the SmartPi display automatically

- You're a passive receiver — the device tells you what it thinks is important

The Pull Model (With Microphone)

Adding a microphone enables active queries:

- "What's my next meeting?"

- "Do I need a jacket today?"

- "Any urgent emails?"

- "Show me my afternoon schedule"

This transforms the SmartPi from a passive display into an interactive voice assistant. Voice is the most natural interface for several reasons:

- Hands-free operation: Ask questions while cooking, exercising, or working

- Quick queries: Faster than pulling out your phone or opening an app

- Context-specific: Ask only what you need, when you need it

- Ambient integration: The device sits on your desk like a smart assistant should

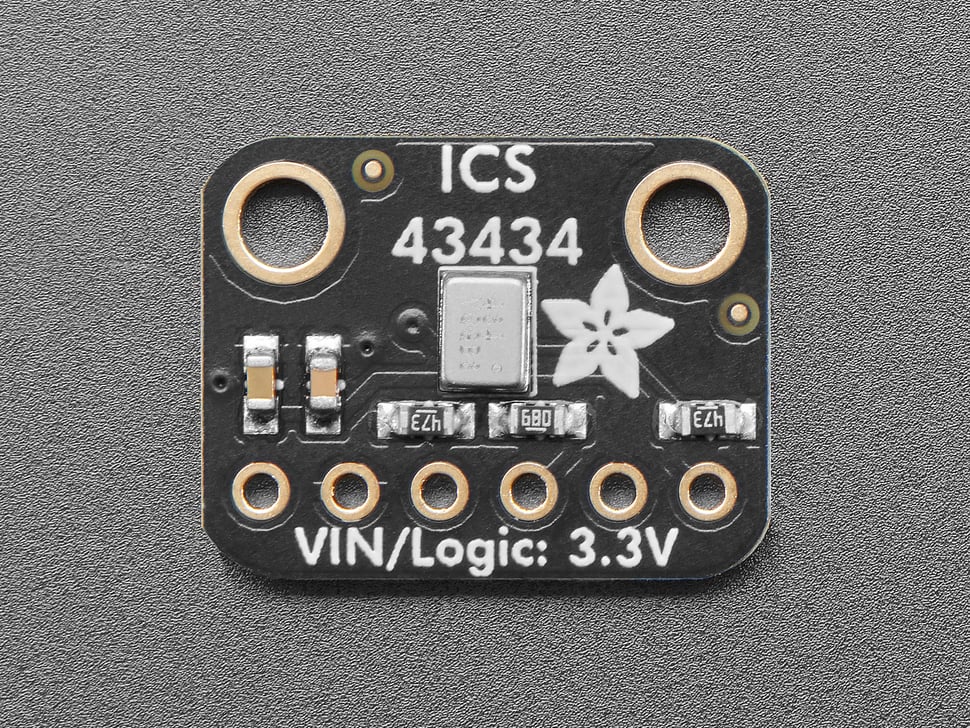

02 · Hardware: ICS-43434 I²S MEMS Microphone

Why Digital I²S Audio Input?

The Raspberry Pi Pico W's built-in 12-bit ADC is not well suited to high-quality audio capture. While you could use an analog microphone with an external ADC, I chose a fully digital audio input path using the I²S (Inter-IC Sound) protocol, for the same reasons I chose digital I²S for audio output.

Digital I²S microphones offer several key advantages:

- No external ADC needed: The microphone handles analog-to-digital conversion internally

- Noise immunity: Digital transmission is far less susceptible to electrical interference on the PCB than a low-level analog signal

- Consistent quality: No gain staging or amplifier tuning required

- Simplified PCB routing: Digital logic levels are much easier to route than sensitive analog signals

- High fidelity: Clean audio capture suitable for voice recognition

Component Selection: ICS-43434

The Adafruit ICS-43434 breakout carries TDK InvenSense's ICS-43434, a digital I²S MEMS (Micro-Electro-Mechanical Systems) microphone designed for embedded voice applications. Unlike analog microphones, which output a small voltage signal that needs amplification, the ICS-43434 captures sound with a tiny mechanical structure and converts it directly into digital I²S audio data.
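Here is what "digital I²S audio data" looks like in practice. The ICS-43434 sends each sample as a 24-bit two's-complement word, MSB first, inside a 32-bit slot. Each word-select period carries one slot per channel.

The sketch below makes three assumptions:

- The receiving driver hands over each slot as an unsigned 32-bit integer.
- The 24 data bits are left-aligned within that integer.
- Left and right slots arrive interleaved.

How a particular CircuitPython build or PIO program actually packs the data should be checked. The function names are hypothetical.

```python
def slot_to_sample(word):
    """Convert one left-aligned 32-bit I2S slot to a signed 24-bit sample."""
    word &= 0xFFFFFFFF          # treat the input as an unsigned 32-bit value
    sample = word >> 8          # drop the 8 padding bits, leaving 24 data bits
    if sample & 0x800000:       # sign bit of the 24-bit value is set
        sample -= 1 << 24       # convert from two's complement to a negative int
    return sample


def left_channel(slots):
    """Decode only the left (even-index) slots of interleaved L/R data."""
    return [slot_to_sample(w) for w in slots[0::2]]
```

With SEL tied low, the microphone drives its data only in the left slot. That is why `left_channel` keeps the even-indexed words and ignores the right-slot words.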

Technical Specifications

- Output: Digital I²S audio (WS, SCK, SD)

- Sensitivity: -26 dBFS (high sensitivity for voice)

- SNR: 65 dB (good signal-to-noise ratio)

- Supply Voltage: 1.62 V to 3.6 V (3.3 V nominal)

- Sample Rates: Supports up to 48 kHz

- Dynamic Range: 65 dB, suitable for voice recognition

- Footprint: Tiny breakout board, easy to integrate
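The sensitivity figure lets you predict sample values before recording anything. MEMS microphone sensitivity is quoted for a 94 dB SPL (1 Pa) reference tone, so a -26 dBFS part outputs roughly (SPL - 120) dBFS.

This minimal worked sketch makes three assumptions:

- The response is linear below the acoustic overload point.
- 0 dBFS means a full-scale 24-bit peak.
- 60 dB SPL, a commonly quoted level for conversational speech at about 1 m, is used only as an illustrative input.

```python
SENSITIVITY_DBFS = -26.0     # ICS-43434 output level for the reference tone
REF_SPL_DB = 94.0            # 1 Pa reference level used in mic sensitivity specs
FULL_SCALE = (1 << 23) - 1   # largest positive 24-bit sample value


def spl_to_dbfs(spl_db):
    """Expected digital level (dBFS) for a given sound pressure level (dB SPL)."""
    return SENSITIVITY_DBFS + (spl_db - REF_SPL_DB)


def dbfs_to_peak_counts(dbfs):
    """Approximate peak sample magnitude, assuming 0 dBFS = full-scale peak."""
    return round(FULL_SCALE * 10 ** (dbfs / 20))


speech_dbfs = spl_to_dbfs(60)                # conversational speech, illustrative
speech_peak = dbfs_to_peak_counts(speech_dbfs)
```

For 60 dB SPL this gives -60 dBFS, a peak of roughly 8,400 counts out of 8,388,607. That is well above the quantization floor but only about a thousandth of full scale. This is why voice-detection thresholds end up far below the maximum sample value.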

Why ICS-43434 is Ideal

Analog microphones require careful circuit design — you need the right amplifier gain, proper biasing, filtering to remove noise, and careful PCB layout to avoid picking up interference from nearby digital circuits. The ICS-43434 eliminates all of this:

- No amplifier needed: The preamplifier and ADC are built into the microphone package

- Digital output: Immune to the electrical noise that plagues analog signals

- Minimal external components: Just power supply decoupling capacitors

- Compact: Small enough to fit anywhere in the SmartPi enclosure

- Low power: Less than 1 mA operating current

For voice recognition applications, you don't need audiophile-grade performance — you need clean, intelligible speech capture. The ICS-43434 delivers exactly that.

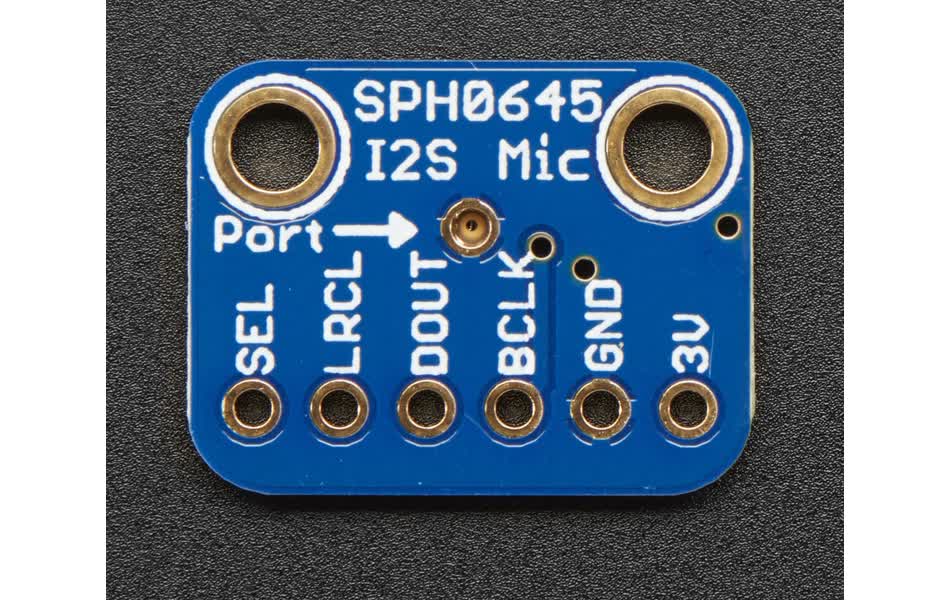

03 · Pin Connections to Pico W

Purpose and Configuration

Purpose: Converts sound directly into digital audio and sends it as I²S data to the Pico. The Pico processes it or forwards it to the cloud for speech recognition.

Power: The ICS-43434 runs from the Pico's 3.3 V rail, and its maximum supply is 3.6 V. Do NOT connect it to 5 V or you'll damage it. It draws under 1 mA, so it can be powered directly from the Pico's 3V3(OUT) pin.

Pin Connections

| ICS-43434 Pin | Pico W Pin | Pin # | Function |
|---|---|---|---|
| 3V3 (VDD) | 3V3(OUT) | 36 | Power supply (3.3 V) |
| GND | GND | 28 | Ground |
| WS (LRCLK) | GP22 | 29 | Word Select (Left/Right Clock) |
| SCK (BCLK) | GP26 | 31 | Bit Clock |
| SD (DOUT) | GP27 | 32 | Serial Data Output |
| SEL | GP28 | 34 | Channel select (Low = left, High = right) |
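Keeping the wiring table as data makes it easy to sanity-check before writing any audio code. In this minimal sketch, `MIC_PINS` and `validate_pins` are hypothetical names. The check relies only on one fact about the board: the Pico W header breaks out GP0 to GP22 and GP26 to GP28, while GP23 to GP25 and GP29 are used on-board.

```python
# Wiring from the table above, as GPIO numbers (hypothetical config layout).
MIC_PINS = {
    "WS": 22,   # word select / LRCLK
    "SCK": 26,  # bit clock / BCLK
    "SD": 27,   # serial data from the microphone
    "SEL": 28,  # channel select: drive low for the left slot
}

# GPIOs broken out to the Pico W header pins.
HEADER_GPIOS = set(range(0, 23)) | {26, 27, 28}


def validate_pins(pins):
    """Raise ValueError if a signal is missing, off-header, or shares a GPIO."""
    required = {"WS", "SCK", "SD", "SEL"}
    missing = required - set(pins)
    if missing:
        raise ValueError("missing signals: " + ", ".join(sorted(missing)))
    for name, gpio in pins.items():
        if gpio not in HEADER_GPIOS:
            raise ValueError(name + " uses GP" + str(gpio) + ", which is not on the header")
    if len(set(pins.values())) != len(pins):
        raise ValueError("two signals share the same GPIO")


validate_pins(MIC_PINS)  # passes silently for the wiring in the table
```

In CircuitPython the same numbers map onto `board.GP22`, `board.GP26`, and so on. Driving SEL low can be done with `digitalio.DigitalInOut(board.GP28)` configured as an output with value `False`.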

04 · Implementation Challenge: CircuitPython I²S Support

During initial testing of the ICS-43434 microphone, I encountered a critical issue:

AttributeError: 'module' object has no attribute 'I2SIn'

Root Cause: The CircuitPython build initially flashed to the Pico W did not include I²S audio input support. The `audiobusio` module was present, but the `audiobusio.I2SIn` class required for digital microphone input was missing.

Why This Happened

Each CircuitPython build has to fit within the board's flash memory, so not every feature is included in every build:

- ✅ Available: `audiobusio` module (base audio framework)

- ❌ Missing: `audiobusio.I2SIn` class (I²S microphone input)

- ✅ Available: `audiobusio.I2SOut` (I²S audio output for the speaker)

Why ICS-43434 Requires I²S

The ICS-43434 is a digital I²S microphone, not an analog device:

- ❌ Not analog → Cannot use the Pico's ADC pins

- ❌ Not PDM → Cannot use `audiobusio.PDMIn`

- ✅ I²S only → Requires `audiobusio.I2SIn` for digital communication

The Solution

Flash CircuitPython with I²S Input Support:

- Download the latest CircuitPython build (9.0.0+) from circuitpython.org

- Enter BOOTSEL mode (hold the BOOTSEL button while plugging in USB)

- Drag the .UF2 file onto the `RPI-RP2` drive

- Verify I²S support with a diagnostic script

Verification Test

After flashing the correct CircuitPython build, this import test should succeed:

```python
>>> import audiobusio
>>> audiobusio.I2SIn  # ← Success! (no AttributeError)
```

Lessons Learned

- Verify firmware capabilities early: Not all CircuitPython builds have all features

- Match hardware to firmware: Digital I²S devices need I²S firmware support

- Create diagnostic tools: Scripts that check for required modules save debugging time

- Document the fix: This issue is common enough to warrant detailed documentation
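The "create diagnostic tools" lesson can be as small as a script that runs before anything else. This minimal sketch uses only `__import__` and `hasattr`, so it runs on both CircuitPython and desktop Python. It only checks that the names exist, not that I²S input actually works with this wiring. The `REQUIRED` list and `check_features` helper are hypothetical names.

```python
# Features this project needs: (module, attribute), or None to check the module itself.
REQUIRED = [
    ("audiobusio", None),
    ("audiobusio", "I2SOut"),
    ("audiobusio", "I2SIn"),
]


def check_features(required):
    """Return {"module" or "module.attr": bool} without raising on missing features."""
    results = {}
    for module_name, attr in required:
        key = module_name if attr is None else module_name + "." + attr
        try:
            module = __import__(module_name)
        except ImportError:          # module absent from this firmware build
            results[key] = False
            continue
        results[key] = attr is None or hasattr(module, attr)
    return results


if __name__ == "__main__":
    for key, ok in check_features(REQUIRED).items():
        print(("OK      " if ok else "MISSING ") + key)
```

On a build without I²S input, this script would list `audiobusio.I2SIn` as MISSING. That surfaces the same gap as the AttributeError above, before any audio code runs.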

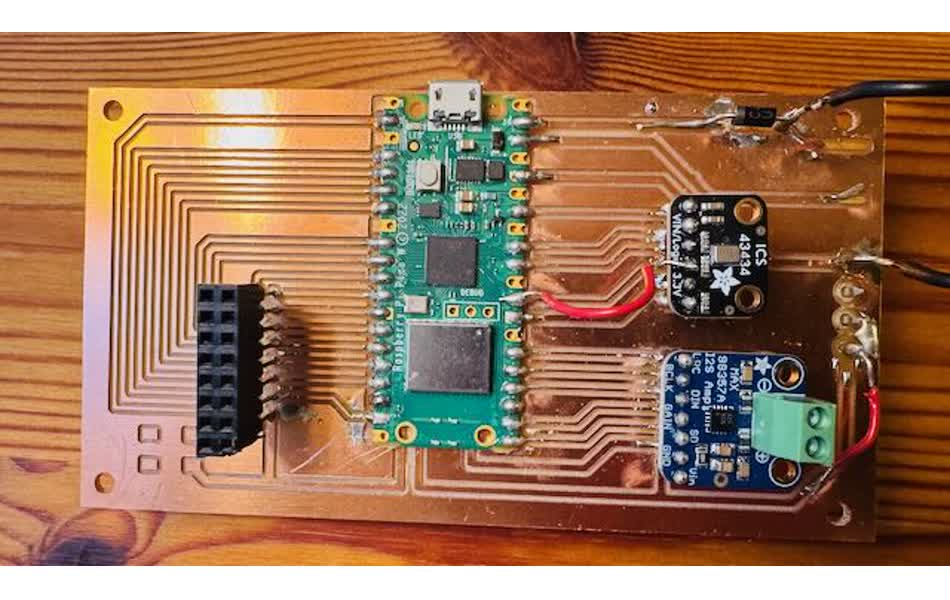

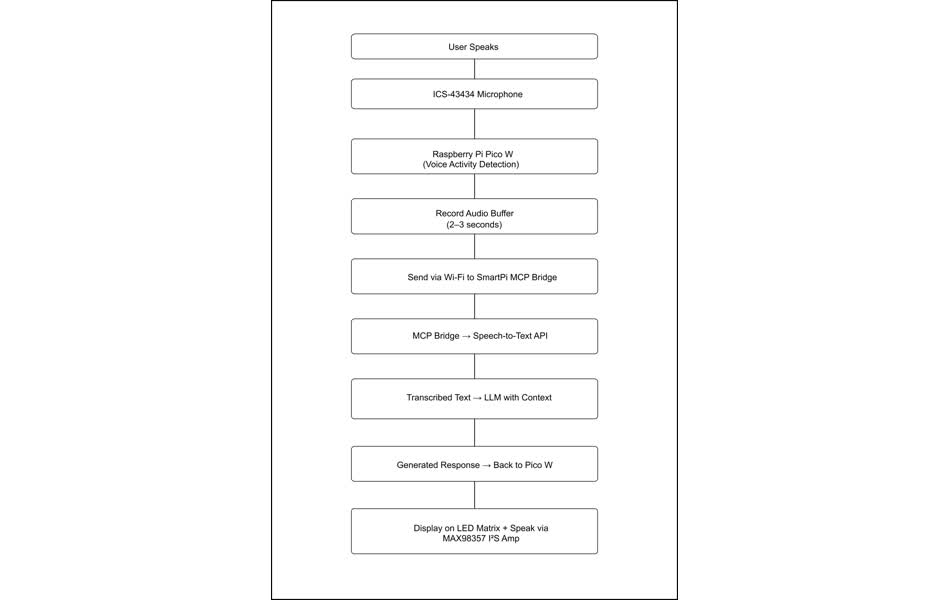

05 · System Architecture: Voice Interaction Loop

With the microphone working, the SmartPi now supports a full voice interaction loop:

- Voice Activity Detection: The Pico continuously monitors audio input, detecting when the user starts speaking

- Audio Capture: Once speech is detected, record a few seconds of audio

- Send to Cloud: Forward the audio to the n8n workflow or directly to a speech-to-text API (Google, OpenAI Whisper, etc.)

- LLM Processing: The transcribed question goes to an LLM that has context about your calendar, email, weather, etc.

- Response Generation: The LLM generates a specific answer to your query

- Display and Speak: The answer is displayed on the LED matrix AND spoken through the speaker
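Step 1 of this loop can start as a simple energy threshold rather than an ML model. This minimal sketch makes three assumptions:

- Frames are lists of already-decoded integer samples, for example 10 ms blocks.
- The `EnergyVAD` class name is illustrative.
- The threshold and hangover length are placeholder values that would need tuning against the room's noise floor.

The hangover keeps short pauses between words from ending the capture early.

```python
import math


def frame_rms(frame):
    """Root-mean-square level of one frame of integer samples."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


class EnergyVAD:
    """Energy-threshold voice activity detector with a hangover period."""

    def __init__(self, threshold, hangover_frames=10):
        self.threshold = threshold              # RMS level treated as speech
        self.hangover_frames = hangover_frames  # quiet frames tolerated before speech ends
        self._quiet = 0
        self.active = False

    def update(self, frame):
        """Feed one frame; return True while speech is considered active."""
        if frame_rms(frame) >= self.threshold:
            self.active = True
            self._quiet = 0
        elif self.active:
            self._quiet += 1
            if self._quiet > self.hangover_frames:
                self.active = False
                self._quiet = 0
        return self.active
```

On the Pico itself, a per-sample Python loop may be too slow at audio rates. CircuitPython's `ulab` (a NumPy-like module) is one option for computing the RMS over a whole buffer at once.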

Push vs. Pull: Two Modes of Operation

The SmartPi now operates in two complementary modes:

Push Mode (Proactive):

- The n8n workflow periodically checks your data sources

- Important events trigger automatic notifications

- "Meeting in 10 minutes"

- "Temperature dropping to 20°F tonight"

- Good for ambient awareness and proactive alerts

Pull Mode (Interactive):

- You initiate the interaction by speaking to the device

- Ask specific questions on demand

- "What's my next meeting?"

- "Do I have any urgent emails?"

- Good for context-specific queries and detailed information

Together, these modes create a natural assistant experience — it tells you what you need to know proactively, but you can also ask it questions whenever you want.
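When both modes share one LED matrix, something has to decide which message is shown next. This minimal sketch implements one possible policy: answers to spoken questions (pull) go first, then proactive notifications (push) in arrival order. The `DisplayArbiter` class and its methods are hypothetical; they are not part of the n8n workflow or any library.

```python
class DisplayArbiter:
    """Pick the next message for the display when push and pull both have content."""

    def __init__(self):
        self._push = []   # proactive notifications, oldest first
        self._pull = []   # answers to the user's spoken queries, oldest first

    def add_push(self, text):
        self._push.append(text)

    def add_pull(self, text):
        self._pull.append(text)

    def next_message(self):
        """Return the next message to show, or None if nothing is pending."""
        if self._pull:                 # the user is waiting on this answer
            return self._pull.pop(0)
        if self._push:
            return self._push.pop(0)
        return None
```

Prioritizing pull answers reflects that the user is actively waiting for them. A fuller version might also expire stale push messages, such as a "Meeting in 10 minutes" alert once the meeting has started.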

Future Enhancements

With the microphone hardware in place, several exciting capabilities become possible:

- Wake word detection: "Hey SmartPi" to activate the assistant (requires an on-device ML model)

- Local speech recognition: For simple commands, use on-device recognition instead of cloud APIs

- Voice commands: "Set a timer", "Remind me in an hour", etc.

- Ambient sound monitoring: Detect doorbells, alarms, or other important sounds

- Continuous conversation: Multi-turn dialogue with context retention

06 · Design Considerations

Microphone Placement

The physical location of the microphone matters for voice pickup quality:

- Clear path to user: Mount the microphone facing forward, not blocked by other components

- Avoid noise sources: Keep it away from the switching power supply, LED matrix, and speaker to minimize interference

- Acoustic design: Consider adding a small acoustic port or windscreen to improve pickup

Power Supply Considerations

Even digital microphones are sensitive to power-supply noise, because the analog front end inside the package runs from the same supply:

- Added decoupling capacitors (10 µF and 100 nF) close to the microphone's VDD pin

- Used the Pico's clean 3.3 V rail, not the noisy 5 V VBUS

- Kept power traces short and wide

PCB Layout

In the SmartPi PCB V3 design, the ICS-43434 is positioned:

- Separate from high-current LED matrix traces

- On the opposite side of the board from the switching amplifier

- With a solid ground plane underneath to minimize EMI

- On header pins for easy testing and replacement

Software Challenges

Voice input adds software complexity:

- Voice Activity Detection: Need to distinguish speech from background noise

- Buffering: Must capture enough audio for recognition without excessive latency

- Network latency: Sending audio to cloud APIs takes time, so the device needs immediate local feedback while it waits

- Error handling: What happens if Wi-Fi drops during a query?
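For the buffering challenge, it pays to do the arithmetic up front. Three seconds of 16 kHz, 16-bit mono audio is 96,000 bytes. That is a large fraction of the RP2040's 264 KB of SRAM, and CircuitPython's heap is smaller still.

This minimal sketch makes two assumptions:

- The 24-bit microphone samples are reduced to 16 bits and captured (or downsampled) at 16 kHz mono, a common input format for speech-to-text services. The chosen API's requirements should be checked.
- The function names are illustrative.

The sketch also builds the standard 44-byte WAV header, so the raw buffer can be uploaded as a .wav file.

```python
import struct


def capture_bytes(sample_rate, seconds, bytes_per_sample=2, channels=1):
    """RAM needed to hold a raw PCM capture of the given length."""
    return int(sample_rate * seconds) * bytes_per_sample * channels


def wav_header(num_data_bytes, sample_rate=16000, bits=16, channels=1):
    """Build the 44-byte RIFF/WAVE header for uncompressed PCM data."""
    byte_rate = sample_rate * channels * bits // 8
    block_align = channels * bits // 8
    return struct.pack(
        "<4sI4s4sIHHIIHH4sI",
        b"RIFF", 36 + num_data_bytes, b"WAVE",       # RIFF chunk
        b"fmt ", 16, 1, channels, sample_rate,       # fmt chunk, PCM = format 1
        byte_rate, block_align, bits,
        b"data", num_data_bytes,                     # data chunk header
    )


needed = capture_bytes(16000, 3)   # 3 s of 16 kHz, 16-bit mono
header = wav_header(needed)
```

Sending `header` followed by the sample buffer gives a complete WAV file. Dropping the lowest 8 bits of each 24-bit sample is the simplest way to reach 16-bit audio.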

07 · Conclusion

Adding audio input via the ICS-43434 I²S MEMS microphone transforms the SmartPi Agentic Assistant from a passive information display into an interactive voice assistant. Choosing digital I²S input over an analog microphone improves noise immunity and removes the need for an external preamplifier and ADC. Integrating it with the existing system architecture enables "pull mode" queries alongside the "push mode" notifications.

This implementation demonstrates how input devices fundamentally change the interaction model of embedded systems. By adding just one sensor, we've enabled natural voice interaction, making the SmartPi Assistant truly useful in hands-free, eyes-free scenarios. The device is no longer just telling you things — you can have a conversation with it.