HMM: Hidden Spatial States of Rents in NYC

Update: May 19, 2014

Voila!

Final Presentation here.

Viterbi Algorithm here.

Rand.py here.

-----------------------------------------------------------------------------------

Update: May 9, 2014

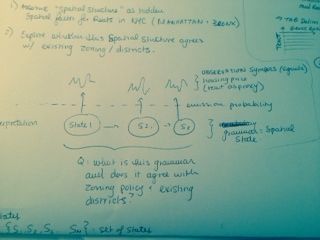

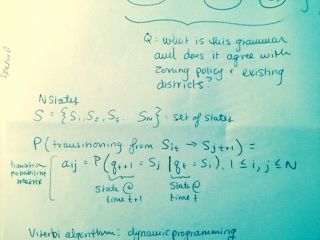

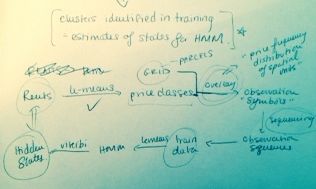

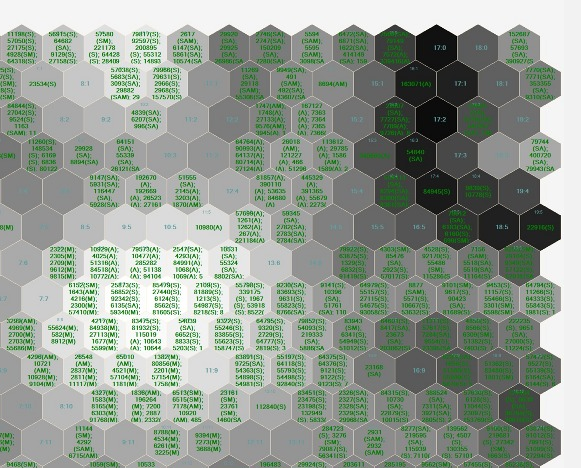

Final Problem Statement: Does rent in NYC have a hidden spatial state?

Using Hidden Markov Modeling (HMM), I am investigating hidden spatial states of rental prices in Manhattan. This process assumes that an underlying "spatial structure" contributes to the rental price patterns observed as "signals" in the city. If the HMM successfully uncovers a grammar of spatial states, does that spatial pattern agree with existing zoning policy (affordable housing districts, historic districts, overlays, etc.)? Snapshots of my design process follow in the earlier updates below.
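As a rough illustration of the decoding step, here is a minimal Viterbi sketch in Python. It is not the linked script: the two state names, the three rent bands, and every probability are made-up placeholders, and it assumes rents have already been binned into bands along an ordered sequence of locations.

```python
import math

def viterbi(observations, states, start_p, trans_p, emit_p):
    """Return the most likely hidden-state path for a sequence of observations."""
    # best[t][s]: log-probability of the best path that ends in state s at step t
    best = [{s: math.log(start_p[s]) + math.log(emit_p[s][observations[0]])
             for s in states}]
    back = [{}]  # back[t][s]: predecessor of s on that best path
    for t in range(1, len(observations)):
        best.append({})
        back.append({})
        for s in states:
            prev, score = max(
                ((p, best[t - 1][p] + math.log(trans_p[p][s])) for p in states),
                key=lambda pair: pair[1],
            )
            best[t][s] = score + math.log(emit_p[s][observations[t]])
            back[t][s] = prev
    # Trace the best final state back to the start
    path = [max(states, key=lambda s: best[-1][s])]
    for t in range(len(observations) - 1, 0, -1):
        path.append(back[t][path[-1]])
    path.reverse()
    return path

# Hypothetical model: two hidden spatial states emitting binned rent levels.
states = ("regulated", "market")
start_p = {"regulated": 0.5, "market": 0.5}
trans_p = {
    "regulated": {"regulated": 0.8, "market": 0.2},
    "market": {"regulated": 0.2, "market": 0.8},
}
emit_p = {
    "regulated": {"low": 0.7, "mid": 0.2, "high": 0.1},
    "market": {"low": 0.1, "mid": 0.3, "high": 0.6},
}
rents = ["low", "low", "high", "high"]  # placeholder observation sequence
decoded = viterbi(rents, states, start_p, trans_p, emit_p)
print(decoded)
```

Working in log-probabilities avoids numerical underflow on long sequences. The sketch assumes no probability is exactly zero, since `math.log(0)` raises an error.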

------------------------------------------------------------------------------------

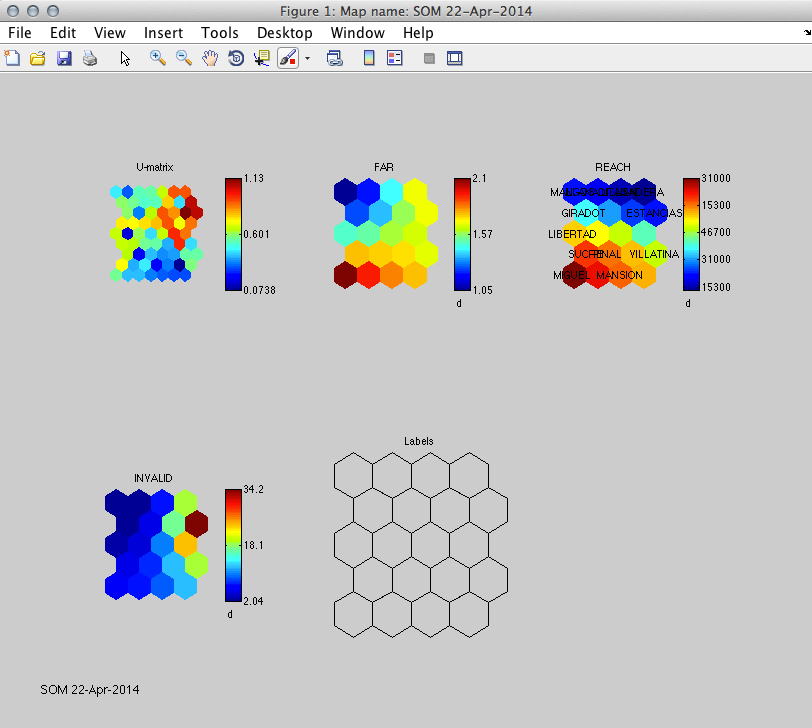

Update: April 22, 2014

Preliminary SOM visualizations with test data. Great to know the process works. Now I have to make some decisions about how to format the data, whether by building parcel or by neighborhood, and about how to interpret this graph, even though it's just test data.

The M file I wrote for the SOM is available here.

--------------------------------------------------------------------------------------

Update: April 14, 2014

SOM 2010, Emily Royall & Vivek Patel

Made some changes to the initial conception of this project after reading up on projection pursuit. I learned that what I had initially conceived of, Exploratory Factor Analysis, is more like a prior analysis performed in service of some sort of visualization. I'm really interested in seeing urban data in new ways, with particular emphasis on hidden relationships between socio-economic factors. In my research I found a variety of ways to do that, notably Self-Organizing Maps (SOM).

I used SOM once before, several years ago, to visualize orthologous relationships between model organisms; the orthology-relationship architecture was undetectable through phenotype observation. I thought it could be interesting to apply a similar method to some of the urban data I have on Medellin.

I plan on doing this in MATLAB, and have formatted a test data file to feel my way through

the process. I'm using SOM Toolbox for Matlab 5 (Documentation).

The current test file is available here.

Currently the file contains three variables (Floor-Area Ratio, Reach and Disability) with neighborhood labels (there are 18 neighborhoods). One conflict is that some variables are described at the neighborhood level and others at the parcel level. I took the average Reach for each neighborhood, since Disability and the other demographic factors are collected at that scale. While this resolves the conflict, it reduces the data set from roughly 60,000 parcels to 18 observations. I may run it both ways to see what the difference is.
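The averaging step can be sketched in a few lines of Python. The neighborhood codes and Reach values here are invented placeholders, and the sketch assumes each parcel record already carries its neighborhood label.

```python
from collections import defaultdict
from statistics import mean

def mean_by_neighborhood(parcels):
    """Collapse (neighborhood, reach) parcel records to one mean per neighborhood."""
    groups = defaultdict(list)
    for neighborhood, reach in parcels:
        groups[neighborhood].append(reach)
    return {name: mean(values) for name, values in groups.items()}

# Placeholder records, not values from the Comuna 8 dataset.
parcel_reach = [("N01", 1200.0), ("N01", 1800.0), ("N02", 400.0)]
neighborhood_reach = mean_by_neighborhood(parcel_reach)
print(neighborhood_reach)
```

A mean hides the within-neighborhood spread of Reach, which is one reason to compare this against a parcel-level run.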

The next step is to normalize the data, and possibly to preprocess it with k-means. If this works well I may be able to push out a variety of other visualizations. I'm also wondering if it's possible to write a Grasshopper plugin...
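One common way to do the normalization is to rescale each variable to zero mean and unit variance (a z-score), so that a variable with a large range such as Reach does not dominate the map. This is a minimal sketch of that choice, not necessarily the method the toolbox applies; the column names and values are placeholders.

```python
from statistics import mean, pstdev

def zscore(values):
    """Rescale a list of numbers to zero mean and unit (population) variance."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

# Placeholder table: one list per variable, one entry per neighborhood.
table = {
    "far": [1.0, 2.0, 3.0],
    "reach": [400.0, 1500.0, 2600.0],
}
normalized = {name: zscore(column) for name, column in table.items()}
print(normalized)
```

After this step both columns have the same scale, even though the raw Reach values are hundreds of times larger than the Floor-Area Ratio values.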

-----------------------------------------------------------------------------------

Abstract

Informal settlements showcase the successes and failures of organic, unregulated growth. In most contexts, informal

settlement is marked by the absence of hierarchical planning and top-down urban design intervention. Moreover, growth

typologies in informal settlements are particularly robust and resistant to low-resolution interventions that fail to integrate,

take advantage of, or harmonize with existing mechanisms of growth within informal communities.

For the designer interested in improving quality of life or planning for successful city-scale interventions, the complex relationship between socio-economic factors and the built environment makes informal growth challenging to work with. Some hypothesize that these demographic factors are themselves manifested in the urban fabric; others argue that development occurs in direct response to topographical constraints. Regardless, despite their organic and often chaotic appearance, there is an underlying logic and structure to informal growth. This latent structure is masked by a tangled web of confounding factors (social, economic, physical and political) surrounding urban development in slums.

Part of my research involves exploring the applicability of statistical and modeling tools employed in the bio-computational and neurosciences to urban systems. As cities generate more and more data, finding new tools for analysis becomes increasingly important. Because of their organic properties, informal settlements may especially lend themselves to analysis using tools derived from biological science. For this project, I propose to run an exploratory, and possibly confirmatory, factor analysis (structural equation modeling, or SEM) on perceptions of accessibility in informal settlements. The idea is to determine if there are structural correlations between a variety of factors at different scales that determine the rated connectivity of a building. The project is limited by the data available for the region, and so for me this is more of an exploration of process. I'm mostly interested in whether or not it's possible to use latent variable modeling to describe an urban system. A successful project for me would be a factor analysis that actually works within the constraints of a typical urban data environment, i.e., a streamlined process for moving a GIS dataset into factor analysis in R.

Methods & Building Blocks

Tools:

-ArcGIS

-Urban Network Analysis Toolkit

-R

Perceptions of connectivity are measured with the Centrality Tool, part of the Urban Network Analysis Toolkit (open source via City Form Lab). The Reach measure is computed for each building parcel in Comuna 8, a district of Medellin, Colombia. It describes how many surrounding buildings are accessible within a search radius r along the road network. Each building receives a rating between 0 and 4,000, where 0 represents no accessibility and 4,000 the highest accessibility. There are currently approximately 60,000 parcels in the dataset.

Reach is calculated by the following:

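$$R^{r}[i] = \left|\{\, j \in G - \{i\} : d[i,j] \le r \,\}\right|$$

where $R^{r}[i]$ is the Reach centrality of building $i$, $j$ ranges over the other buildings in the network graph $G$, $d[i,j]$ is the shortest-path distance between $i$ and $j$ along the network, and $r$ is the search radius.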
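To make the definition concrete, here is a small Python sketch that computes Reach on a toy network with Dijkstra's algorithm. It is an illustration of the formula, not the toolkit's implementation: the graph, the edge lengths, and the simplification that every node is a building are all assumptions.

```python
import heapq

def reach(graph, source, radius):
    """Count the other nodes whose shortest-path distance from source is <= radius."""
    dist = {source: 0.0}
    heap = [(0.0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry; a shorter path was already found
        for neighbor, length in graph[node].items():
            nd = d + length
            # Only keep paths that stay within the search radius
            if nd <= radius and nd < dist.get(neighbor, float("inf")):
                dist[neighbor] = nd
                heapq.heappush(heap, (nd, neighbor))
    return len(dist) - 1  # exclude the source building itself

# Toy undirected network; edge lengths are hypothetical distances in meters.
toy_network = {
    "A": {"B": 100.0, "D": 600.0},
    "B": {"A": 100.0, "C": 150.0},
    "C": {"B": 150.0, "D": 300.0},
    "D": {"C": 300.0, "A": 600.0},
}
print(reach(toy_network, "A", 300.0))
```

From A with a 300 m radius, B (100 m) and C (250 m) are reachable but D (550 m by the shortest route) is not, so the Reach of A is 2.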

The City of Medellin has collected a variety of demographic factors on Comuna 8. Factors were mined from the City's

archives and joined to GIS shapefiles and include:

-Land Use (UsosSuelo)

-Density

-Activity: populations of students, self-employed, unemployed, invalid, working or retired

-Age

-Income

-Disability

Demographic factors are collected at the neighborhood level, but Reach is measured for individual parcels. There are 18 neighborhoods within Comuna 8, each of which contains several hundred individual building parcels. To format the data for factor analysis in R, I spatially joined the neighborhood-level datasets to the individual building parcels. Thus, each building parcel is assigned the neighborhood-level value of each social demographic. I'm not sure what kind of effect this will have on the analysis, but it's the only way I can think of to integrate the connectivity metric and demographic data into a single table. The result is an Excel table that includes these values for each building parcel, as well as a geographically located shapefile containing this data.
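The table produced by that join has a simple shape, which this Python sketch mimics with plain dictionaries. It assumes the spatial step has already labeled each parcel with its neighborhood; the field names, neighborhood codes, and values are hypothetical.

```python
def join_to_parcels(parcels, neighborhood_stats):
    """Copy each neighborhood's demographic values onto every parcel inside it."""
    rows = []
    for parcel in parcels:
        stats = neighborhood_stats.get(parcel["neighborhood"], {})
        rows.append({**parcel, **stats})  # parcel fields plus neighborhood fields
    return rows

# Placeholder inputs, not values from the Comuna 8 dataset.
neighborhood_stats = {
    "N01": {"income": 2.0, "density": 310.0},
    "N02": {"income": 3.5, "density": 180.0},
}
parcels = [
    {"parcel_id": 1, "neighborhood": "N01", "reach": 1200.0},
    {"parcel_id": 2, "neighborhood": "N01", "reach": 1800.0},
    {"parcel_id": 3, "neighborhood": "N02", "reach": 400.0},
]
joined = join_to_parcels(parcels, neighborhood_stats)
for row in joined:
    print(row)
```

Parcels 1 and 2 end up with identical income and density values. In the joined table the demographic columns therefore vary only between neighborhoods, while Reach varies between parcels.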

Below are links to the current Excel file produced by the spatial join in GIS, as well as the joined shapefile itself:

Excel

Shapefile

The next step will be to develop a factor analysis in R and import this data. SEM is popular in neuroscience for finding hidden correlations contributing to specific brain activity. I thought about modifying this code, which is interesting because it works on neuroimaging data. I wonder if it would ever be possible to import maps analogously and run analyses on them, or even compare results through a crowdsourced network like brainmap.org. In the end I chose R because I wanted to learn it, and because there are some existing tutorials on factor analysis in R. MATLAB is another potential resource.

To do: Learn how to import data into R. Understand PCA.

Previous Work

The only use of factor analysis for urban planning I've seen is by Daniel O'Brien at the Boston Area Research Initiative:

Ecometrics

Helpful work on identifying and quantifying urban growth metrics:

CFL Urban Expansion

Resources

Here are some helpful resources I've tracked down:

FA in R Video

Social Science Example

Description of the factanal function

More Questions

Is it possible to clip ACS census tract data by parcel?

What kind of formatted data inputs would an exploratory factor analysis in R require?

How do I feed my R code real data?

What is PCA?

Is it better to perform this analysis at the neighborhood level, i.e., to assign each neighborhood the average of its parcel-level connectivity values?

What are my options for visualization?

What is varimax vs. promax rotation?

How will I interpret factor loads?