Week 10

Mechanical & Machine Design

Week Highlights

Snapshot of this week's mechanical design, machine building, and midterm review milestones.

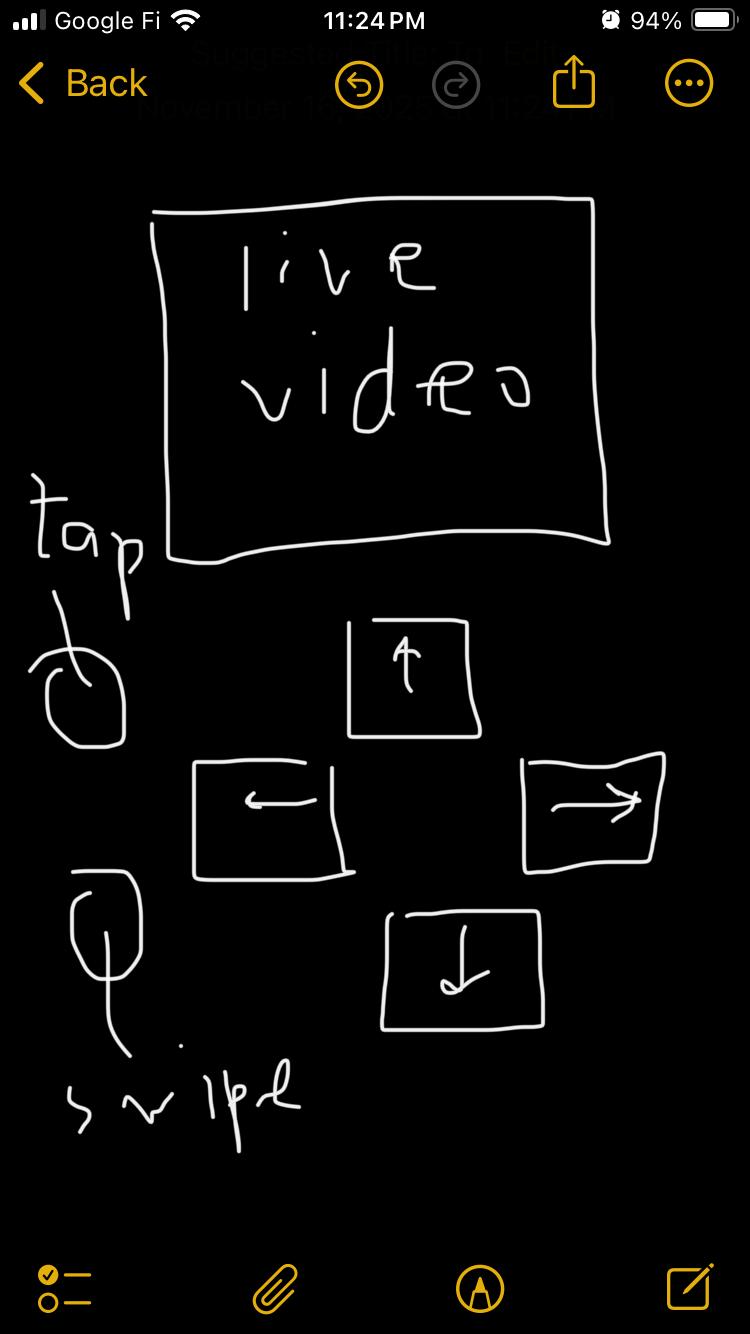

Swiper & Tapping Mechanisms

Swiper mechanism and coordinated tapping/swiping automation for phone interaction.

Person Follower System

Real-time person tracking with following and stop behaviors for interactive machine control.

Full System Integration

Complete actuation and automation system with all subsystems integrated and coordinated.

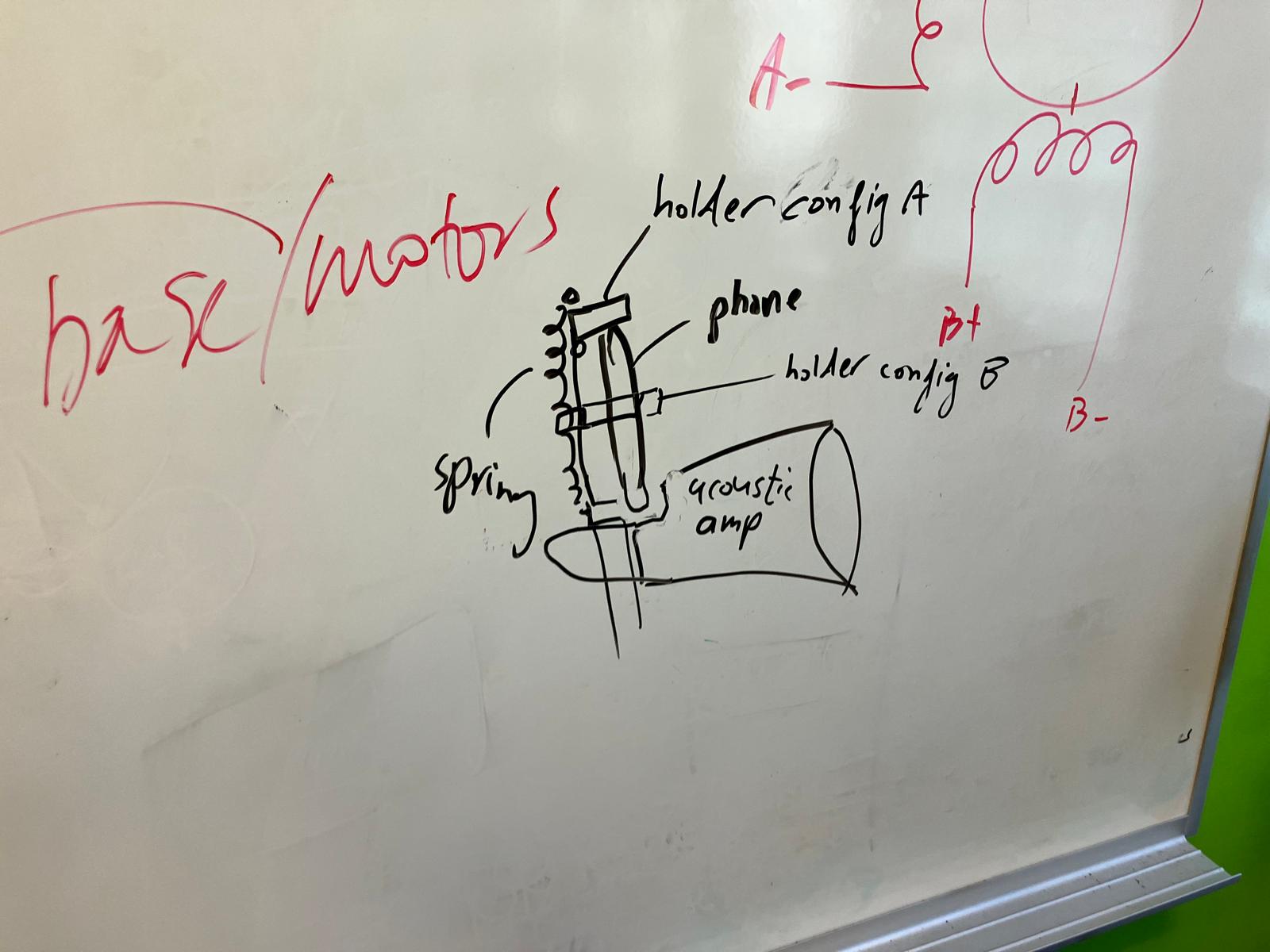

Phone Holder & Amplifier

Spring-loaded phone holder mechanism and 3D-printed components.

Actuation Systems

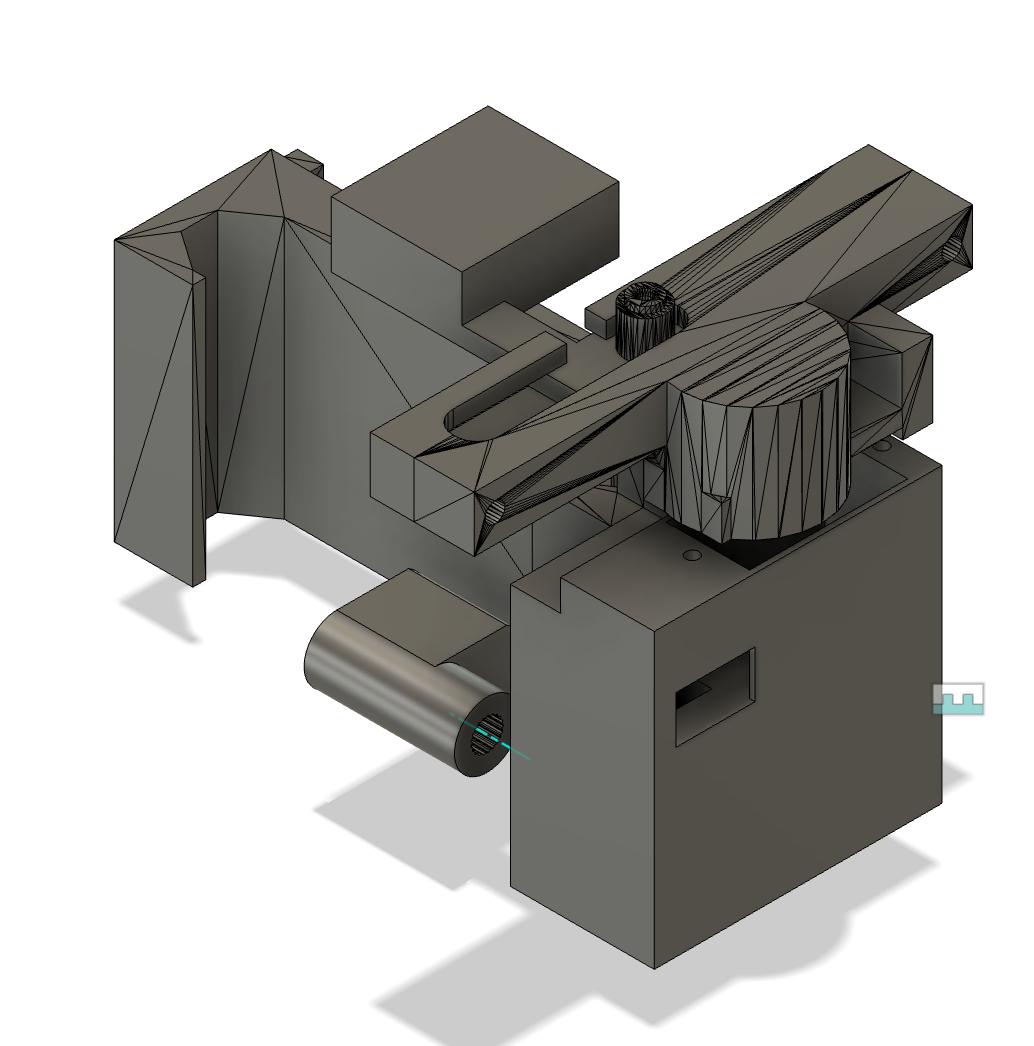

Servo gear system and linear actuator stylus mechanism.

Camera & Edge AI

Wi-Fi livestreaming and on-device face detection with Edge AI.

Servo Motor Controls

Dual servo opposite-direction sweep pattern for synchronized tapping and swiping mechanisms.

4-Step Motion Test

Synchronized 4-step motion pattern (0° → 90° → 180° → 90° → 0°) for coordinated actions.

Tapper & Swiper Enclosures

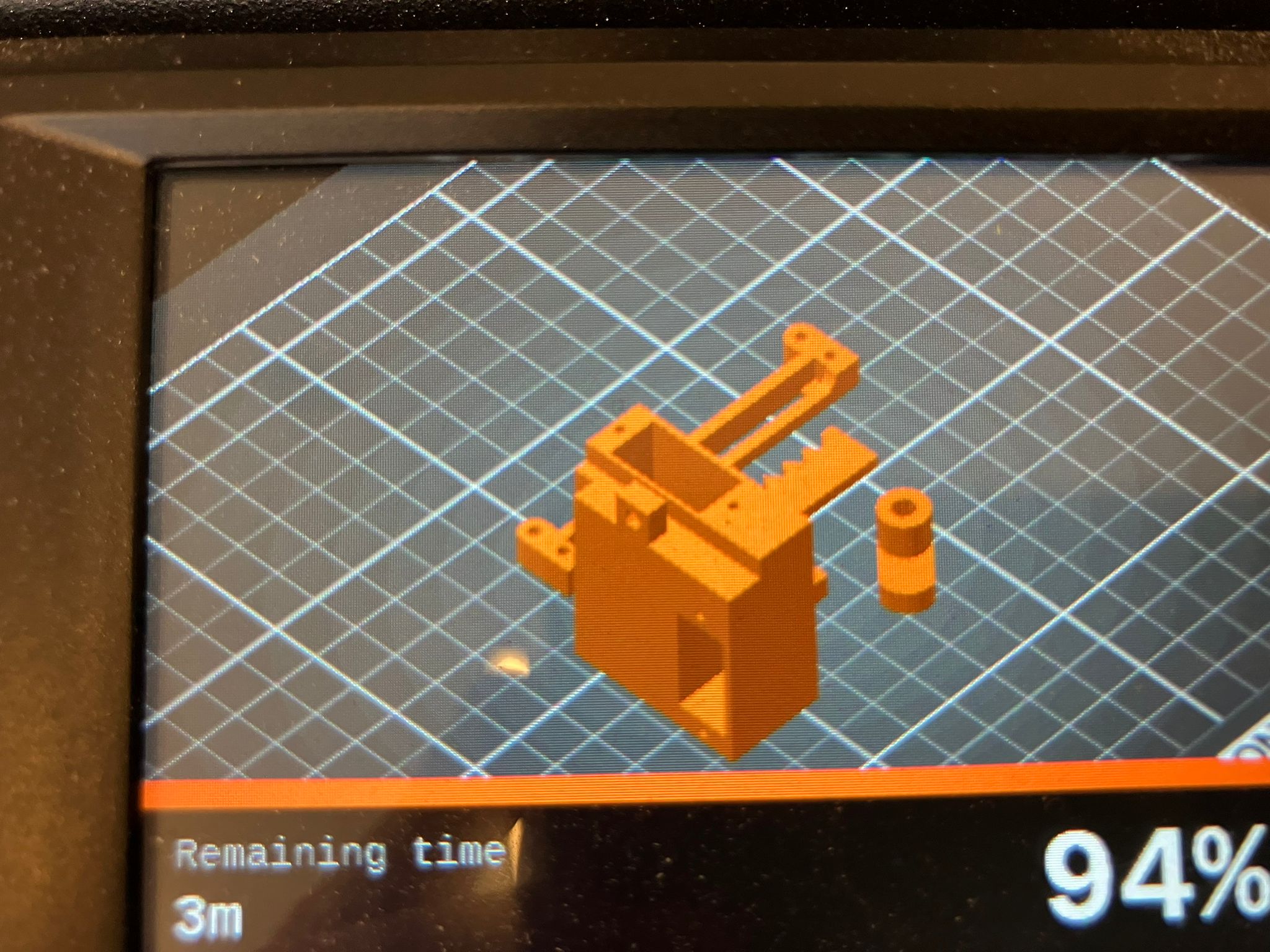

3D-printed tapper and swiper enclosures with integrated servo mounts and motion guides.

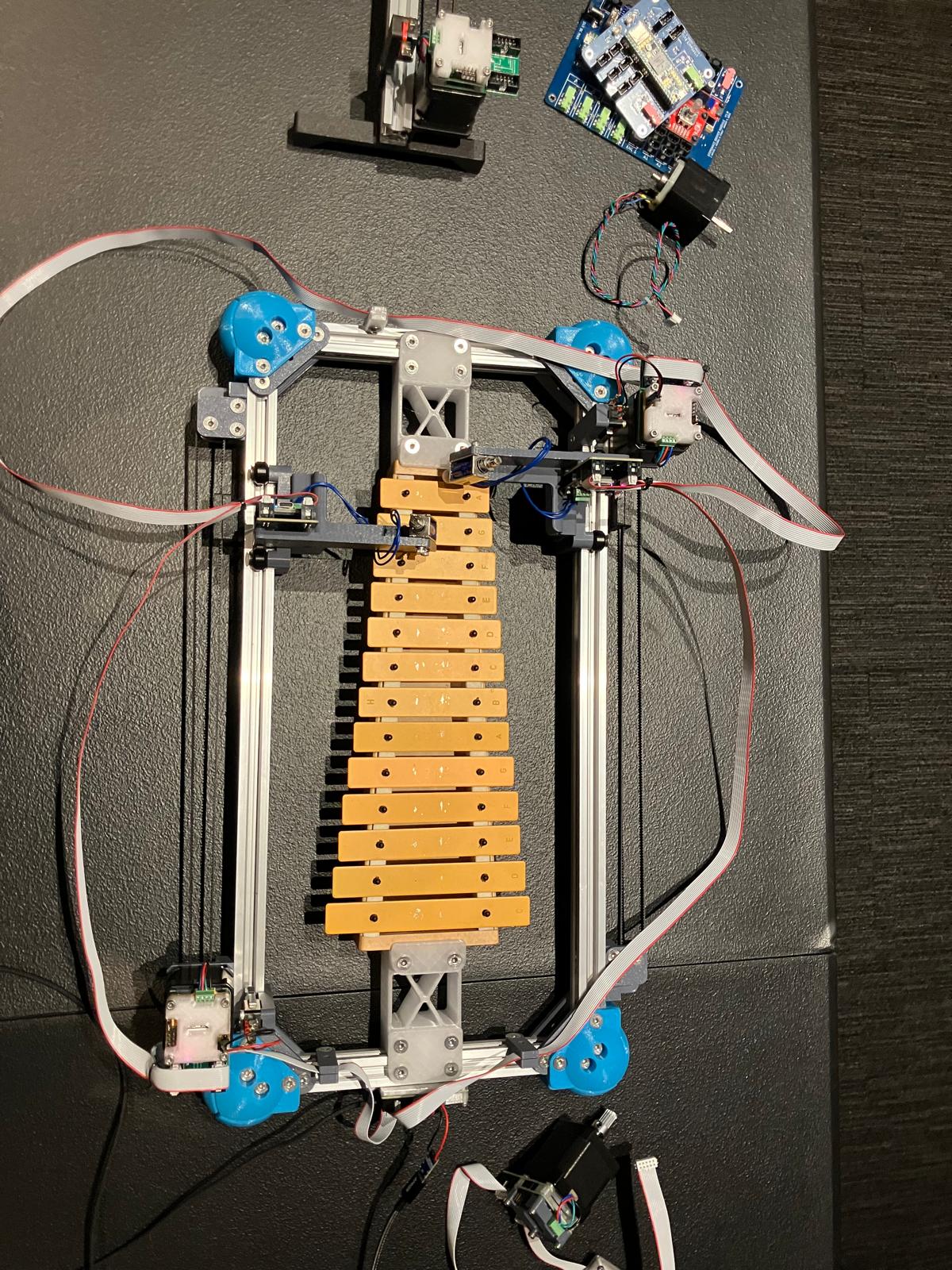

Machine Building Training

Machine building training session with xylophone demonstration.

Midterm Review Documentation

System diagram and development timeline for midterm review.

Injection Molding Training

Injection molding process overview with Dan covering mold design and machine operation.

Week Overview

Machine building principles, injection molding processes, mechanical design fundamentals, and midterm review preparation for final project documentation.

Focus

Design and build a machine with mechanism, actuation, automation, function, and user interface. Prepare comprehensive midterm review documentation.

Key Skills

Mechanical design principles, stepper motor control, real-time motion systems, injection molding workflows, and project planning.

Deliverables

Group machine design and manual operation, recitation notes on machine building kits, injection molding training summary, and individual midterm review documentation.


Core Resources

Primary references for mechanical design, machine building, and midterm review requirements.

Mechanical Design

The MIT Mechanical Design overview covers stress-strain relationships, materials selection (plastic, metal, rubber, foam, garolite, wood, cement, ceramic), fasteners, framing systems, drive mechanisms (gears, lead screws, belts), guide systems (shafts, rails, slides), bearings, and mechanical principles (academy.cba.mit.edu).

- Vendor resources: McMaster-Carr, Stock Drive Products, Amazon Industrial, Misumi.

- Key principles: stiffness, strength, hardness, friction, backlash, force loops, elastic averaging, kinematic coupling.

- Mechanisms: flexures, linkages, pantographs, deltabots, hexapods, CoreXY, and more.

Machine Design

The Machine Design page covers mechanisms, structural loops, sensors, actuators, end effectors, power electronics, motion control (open-loop, closed-loop), control theory (bang-bang, PID, acceleration, model predictive), timing protocols, and machine control systems (academy.cba.mit.edu).

- Control systems: Grbl, grblHAL, Marlin, Duet3D, cncjs, FabMo, and custom solutions.

- Path planning: static and dynamic motion control strategies.

- File formats and design representation for machine control.

Midterm Review Requirements

The Midterm page outlines required deliverables for the final project review (academy.cba.mit.edu).

- Post a system diagram for your project.

- List the tasks to be completed.

- Make a schedule for doing them.

- Schedule a meeting with instructors for a graded review of these and your weekly assignments.

Recitation · Machine Building Kits

Refined notes from Quentin Bolsee's machine building recitation, anchored to the Slack recap (Slack).

Resources

- Main repository: machineweek-2025 — hardware kits and documentation.

- Control system: machineweek-2025-control — networking and control implementation.

- Hardware kits: Available at the bottom of the main repository page, section-dependent boards.

- Modular Things: modular-things.com — stepper modules and components.

Control System Architecture

The control system uses a byte-passing protocol for device communication instead of address hopping.

- Protocol: Each byte carries seven data bits; the first bit is reserved for networking. If the first bit is 1, the byte is addressed to the receiving device, which consumes and processes it, then sets the first bit to 0 and passes the byte to the next device.

- Sequence: The number of bytes in a sequence equals the number of devices, so each device receives one byte per packet.

- Performance: 1000 packets per second, where each packet is n bytes for n devices.

- Example: Acceleration demo uses a socket that takes 20V from USB (requires USB port that can provide it; normal USB ports provide 5V).
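The byte-passing scheme described above can be modeled on a host computer. The following is a minimal Python sketch, not the machineweek-2025-control implementation. It assumes that the reserved "first bit" is the most significant bit, that each device consumes only the first flagged byte it sees in a packet, and that unflagged bytes are forwarded unchanged. The names `make_packet`, `device_step`, `run_chain`, and `min_baud` are hypothetical, and the 10-bits-per-byte serial framing in `min_baud` is also an assumption.

```python
FLAG = 0x80     # assumption: the reserved "first bit" is the most significant bit
PAYLOAD = 0x7F  # the remaining seven data bits


def make_packet(values):
    """Build one packet: one flagged byte per device, seven data bits each."""
    for v in values:
        if not 0 <= v <= PAYLOAD:
            raise ValueError("payload must fit in seven bits")
    return bytes(FLAG | v for v in values)


def device_step(packet):
    """Simulate one device in the chain.

    Consumes the first flagged byte, clears its flag, and forwards the packet.
    Returns (consumed_payload_or_None, forwarded_packet).
    """
    out = bytearray(packet)
    for i, b in enumerate(out):
        if b & FLAG:
            out[i] = b & PAYLOAD  # clear the flag so later devices skip this byte
            return b & PAYLOAD, bytes(out)
    return None, bytes(out)  # nothing addressed to this device


def run_chain(values):
    """Pass one packet down a chain with as many devices as bytes."""
    packet = make_packet(values)
    received = []
    for _ in values:
        payload, packet = device_step(packet)
        received.append(payload)
    return received, packet


def min_baud(n_devices, packets_per_s=1000, bits_per_byte=10):
    """Rough serial rate needed; 10 bits per byte assumes 8N1 framing."""
    return n_devices * packets_per_s * bits_per_byte
```

Under these assumptions, `run_chain([10, 20, 30])` delivers 10 to the first device, 20 to the second, and 30 to the third, and the packet leaving the chain has every flag cleared.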

Real-Time Control Examples

- Xylophone control: StepDance documentation shows static and real-time control examples. "When you control your machine in realtime, it's a special feeling!"

- Realtime vs synchronous: Understanding the difference between embedded and virtual control systems.

- Flexible vs rigid: Trade-offs in system design for different applications.

Stepper Motors

Stepper motor control involves understanding signals for position and its successive time derivatives: velocity, acceleration, jerk, snap, crackle, and pop. Reference: Stepper Motor Video.

- G-code interpretation: Communication with the computer and step generation/interpolation at 25 kHz.

- Blocking operations: Avoid anything blocking in the main loop to maintain real-time performance.

- Control paradigms: Flexible vs rigid systems, embedded vs virtual implementations.
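One common way to generate steps at a fixed tick rate without blocking is a phase accumulator: every tick does a constant amount of work and emits at most one step. The sketch below is a host-side Python illustration of that idea at the 25 kHz rate quoted above. It is not the recitation's firmware, and the function name `steps_for_velocity` is hypothetical.

```python
TICK_HZ = 25_000  # step-generation rate quoted in the recitation notes


def steps_for_velocity(velocity_steps_per_s, duration_s, tick_hz=TICK_HZ):
    """Return the tick indices at which a step pulse is emitted.

    Each tick adds the commanded velocity to an accumulator; whenever the
    accumulator reaches tick_hz, one step is emitted and tick_hz is subtracted.
    """
    if not 0 <= velocity_steps_per_s <= tick_hz:
        raise ValueError("this sketch emits at most one step per tick")
    ticks = int(round(duration_s * tick_hz))
    acc = 0
    pulses = []
    for t in range(ticks):
        acc += velocity_steps_per_s
        if acc >= tick_hz:
            acc -= tick_hz
            pulses.append(t)
    return pulses
```

At 1000 steps per second this emits one pulse every 25 ticks. Changing the velocity between ticks only changes the increment, so nothing in the loop ever waits.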

StepDance: Build Your Own (Realtime) Controller

StepDance is a modular real-time motion control system with components for inputs, interfaces, generators, kinematics, recording, outputs, and filters.

Demonstrative Examples

- Realtime control: Step-a-sketch (using StepDance driver module mapping encoder input to stepper motor) and clay 3D printer with both Cartesian and polar coordinates.

- Hybrid motion: Manual + generative mixing (encoders and circular motion) — circle generator demo with pedal control, SVG and live motion integration.

- Modular systems: Pantograph for X (basic module: encoders know direction, tablet but physical), sketch-based 3D stencil printer, pantograph for pen plotter.

Why Modules?

- Modules function as both standalone components and inputs to more complex machines.

- Practically, basic modules (encapsulating input processing logic) plug into machine controller modules (encapsulating machine control logic).

- This modularity enables rapid prototyping and system reconfiguration.
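As a rough illustration of a basic module feeding a machine-controller module, the Python sketch below wires an encoder-style input into a single-axis controller. It does not use the StepDance API; the class names `EncoderModule` and `AxisController` and the `steps_per_count` parameter are hypothetical.

```python
class EncoderModule:
    """Basic module: turns raw encoder counts into signed deltas."""

    def __init__(self):
        self._last = None

    def update(self, count):
        # The first reading only establishes a reference, so it yields no motion.
        delta = 0 if self._last is None else count - self._last
        self._last = count
        return delta


class AxisController:
    """Machine-controller module: accumulates deltas into a target step position."""

    def __init__(self, steps_per_count=1):
        self.steps_per_count = steps_per_count
        self.position = 0

    def feed(self, delta):
        self.position += delta * self.steps_per_count
        return self.position


# Wiring: the encoder works on its own, and its output can drive any controller.
encoder = EncoderModule()
axis = AxisController(steps_per_count=4)
for reading in (100, 102, 101):
    axis.feed(encoder.update(reading))
```

Because the encoder module knows nothing about the axis, the same input could drive a different controller without changes.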

See recitation slides for additional references and detailed examples.

Hardware Kits & Modular Components

- Hardware kits: Available at the bottom of the main repository page; boards are section-dependent.

- Modular Things stepper: Stepper H-Bridge XIAO — programmable with byte-passing protocol.

Wednesday presentation: Bring your machine and prepare a 15-minute presentation per machine. Win the presentation!

Assignments

- Group Assignment 1: Design a machine that includes mechanism + actuation + automation + function + user interface. Build the mechanical parts and operate it manually.

- Group Assignment 2: Actuate and automate your machine. Prepare a demonstration of your machines for the next class.

- Individual: On your final project site, post a system diagram, list tasks to be completed, make a schedule, and schedule a meeting with instructors for graded review.

Tools & Resources

- Machine Building Kits: Hardware kits available from the machineweek-2025 repository, section-dependent boards.

- Control Systems: StepDance, modular control systems, byte-passing protocols for device communication.

- Mechanical Design: Fasteners, framing, drive systems, guide systems, bearings, mechanisms.

- Injection Molding: Mold blanks, runner systems, gate design, machine operation.

Group Assignment

Design and build a machine that includes mechanism, actuation, automation, function, and user interface. Document the group project and your individual contribution.

Group Assignment 1: Design & Manual Operation

Design a machine that includes mechanism + actuation + automation + function + user interface. Build the mechanical parts and operate it manually. Document the group project and your individual contribution.

[Placeholder: Group assignment documentation will be added here]

Group Assignment 2: Actuation & Automation

Actuate and automate your machine. Document the group project and your individual contribution. Prepare a demonstration of your machines for the next class.

[Placeholder: Group assignment documentation will be added here]

Individual Contribution to Group Assignments

Document your individual contribution to group assignment 1 and group assignment 2.

Individual Contribution to Group Assignment 1: Design & Manual Operation

Initial Concept & Idea Pitch

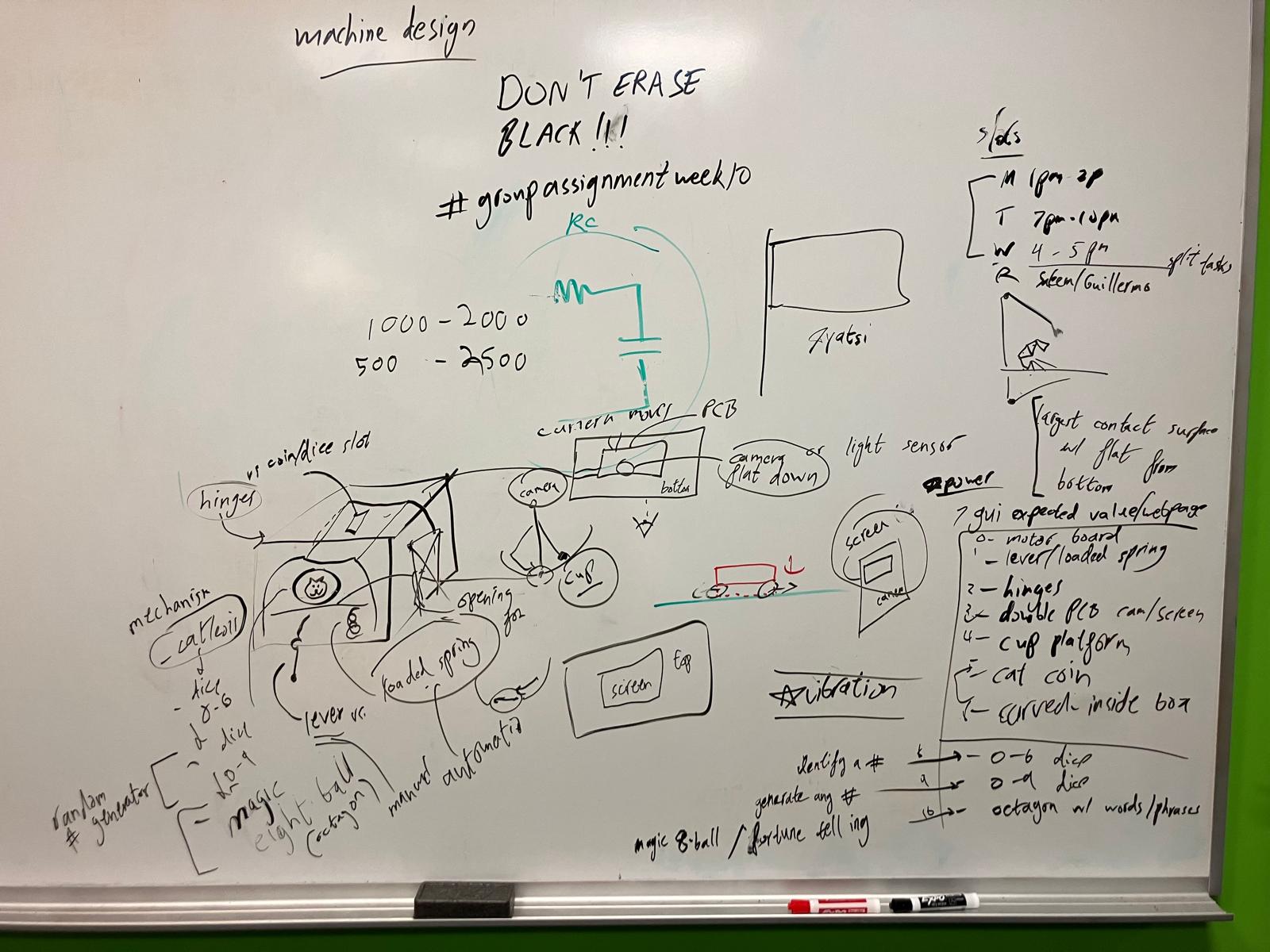

I pitched and developed the initial concept for the group project, which helped initiate collaborative design discussions and whiteboard sessions. The concept evolved from a coin flipper machine to the final BrainrotBot design—a mobile robot that navigates and interacts with smartphones.

Machine Design: Coin Flipper Concept

The initial design concept focused on a coin flipper machine with the following components:

Mechanism

Lever attached to a loaded spring under a platform flips a coin inserted into a curved box.

Actuation

Lever pushes the loaded spring platform beyond a stopper to actuate the coin flip.

Automation

Button activates a motor to push the lever, automating the coin flip actuation.

Applications

Schrödinger's cat coin (minimal), heads or tails, 6-sided dice, 10-sided dice random number generator, magic 8-ball.

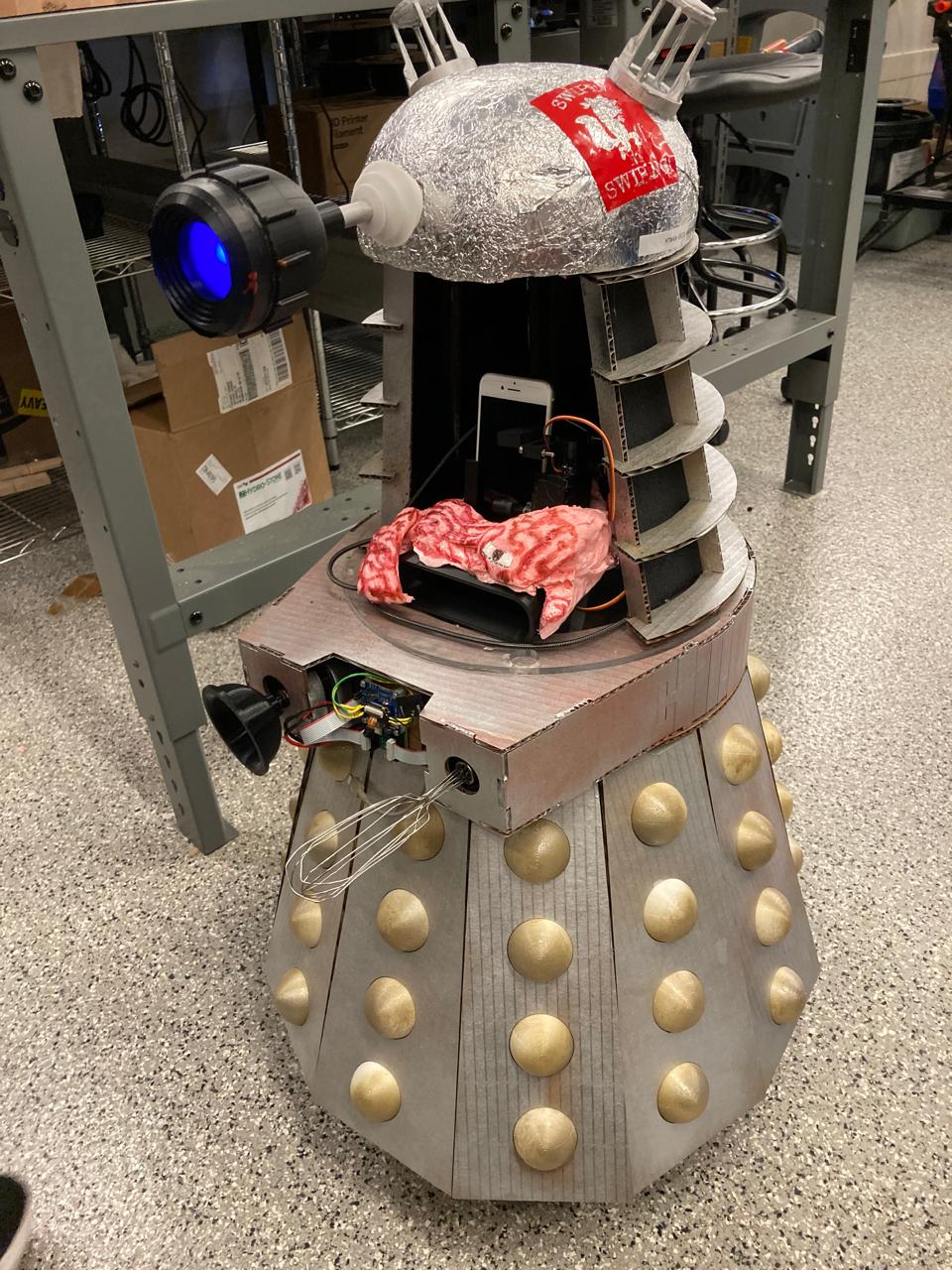

Subsystem Architecture & Interface Design

After the group settled on the BrainrotBot concept, I contributed to splitting the system into modular subsystems with defined interfaces. This modular approach enabled parallel development and clear integration points.

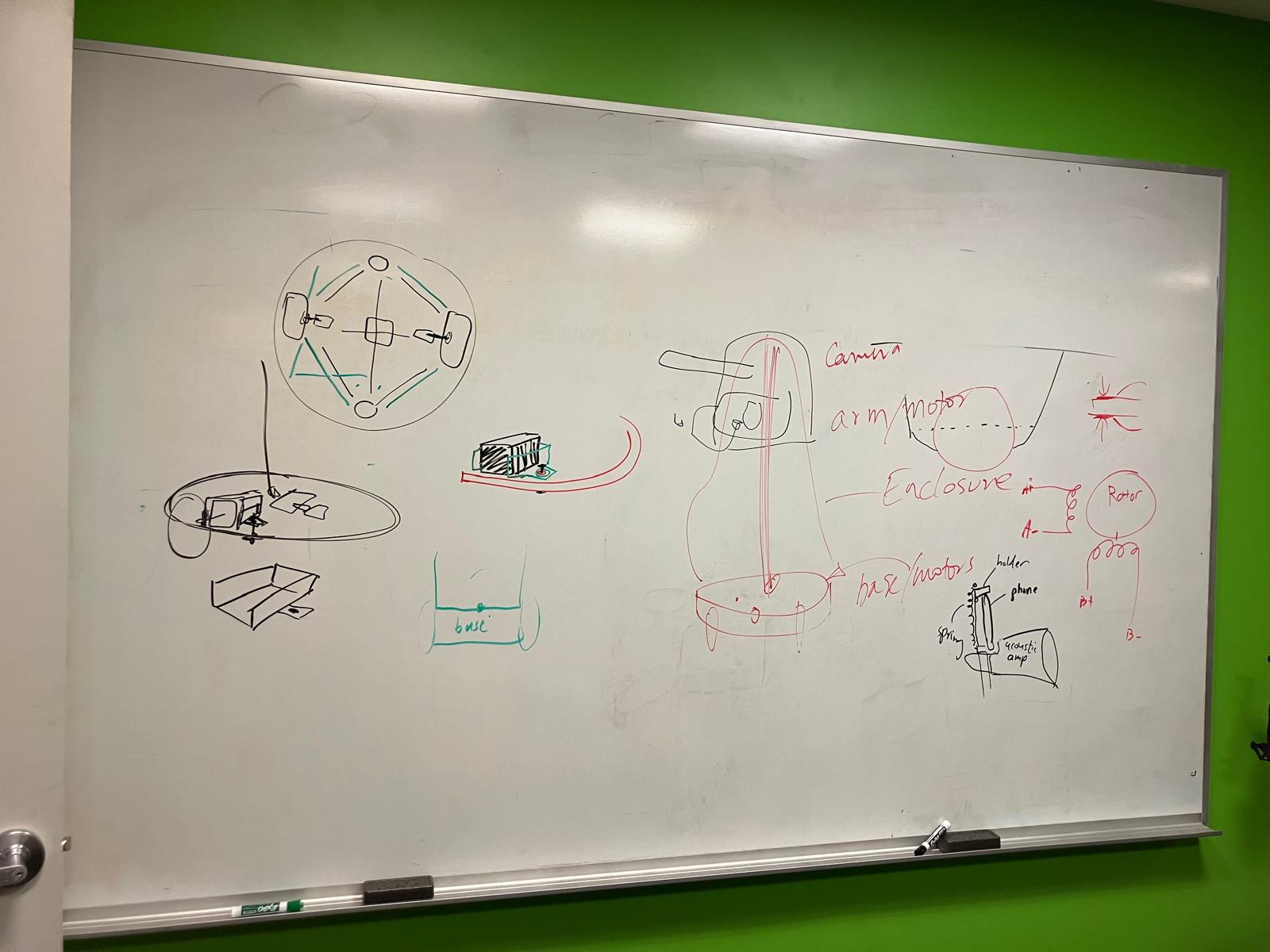

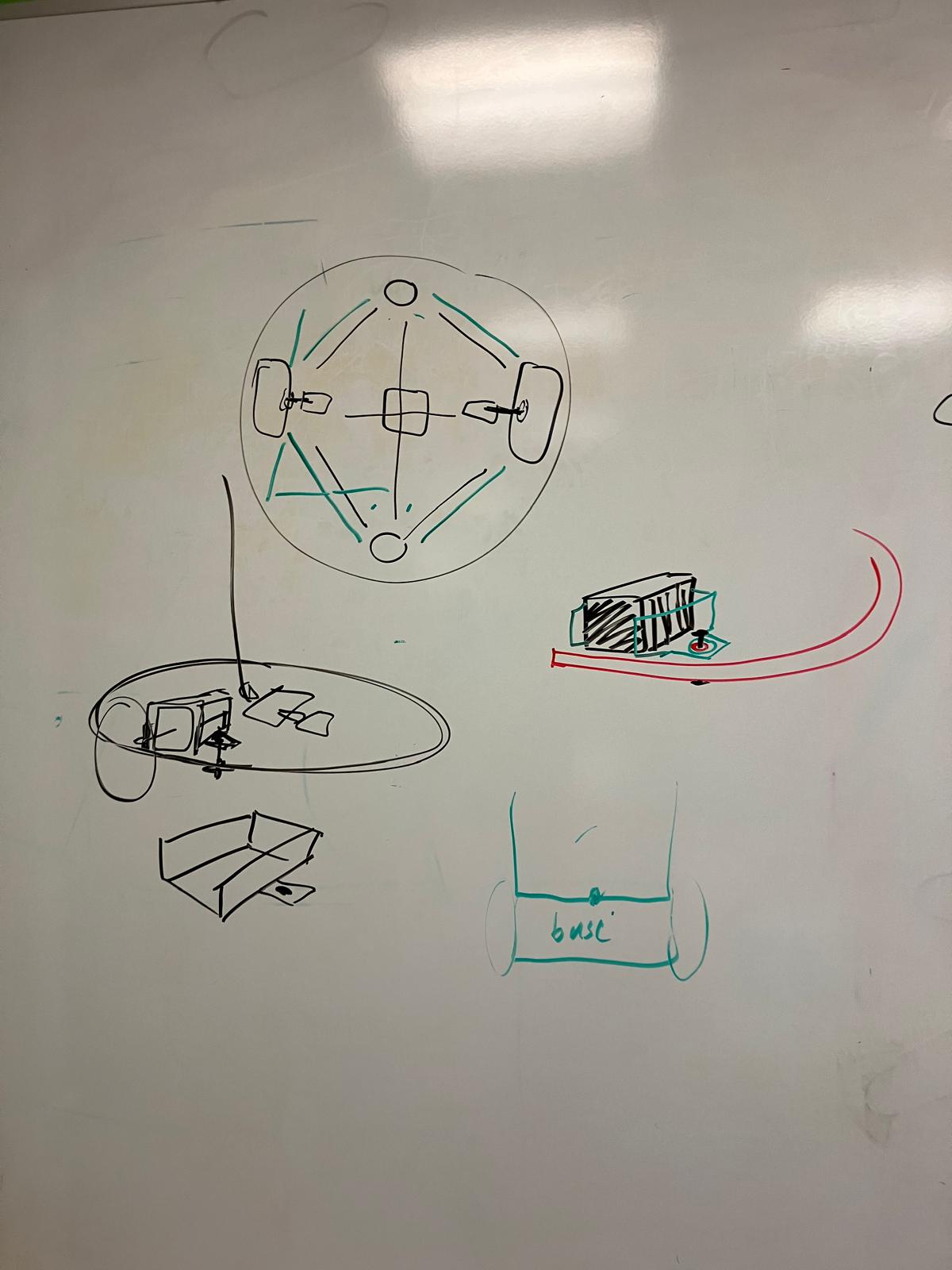

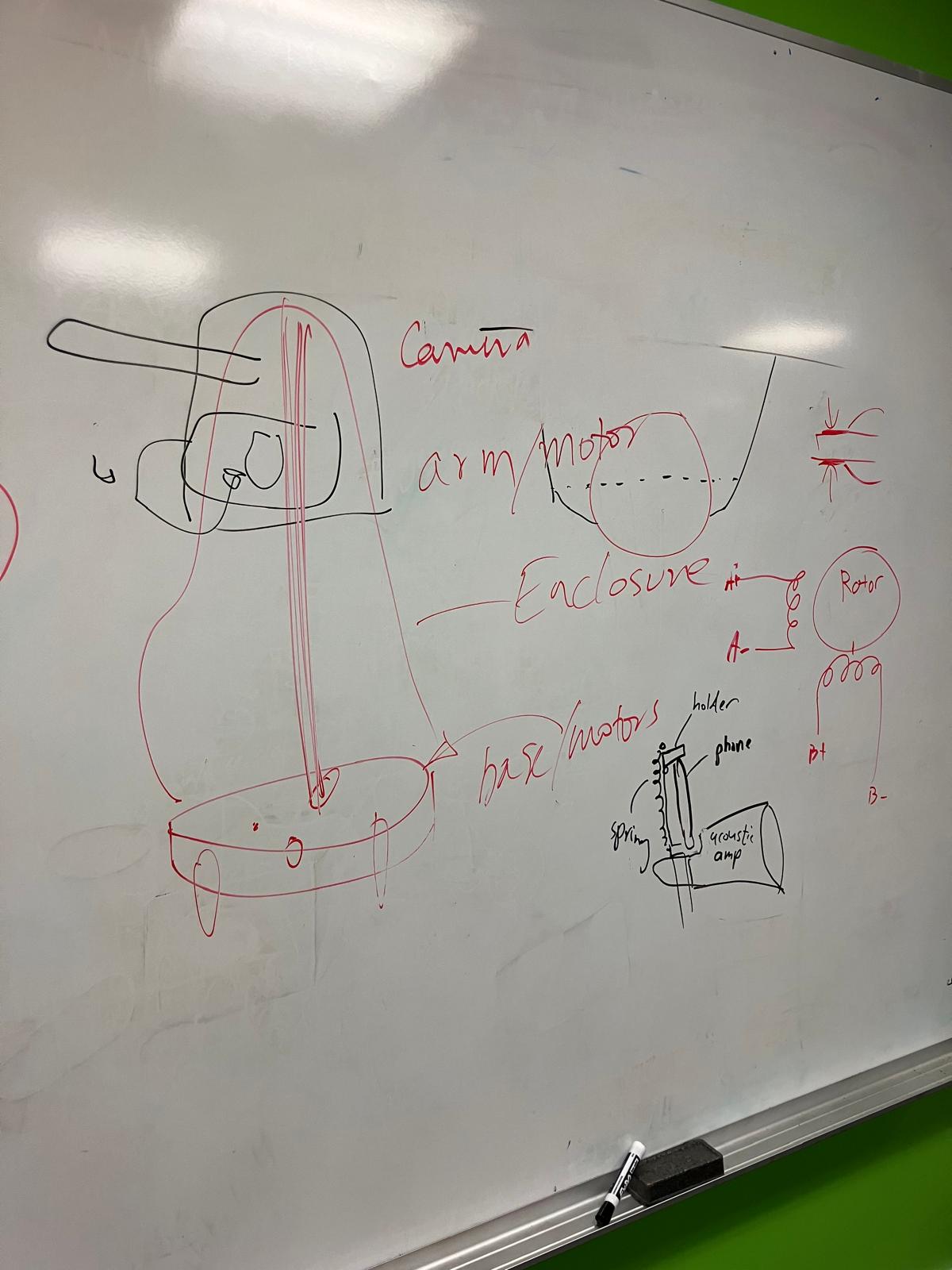

- Subsystem A: Scroller arm design + phone holder — platform for phone mounting with scrolling arm and 3D-printed sound funnel

- Subsystem B: Sensors + Camera (drive control) — camera/sensor system outputting desired position changes

- Subsystem C: Movement/Roomba (drive actuation) — drive train CAD with wheels and motor control

- Subsystem D: Door/outer body — Dalek facade with opening door mechanism

- Subsystem E: Internal column + Roomba base — structural platform supporting all components

- Subsystem F: Audio (optional) — audio PCB and beep library or 3D-printable impedance matching amplifier horn

View subsystem breakdown document → | View subsystem references →

Design Iterations & Architecture Decisions

I contributed to key architectural decisions that separated the base chassis from the body, enabling an upgradeable design that could transition from two-wheel drive to omnidirectional drive.

Component Design Contributions

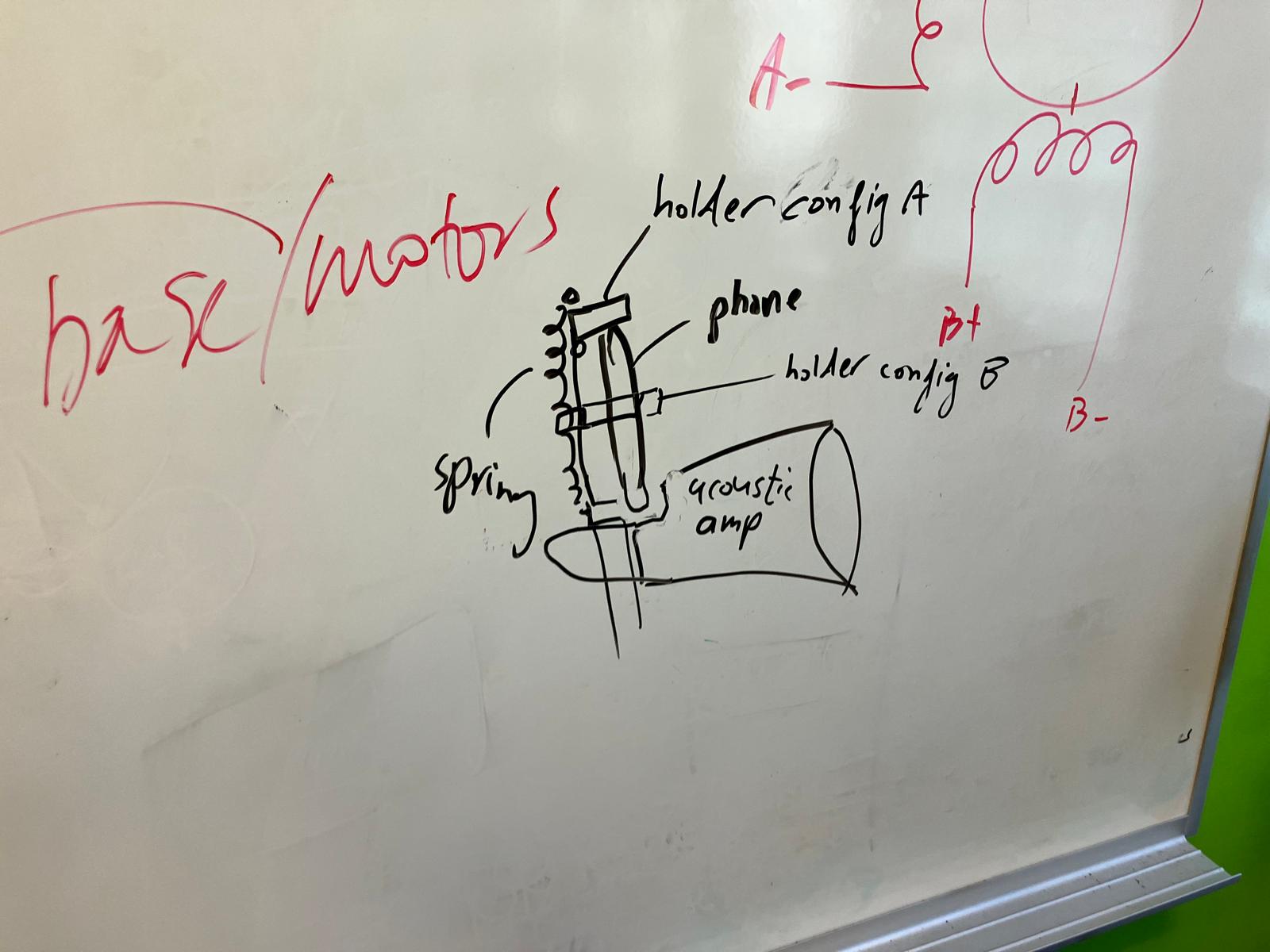

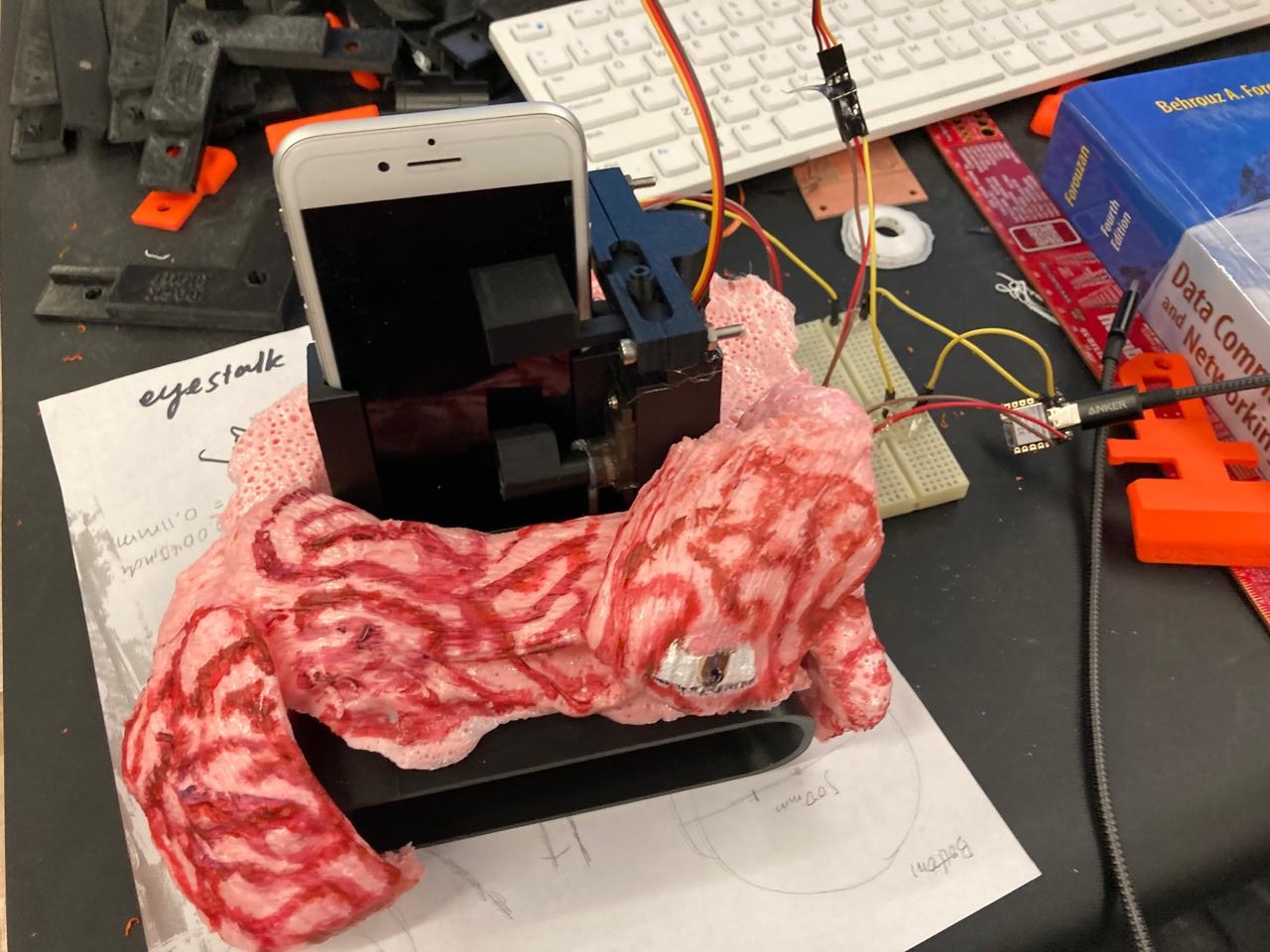

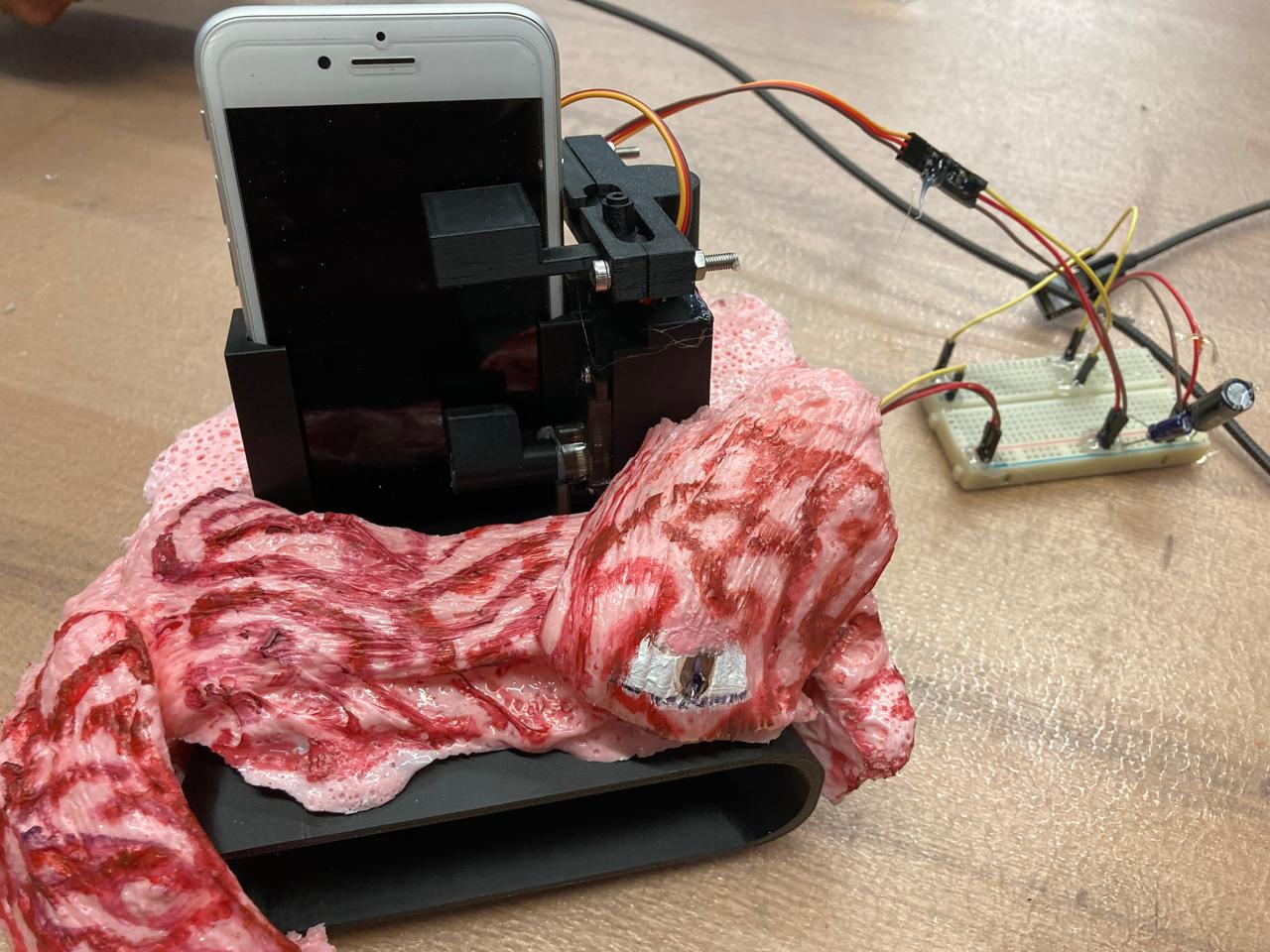

Phone Holder & Amplifier

Designed a phone holder with integrated passive amplifier for audio output. The design incorporates a spring-loaded mechanism for secure phone mounting and a horn-shaped amplifier for enhanced sound projection.

Stylus Design & Development

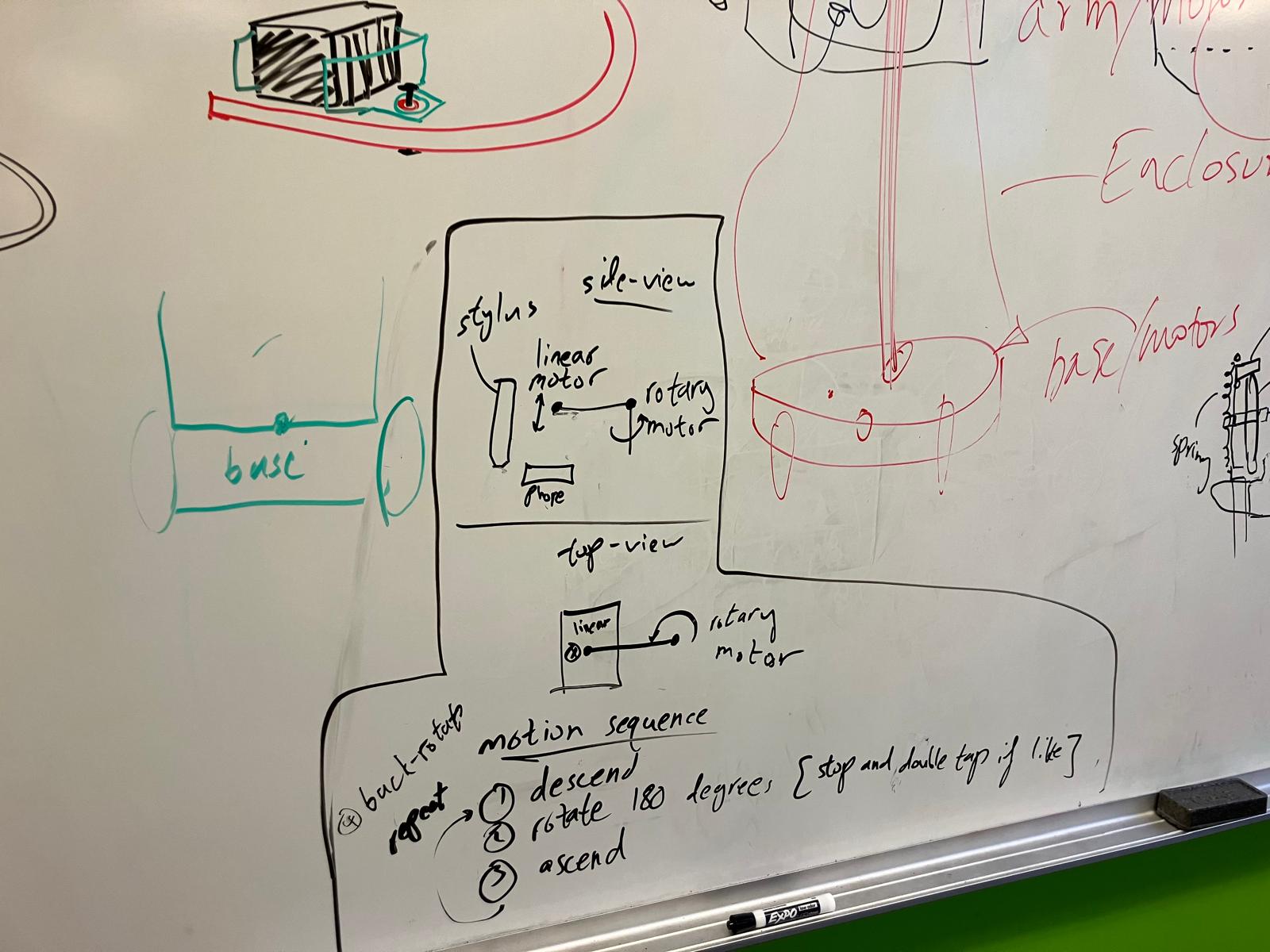

Developed multiple iterations of the stylus mechanism for touch screen interaction, progressing from simple manual designs to a linear actuator-driven system for precise control.

Tapping & Swiping Motor System

Designed a motor-driven system for tapping and swiping gestures using a linear actuator mechanism with servo control for precise horizontal movement.

Camera System & Edge AI Integration

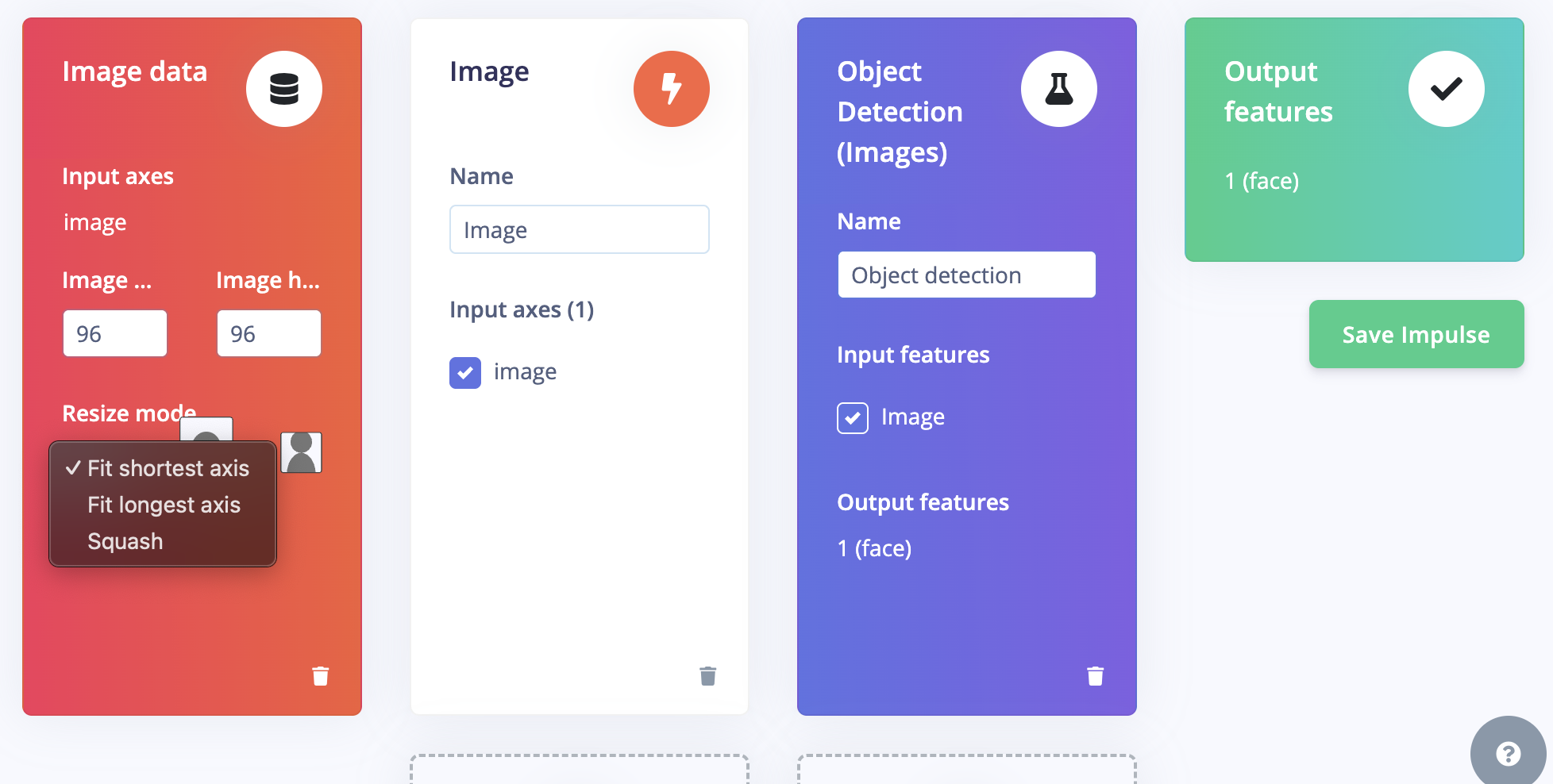

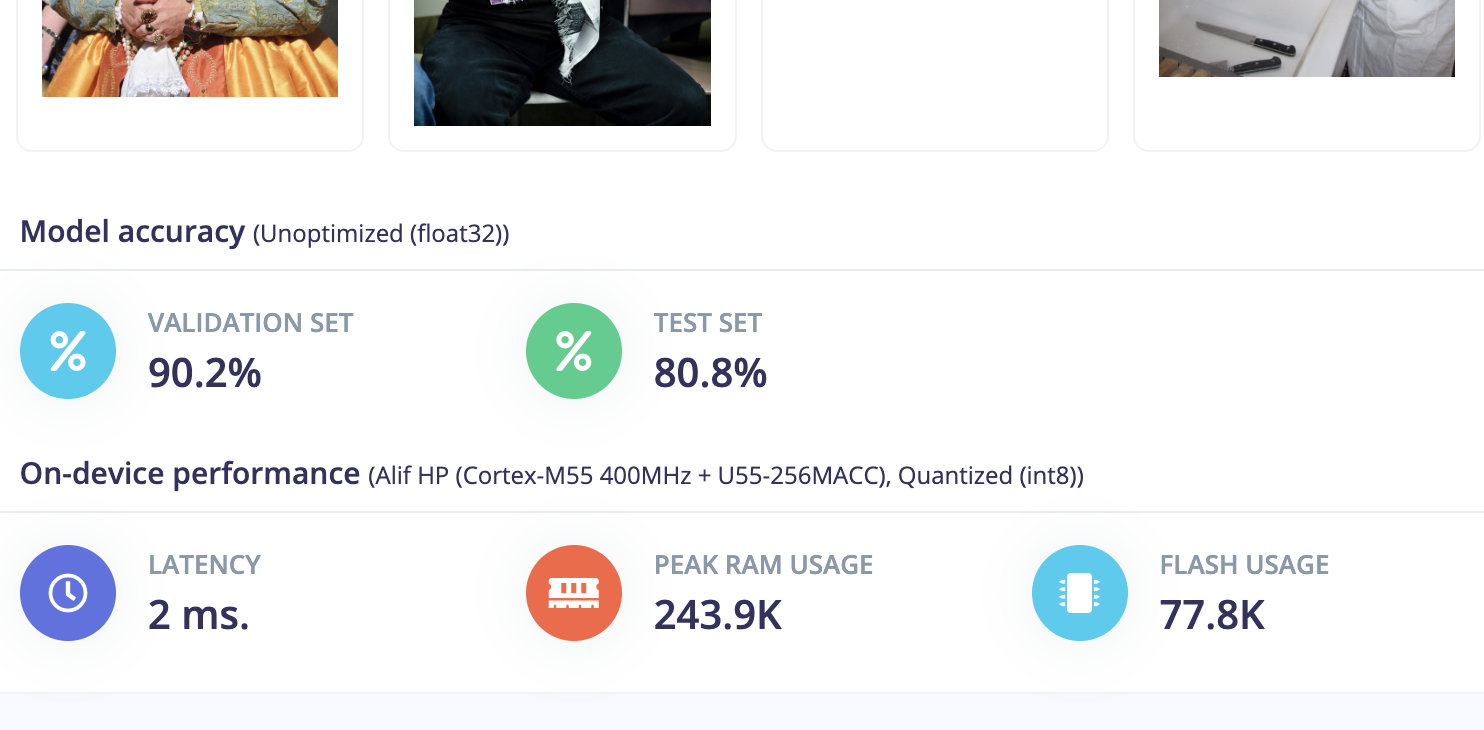

Developed the camera subsystem with Wi-Fi livestreaming and edge AI inference for real-time face detection.

Camera Stream Code

The camera livestream implementation uses the ESP32-S3's camera interface and HTTP server to stream JPEG frames over Wi-Fi as MJPEG (Motion JPEG). The firmware initializes the camera with settings that balance frame rate and quality, connects to Wi-Fi, and serves a continuous stream of JPEG images as an HTTP multipart response.

For detailed pseudocode and implementation, see the Camera Code section in Design Files.

Edge AI Face Detection

The Edge AI system uses a FOMO (Faster Objects, More Objects) model from Edge Impulse for real-time face detection. The model was trained on person/face classification data from the Model Zoo, converted to TensorFlow Lite format, and compiled as an Arduino library for deployment on the ESP32-S3.

The system processes camera frames through the on-device inference pipeline, outputs bounding box coordinates for detected faces, converts these coordinates to distance measurements, and sends byte packets to motor microcontroller boards for control. This enables real-time person tracking and machine interaction based on face detection.
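The step from bounding box to motor packet can be sketched as follows. This is a host-side Python illustration under stated assumptions, not the deployed firmware. The frame width, focal length, and face width constants are hypothetical placeholders that would need calibration, and the two-byte packet layout (horizontal offset, then distance, each limited to seven bits) is an assumption chosen to fit a seven-data-bit byte format.

```python
from dataclasses import dataclass

FRAME_WIDTH = 96      # hypothetical inference frame width in pixels
FOCAL_PX = 80.0       # hypothetical focal length in pixels (needs calibration)
FACE_WIDTH_CM = 15.0  # hypothetical average face width


@dataclass
class Box:
    x: int       # left edge in pixels
    y: int       # top edge in pixels
    width: int
    height: int


def estimate_distance_cm(box):
    """Pinhole-camera estimate: distance = focal length * real width / pixel width."""
    if box.width <= 0:
        raise ValueError("box width must be positive")
    return FOCAL_PX * FACE_WIDTH_CM / box.width


def horizontal_offset(box):
    """Signed offset of the box centre from the frame centre, scaled to [-1, 1]."""
    centre_x = box.x + box.width / 2
    return (centre_x - FRAME_WIDTH / 2) / (FRAME_WIDTH / 2)


def encode_packet(box):
    """Pack offset and distance into two values that each fit in seven bits."""
    offset_7bit = max(0, min(127, int((horizontal_offset(box) + 1) * 63.5)))
    distance_7bit = max(0, min(127, int(estimate_distance_cm(box))))
    return bytes([offset_7bit, distance_7bit])
```

A centred 24-pixel-wide box yields an offset of 0 and, with these placeholder constants, an estimated distance of 50 cm.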

Edge Impulse Model: View model in Edge Impulse Studio →

Development References: ChatGPT Transcript 1, ChatGPT Transcript 2, ChatGPT Transcript 3, ChatGPT Transcript 4

User Interface Design

Designed the v1 GUI for manual control and monitoring of the machine's subsystems.

Design Files

All design files organized by subsystem component:

Phone Holder & Amplifier

Design files for the phone holder with integrated passive amplifier.

- phone-holder-print.3mf: Main phone holder 3MF file

- phone-stand-amplifier-print.3mf: Amplifier horn 3MF file

References: Spring Loaded Phone Holder (Thingiverse), Phone Amplifier Passive Speaker (Thingiverse)

Stylus

Design files for the stylus mechanism.

printable_stylus_with_built_in_stand.stl— Stylus with integrated stand

References: Printable Stylus (Thingiverse)

Tapping & Swiping Motors

Design files for the linear actuator and servo-driven tapping/swiping mechanism.

- linear_motor.3mf: Linear motor assembly

- linear_motor_stylus.3mf: Linear motor with stylus mount

- Case_R.3mf, Linear_Case_L.3mf: Motor case components

- Gear.3mf, Linear_Rack_RL.3mf: Gear and rack components

References: Linear MG90S Micro Servo (Thingiverse), Linear Actuator Design (Thingiverse)

Servo Motor Controls

Arduino code for controlling two MG90S servo motors for tapping and swiping mechanisms.

Download Files:

- two_servo_spins.zip: Complete project for dual servo sweep test

- two_servo_spins.ino: Dual servo opposite-direction sweep control

- back_forth_test.zip: Complete project for 4-step motion test

- back_forth_test.ino: 4-step synchronized motion pattern (0° → 90° → 180° → 90° → 0°)

Vinyl Cutter Designs

Vinyl sticker designs generated using VDraw.ai black-and-white image converter for preparing artwork suitable for vinyl cutting.

- VDraw_1763512341238.png: "Swiper No Swiping" sticker design converted from original artwork

- VDraw_1763514225691.png: "Brainrot9000" logo sticker design generated from Gemini-created artwork

The VDraw.ai converter optimizes images for vinyl cutting by creating clean black-and-white designs with clear edges and minimal detail loss, ensuring successful cutting and weeding operations.

Phone Swiper & Tapper Design

Complete design for the phone holder with integrated swiper and tapper mechanisms, including servo mounts, linear actuators, and motion guides.

phone holder and movement v8.f3z— Fusion 360 design file (v8) for phone holder with integrated swiper and tapper mechanisms

The design includes all mechanical components for the phone holder, servo-driven linear actuators for tapping and swiping, mounting brackets, and protective enclosures for reliable operation.

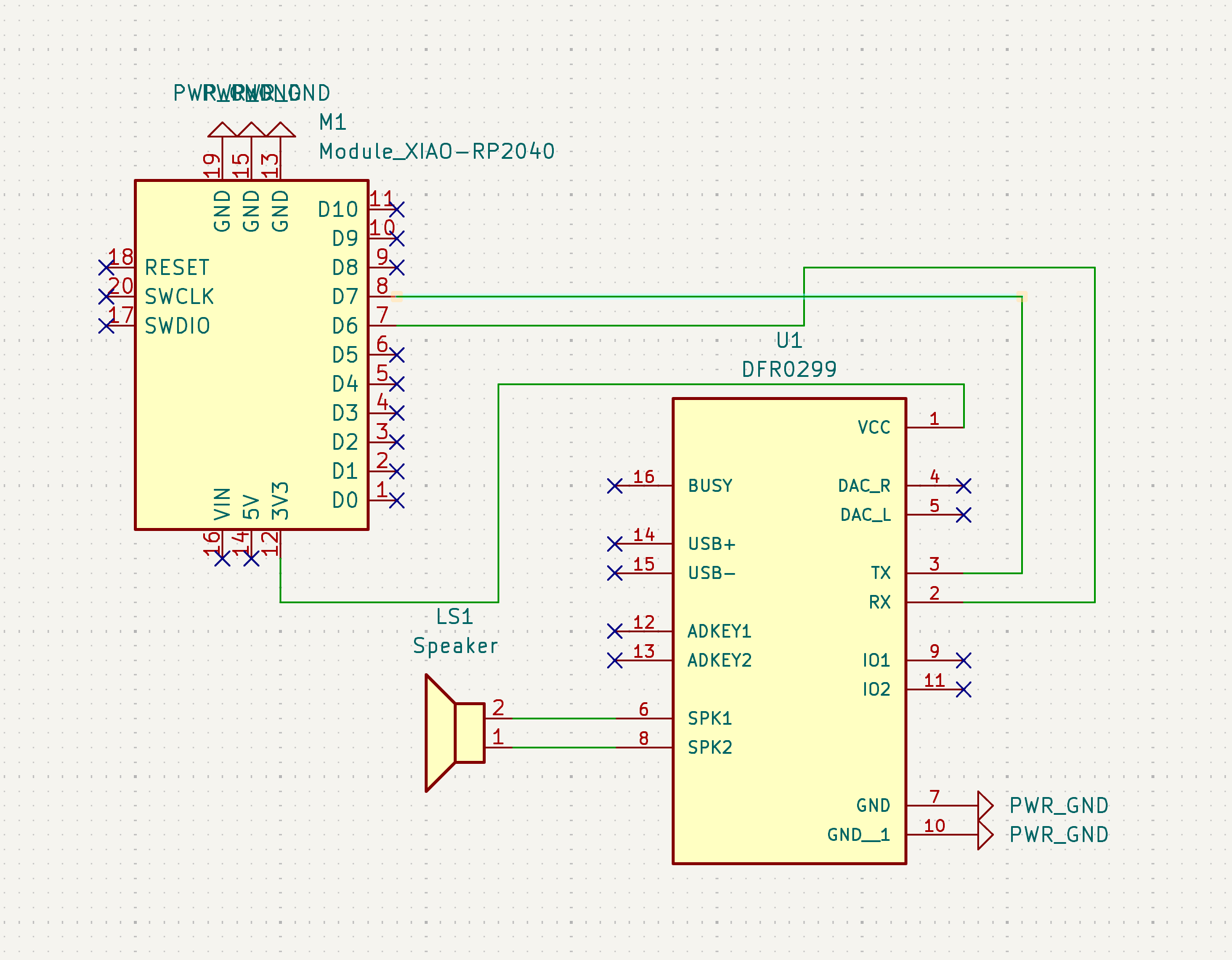

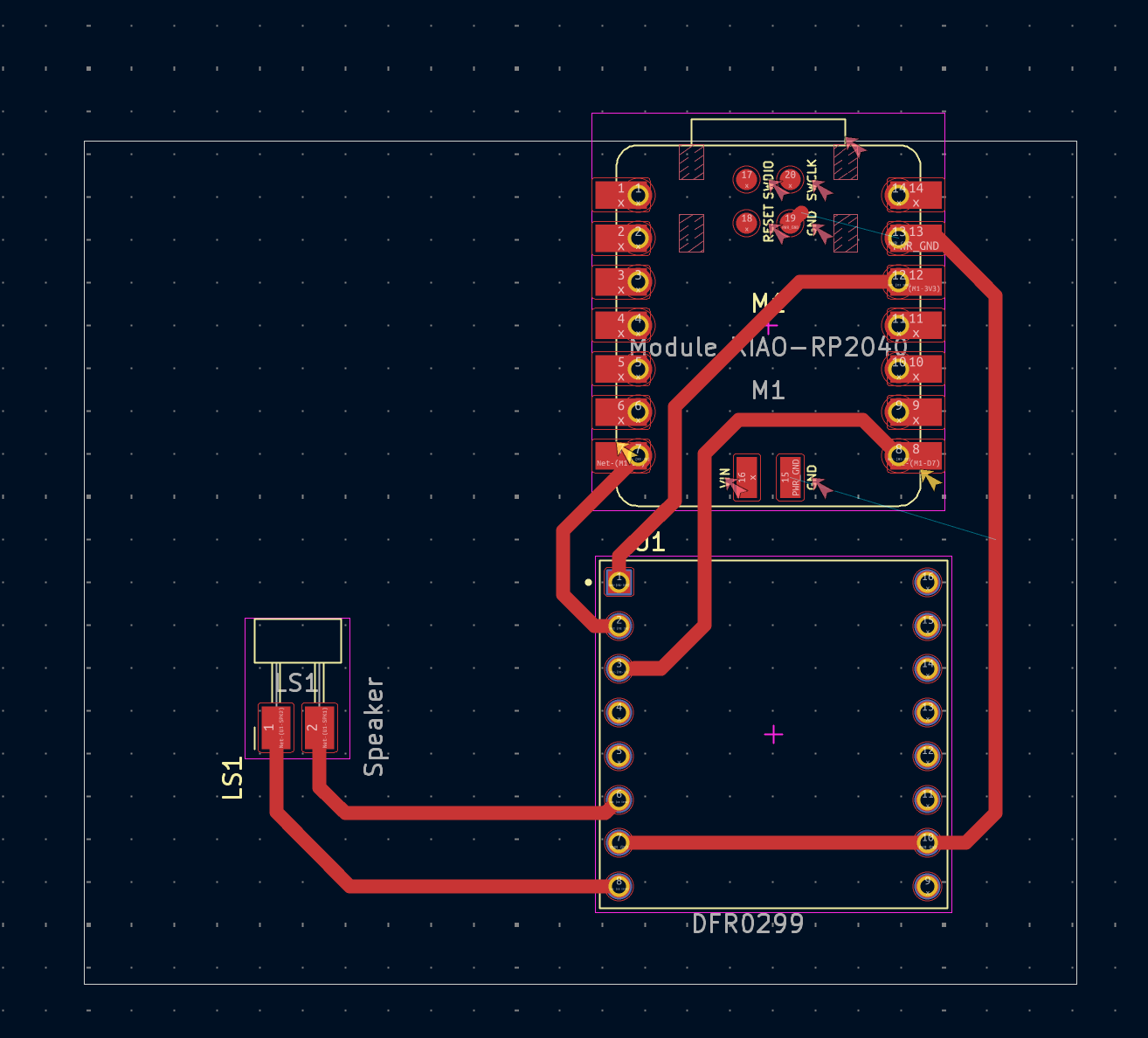

Speaker PCB

PCB design files for the speaker/amplifier subsystem circuit board, including Gerber files for fabrication and design documentation.

- DFPlayer-F_Cu.gbr: Front copper layer Gerber file for PCB fabrication

- DFPlayer-Edge_Cuts.gbr: Edge cuts Gerber file defining board outline

- pcb_design.png: PCB layout visualization showing component placement and trace routing

- pcb_schematic.png: Circuit schematic diagram showing electrical connections and component relationships

The PCB was milled using the Othermill machine following the standard operating procedures documented in Week 5 training documentation.

Camera System Code

Arduino code for ESP32-S3 camera livestreaming and Edge AI face detection.

Camera Livestream Pseudocode

SETUP:

1. Initialize Serial communication (115200 baud)

2. Configure camera pins (from camera_pins.h):

- Data pins (Y2-Y9) for parallel data bus

- Control pins (XCLK, PCLK, VSYNC, HREF)

- I2C pins (SIOD, SIOC) for camera configuration

3. Create camera_config_t structure:

- Set LEDC channel and timer for clock generation

- Map all GPIO pins to camera interface

- Set XCLK frequency to 20MHz

- Set pixel format to JPEG

- Configure frame size (QVGA if PSRAM available, QQVGA otherwise)

- Set JPEG quality to 12 (if PSRAM available)

- Set frame buffer count (2 if PSRAM, 1 otherwise)

4. Initialize camera with esp_camera_init()

5. Connect to Wi-Fi network:

- Begin connection with SSID and password

- Wait until connection established

- Print local IP address

6. Start HTTP server:

- Create HTTP server configuration

- Register URI handler for root path "/"

- Set handler function to stream_handler

- Start server and print access URL

STREAM_HANDLER (HTTP request handler):

1. Set HTTP response type to "multipart/x-mixed-replace; boundary=frame"

2. Enter infinite loop:

a. Capture frame from camera (esp_camera_fb_get())

b. If capture fails, return error

c. Format HTTP multipart header:

- Boundary marker: "--frame"

- Content-Type: "image/jpeg"

- Content-Length: frame buffer length

d. Send header chunk via HTTP response

e. Send frame buffer data chunk

f. Return frame buffer to camera (esp_camera_fb_return())

g. Send boundary terminator "\r\n"

h. If any send operation fails, break loop

3. Return result status

LOOP:

- Minimal delay (10ms) to allow other tasks

Download Files:

- camera_stream.zip: Complete camera stream project (includes .ino and .h files)

- camera_stream.ino: Main Arduino sketch for camera livestreaming

- camera_pins.h: GPIO pin definitions for XIAO ESP32-S3 camera module
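To make the multipart framing in the STREAM_HANDLER pseudocode concrete, the following Python sketch builds the bytes for one frame on a host computer. It is an illustration of the wire format only, not the contents of camera_stream.ino; the function names `response_content_type` and `mjpeg_part` are hypothetical.

```python
BOUNDARY = "frame"  # boundary string used in the pseudocode


def response_content_type(boundary=BOUNDARY):
    """Content-Type header value for the whole streaming response."""
    return f"multipart/x-mixed-replace; boundary={boundary}"


def mjpeg_part(jpeg, boundary=BOUNDARY):
    """Frame one JPEG image as a multipart part.

    Layout: boundary marker, part headers, blank line, image bytes, CRLF.
    """
    header = (
        f"--{boundary}\r\n"
        "Content-Type: image/jpeg\r\n"
        f"Content-Length: {len(jpeg)}\r\n"
        "\r\n"
    ).encode("ascii")
    return header + jpeg + b"\r\n"


# Stand-in for a camera frame: JPEG start and end markers around dummy bytes.
fake_frame = b"\xff\xd8" + b"\x00" * 10 + b"\xff\xd9"
part = mjpeg_part(fake_frame)
```

A browser that receives the `multipart/x-mixed-replace` content type replaces the displayed image each time a new part arrives, which is what makes a sequence of JPEGs appear as video.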

Edge AI Face Detection Library

Edge Impulse Arduino library for FOMO-based face detection on ESP32-S3.

ei-face-detection--fomo-arduino-1.0.90.zip— Edge Impulse Arduino library (v1.0.90)

Edge Impulse Model: View model in Edge Impulse Studio →

Group Collaboration: All design work was documented in the Slack thread after each working session, ensuring real-time communication and progress tracking throughout the project.

Individual Contribution to Group Assignment 2: Actuation & Automation

Co-Development: Servo Motor Controls & Electrical Connections

Co-developed servo motor control firmware and electrical connections for the tapper and swiper mechanisms with Hayley Bloch. The system uses two MG90S micro servos connected to GPIO pins on the ESP32-S3 for synchronized tapping and swiping motions. Development transcript →

Electrical Connections

| Component | Connection | ESP32-S3 Pin |

|---|---|---|

| Servo 1 (Tapper) Signal | PWM Control | GPIO1 |

| Servo 2 (Swiper) Signal | PWM Control | GPIO2 |

| Servo 1 & 2 Power | VCC (5V) | 5V Output |

| Servo 1 & 2 Ground | GND | GND |

Servo Control Pseudocode

two_servo_spins.ino

SETUP:

1. Initialize Serial communication (115200 baud)

2. Allocate PWM timers for ESP32-S3 (timer 0 and timer 1)

3. Attach servo1 to GPIO1 with pulse range 500-2400μs (MG90S range)

4. Attach servo2 to GPIO2 with pulse range 500-2400μs

LOOP:

1. Sweep forward (0° to 180°):

- servo1: 0° → 180° (incrementing)

- servo2: 180° → 0° (decrementing, opposite direction)

- 10ms delay between steps

2. Sweep backward (180° to 0°):

- servo1: 180° → 0° (decrementing)

- servo2: 0° → 180° (incrementing, opposite direction)

- 10ms delay between steps

3. Repeat continuously

back_forth_test.ino

SETUP:

1. Initialize Serial communication (115200 baud)

2. Allocate PWM timers (timer 0 and timer 1)

3. Attach both servos to GPIO1 and GPIO2 with 500-2400μs range

MOVE_BOTH function:

- Set both servos to same angle simultaneously

- Wait 120ms for MG90S to reach position (tunable delay)

LOOP (4-step pattern):

1. Move both servos to 90° (center position)

2. Move both servos to 180° (full extension)

3. Move both servos to 90° (return to center)

4. Move both servos to 0° (full retraction)

5. Repeat pattern

For complete code files, see Servo Motor Controls in Design Files.
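The two motion patterns in the pseudocode can be checked on a host computer before flashing. The Python sketch below generates the angle sequences and converts an angle to a pulse width, assuming a linear mapping of 0° to 180° onto the 500 to 2400 μs range and assuming both sweep endpoints are included. It is not the Arduino sketch itself, and the function names are hypothetical.

```python
MIN_US, MAX_US = 500, 2400  # pulse range from the pseudocode (MG90S)
FOUR_STEP = (90, 180, 90, 0)  # target angles of the 4-step loop, in order


def angle_to_pulse_us(angle):
    """Linearly map 0-180 degrees onto the 500-2400 microsecond pulse range."""
    if not 0 <= angle <= 180:
        raise ValueError("angle must be between 0 and 180 degrees")
    return MIN_US + (MAX_US - MIN_US) * angle / 180


def opposite_sweep():
    """Yield (servo1, servo2) angle pairs for one forward and one backward sweep."""
    for a in range(0, 181):       # forward: servo1 rises while servo2 falls
        yield a, 180 - a
    for a in range(180, -1, -1):  # backward: servo1 falls while servo2 rises
        yield a, 180 - a


def four_step_cycle():
    """Angle pairs for one cycle of the 4-step test; both servos share each angle."""
    return [(a, a) for a in FOUR_STEP]
```

With a 10 ms delay per one-degree step, each sweep direction takes roughly 1.8 seconds, and the two servo angles always sum to 180°.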

Co-Design & Printing: Tapper and Swiper Enclosures

Collaborated with Hayley Bloch on the mechanical design and 3D printing of tapper and swiper enclosures and actuators. The designs integrate servo mounting points, linear motion guides, and protective casings for reliable operation.

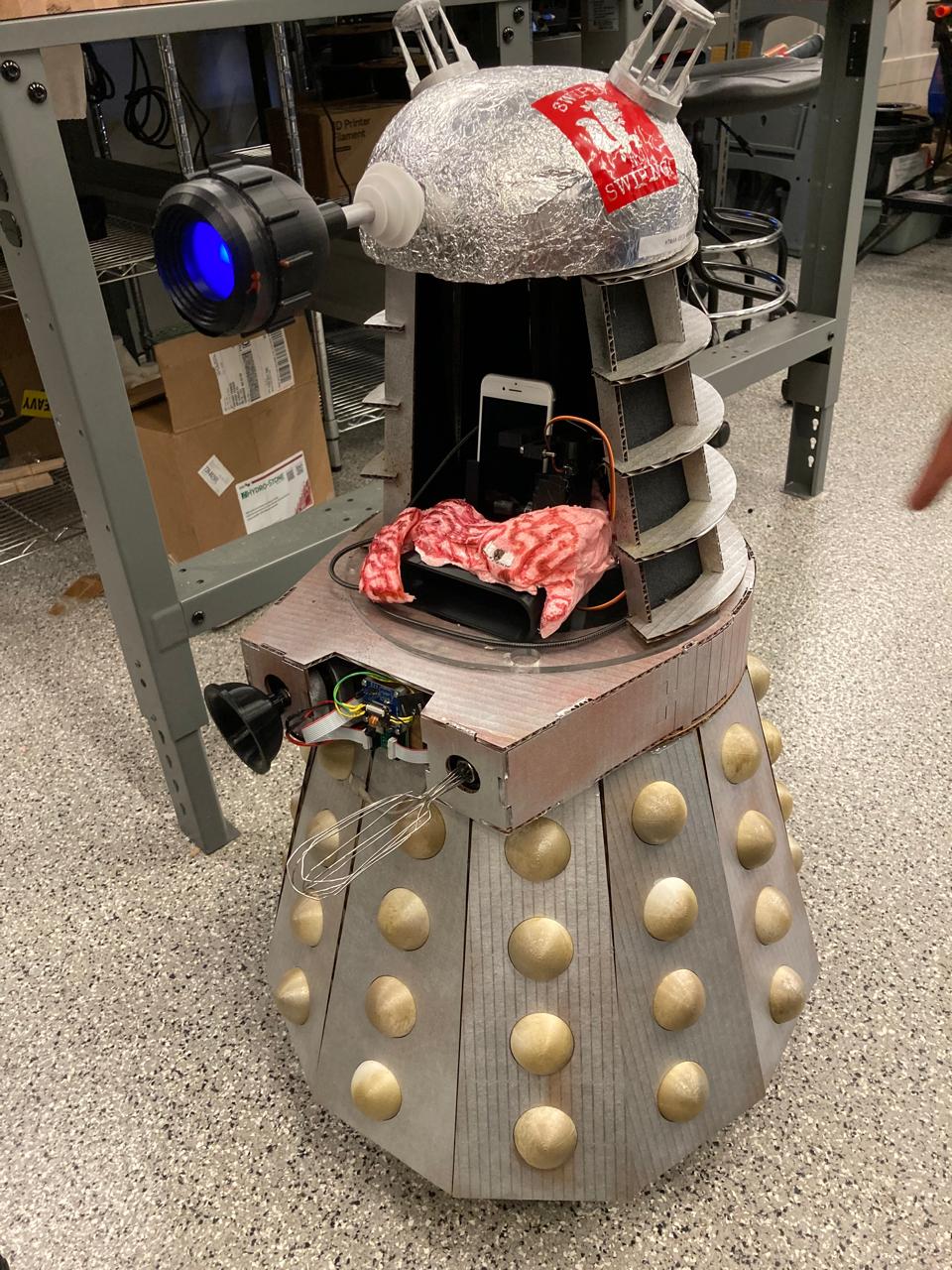

Vinyl Sticker Design & Application

Designed, cut, transferred, and applied custom vinyl stickers to the assembled Brainrot9000 machine. The vinyl graphics enhance the machine's visual identity and provide clear labeling for different subsystems.

Design Process

The vinyl designs were created using VDraw.ai black-and-white image converter to prepare artwork for vinyl cutting. Two main designs were developed:

- "Swiper No Swiping" sticker: Converted from original artwork using VDraw.ai to create a clean, cuttable design suitable for vinyl cutting.

- "Brainrot9000" logo sticker: Generated from a Gemini-created design, processed through VDraw.ai to optimize for vinyl cutting with clear edges and minimal detail loss.

Application Steps

- Vinyl Cutting: Loaded the converted designs into the vinyl cutter software and cut the designs from colored vinyl sheets, ensuring proper blade depth and cutting speed for clean edges.

- Weeding: Carefully removed excess vinyl material around the designs using tweezers, leaving only the desired graphic elements on the backing paper.

- Transfer Paper Application: Applied transfer tape over the weeded vinyl design, using a squeegee to ensure proper adhesion and remove air bubbles.

- Surface Preparation: Cleaned the target surface on the Brainrot9000 assembly to ensure proper adhesion, removing dust and oils.

- Positioning & Application: Positioned the transfer paper with the vinyl design on the target surface, then used a squeegee to press the vinyl onto the surface, working from center to edges.

- Transfer Paper Removal: Slowly peeled away the transfer paper at a low angle, leaving the vinyl design adhered to the surface. Applied additional pressure to any areas that didn't transfer properly.

Tapping & Swiping Automation Development

Co-designed the tapping and swiping automation system with Hayley Bloch, then assembled and troubleshot the mechanisms to ensure reliable operation. The system integrates servo-driven actuators with precise motion control for synchronized tapping and swiping actions.

Development Process

- Mechanical Design: Collaborated on the design of tapper and swiper enclosures, ensuring proper servo mounting, linear motion guides, and protective casings for reliable operation.

- Electrical Integration: Wired two MG90S servo motors to ESP32-S3 GPIO pins (GPIO1 for tapper, GPIO2 for swiper) with shared 5V power and ground connections.

- Firmware Development: Co-developed servo control code implementing synchronized motion patterns, including opposite-direction sweeps and coordinated 4-step sequences.

- Assembly: Assembled the tapper and swiper mechanisms, mounting servos, installing linear actuators, and securing enclosures to the machine chassis.

- Troubleshooting: Tested motion patterns, identified and resolved timing issues, adjusted servo positions, and fine-tuned PWM signals for optimal performance.

Person Follower Automation Development

Following the tapping and swiping automation, worked on early iterations of the person follower system. Shared references, helped with code logic, provided implementation code from references, discussed technical issues, and collaborated with programmers on the team to develop the face-tracking and person-following functionality.

Development Approach

- Reference Research: Identified and shared relevant references for person detection, face tracking, and camera control algorithms suitable for the ESP32-S3 platform.

- Code Logic Design: Collaborated on the overall architecture, discussing how to integrate Edge AI face detection with motor control for following behavior.

- Implementation Support: Provided code examples from references and developed custom implementations for bounding box processing, distance calculation, and motor control mapping.

- Problem Solving: Worked through issues including camera frame rate optimization, detection accuracy, motor response timing, and coordinate system mapping.

- Team Collaboration: Coordinated with other programmers to integrate the person follower with the overall machine control system and ensure proper communication between subsystems.
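One simple way to turn a detection into following and stop behavior is a proportional steering rule with a deadband. The Python sketch below is an illustration of that idea for a two-wheel drive, not the team's final controller. The thresholds `deadband`, `stop_width`, and `max_speed` are hypothetical tuning values, and `follow_command` is a hypothetical name.

```python
def follow_command(offset, box_width, deadband=0.15, stop_width=40, max_speed=100):
    """Return (left_speed, right_speed) for a two-wheel drive.

    offset:    horizontal offset of the detection in [-1, 1], positive to the right
    box_width: detection width in pixels, or None when nothing is detected
    """
    # Stop when no one is detected or the person is close (large box).
    if box_width is None or box_width >= stop_width:
        return 0, 0
    # Ignore small offsets so the robot does not oscillate around centre.
    turn = 0.0 if abs(offset) < deadband else max(-1.0, min(1.0, offset))
    base = max_speed / 2
    left = round(base * (1 + turn))
    right = round(base * (1 - turn))
    return left, right
```

For example, a person centred and far away gives equal wheel speeds, a person to the right speeds up the left wheel to turn toward them, and a box wider than `stop_width` halts the drive.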

Full Actuation & Automation Integration

Assembled and integrated the complete actuation and automation system with other subsystem teams. This involved coordinating the tapper, swiper, person follower, and camera systems into a unified control architecture.

Integration Steps

- Subsystem Coordination: Worked with teams responsible for camera, display, and control systems to establish communication protocols and timing requirements.

- Electrical Integration: Consolidated wiring for all actuation systems, ensuring proper power distribution and signal routing throughout the machine chassis.

- Software Integration: Integrated servo control code with the main machine control loop, ensuring proper sequencing and coordination between different automation functions.

- Testing & Validation: Performed end-to-end tests of the complete actuation system, verifying that all subsystems work together without conflicts or timing issues.

- Calibration: Fine-tuned motion parameters, timing delays, and control thresholds to optimize the overall system performance.

Head Inner Subsystem Assembly

Assembled the head inner subsystem, which houses the camera, display, and control electronics. Integrated this subsystem with other teams' components to create a cohesive machine head assembly.

Assembly Process

- Component Layout: Organized camera module, display screen, and control boards within the head enclosure, ensuring proper spacing and cable management.

- Mechanical Mounting: Secured all components using appropriate fasteners and mounting brackets, ensuring stability and proper alignment.

- Electrical Connections: Routed and connected all cables for power, data, and control signals, using cable management solutions to prevent interference and tangling.

- Integration Testing: Tested the head subsystem independently to verify all components function correctly before integration with the main chassis.

- Cross-Subsystem Integration: Worked with other teams to connect the head subsystem to the main machine body, ensuring proper mechanical and electrical interfaces.

Full Brainrot9000 Assembly

Assembled and integrated the complete Brainrot9000 machine, bringing together all subsystem components into a fully functional automated system. Coordinated with multiple teams to ensure proper integration of mechanical, electrical, and software components.

Final Assembly Steps

- Chassis Integration: Mounted the head subsystem, tapper/swiper mechanisms, and base components onto the main machine chassis, ensuring proper alignment and structural integrity.

- Electrical Consolidation: Connected all subsystem wiring to the main power distribution and control boards, implementing proper cable management throughout the assembly.

- Software Integration: Integrated all subsystem control code into the main machine control loop, ensuring proper communication and coordination between all automated functions.

- System Calibration: Calibrated all sensors, actuators, and control parameters to ensure optimal performance across all subsystems.

- Final Testing: Performed comprehensive end-to-end system tests, verifying that all automation features work correctly together and that the machine operates as designed.

- Visual Finishing: Applied vinyl stickers and completed final aesthetic touches to enhance the machine's visual presentation.

Speaker PCB Milling

Milled a custom PCB for the speaker/amplifier subsystem using the Othermill machine, creating the circuit board that interfaces the audio output with the phone holder amplifier system. The PCB was designed to integrate with the overall machine electronics and provide reliable audio signal routing. The milling process followed the standard operating procedures documented in Week 5 training documentation.

PCB Design

For complete design files including Gerber files for fabrication, see Speaker PCB in Design Files.

PCB Milling Process

- Design Preparation: Prepared the PCB design files with proper trace routing, component footprints, and drill holes for the speaker circuit. Exported Gerber files (F_Cu for front copper layer, Edge_Cuts for board outline) for the Othermill machine.

- Material Setup: Secured the FR-1 copper-clad board to the milling machine bed using double-sided tape, ensuring proper leveling and flatness for accurate milling. Positioned the board left-justified with 1mm buffer from origin.

- Tool Selection: Selected appropriate end mills (1/64" for trace isolation, 1/32" for drilling) following the Othermill standard operating procedures, considering trace width and spacing requirements.

- Milling Execution: Ran the milling program using Bantam Tools software to isolate traces, create pads, and drill component mounting holes with precise depth control. Monitored the process to ensure proper tool engagement and material removal.

- Quality Inspection: Inspected the milled PCB for trace continuity, proper isolation, and clean edges before component assembly. Checked for stray copper strands and addressed any issues with light sanding or utility knife.

- Component Assembly: Soldered components to the milled PCB, including audio connectors, signal routing components, and interface connections, following proper soldering techniques for reliable electrical connections.

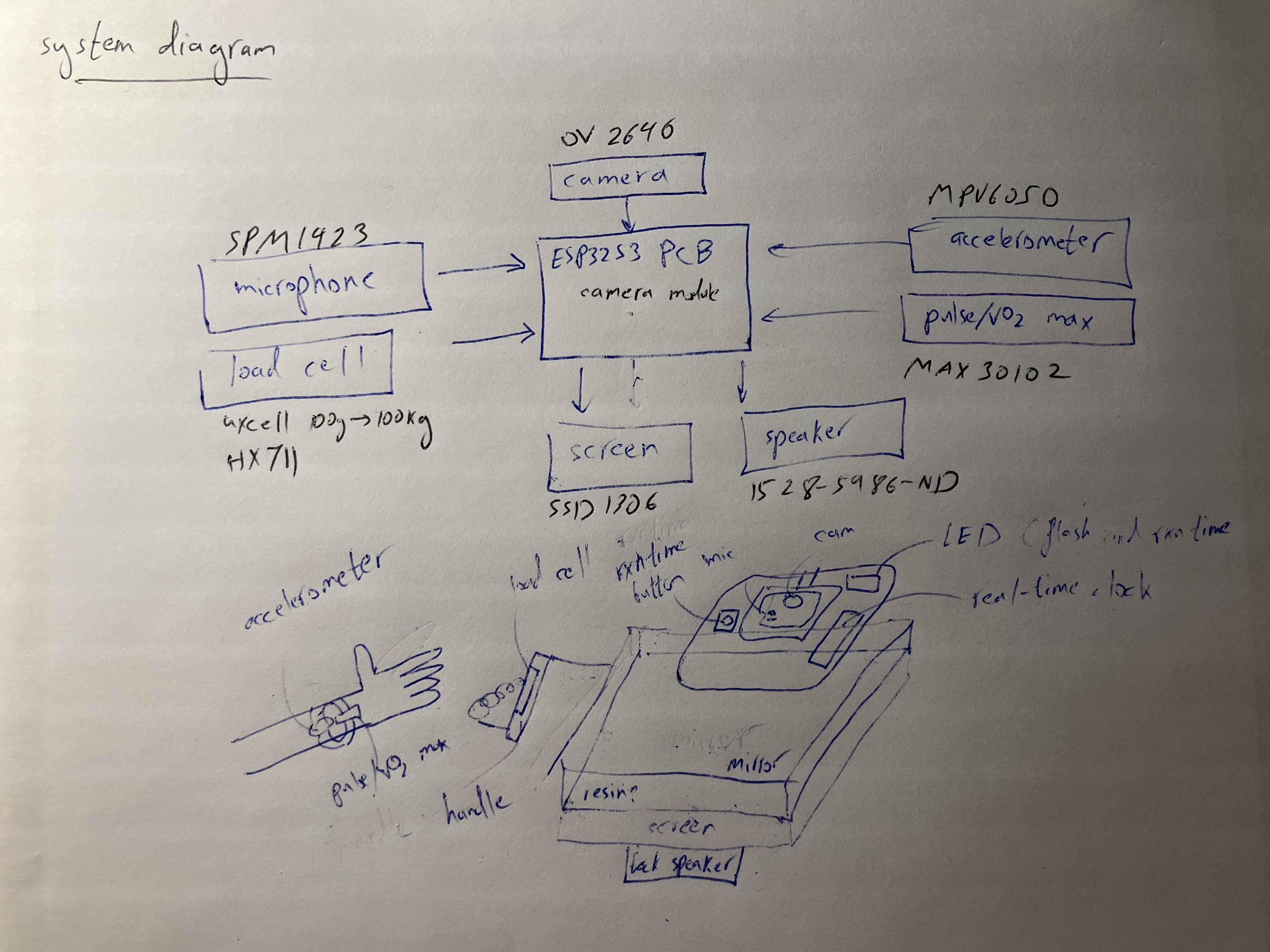

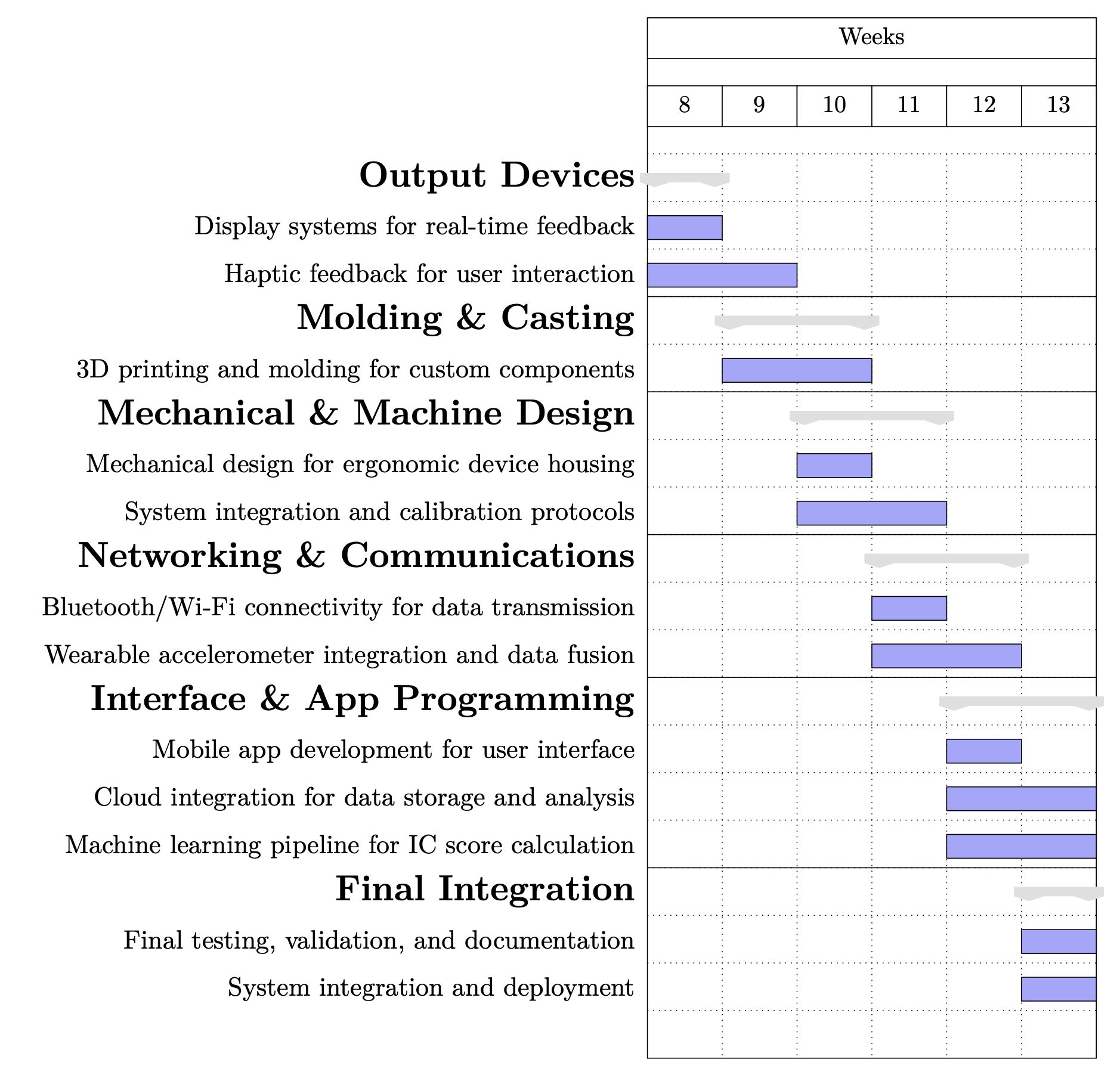

Individual Assignment · Midterm Review

The midterm review is complete. On the final project site, I posted a system diagram, listed the tasks to be completed, made a schedule, and scheduled a meeting with instructors for a graded review.

System Diagram

The system diagram for the MirrorAge Intrinsic Capacity Mirror project was posted on the final project page, showing the multimodal sensing stack, on-device inference layers, and real-time feedback channels.

Updated block diagram highlighting the multimodal sensing stack (grip, voice, face, motion, wearables), on-device inference layers, and real-time feedback channels that feed the intrinsic capacity score. View full system diagram →

Tasks to be Completed

The remaining tasks for the MirrorAge project were listed and organized into five key areas:

- Hardware Integration: Consolidate grip, voice, camera, reaction-time, and wearable sensor harnesses into the MirrorAge enclosure. Finish molding/casting iterations for the ergonomic housing.

- Firmware & Edge AI: Stabilize onboard inference for SenseCraft vision models and voice-age pipelines. Calibrate grip-force and reaction-time firmware for repeatable sampling.

- Networking & Data Fusion: Bring up BLE/Wi-Fi data paths for wearable accelerometer streaming. Implement the fusion layer that combines per-domain scores into an overall IC metric.

- Interface & UX: Finish mobile/web dashboard mockups for user onboarding and data review. Finalize real-time mirror feedback cues tied to sensor status and IC outcomes.

- Validation & Documentation: Run end-to-end system tests and document calibration procedures. Record the one-minute video and finalize presentation assets.
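The fusion layer listed under Networking & Data Fusion could be sketched as below. This is a minimal illustration, not the project's actual scoring method: the weighted-mean rule, the equal default weights, the assumption that each per-domain score is normalized to the range 0 to 1, and the names `DEFAULT_WEIGHTS` and `fuse_ic_score` are all hypothetical. The domain names follow the sensing stack in the system diagram (grip, voice, face, motion, wearables).

```python
from typing import Mapping, Optional

# Hypothetical equal weights; the real weighting has not been decided in this write-up.
DEFAULT_WEIGHTS = {
    "grip": 1.0,
    "voice": 1.0,
    "face": 1.0,
    "motion": 1.0,
    "wearables": 1.0,
}


def fuse_ic_score(
    scores: Mapping[str, float],
    weights: Optional[Mapping[str, float]] = None,
) -> float:
    """Combine per-domain scores (assumed to lie in [0, 1]) into one weighted mean.

    Domains missing from `scores` (for example, a sensor that is offline)
    are skipped, and the remaining weights are renormalized.
    """
    weights = DEFAULT_WEIGHTS if weights is None else weights
    present = [domain for domain in weights if domain in scores]
    if not present:
        raise ValueError("no known domain scores supplied")
    for domain in present:
        if not 0.0 <= scores[domain] <= 1.0:
            raise ValueError(f"score for {domain!r} must be in [0, 1]")
    total_weight = sum(weights[domain] for domain in present)
    if total_weight <= 0:
        raise ValueError("weights of the supplied domains must sum to a positive value")
    return sum(weights[domain] * scores[domain] for domain in present) / total_weight


if __name__ == "__main__":
    # Only two domains reporting; the result is their equally weighted mean.
    print(fuse_ic_score({"grip": 0.8, "voice": 0.6}))
```

Renormalizing over the domains that are present keeps the metric defined when a sensor drops out, at the cost of making scores from different sensor subsets less directly comparable.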

Development Schedule

A development timeline was created that aligned subsystem sprints with HTMAA milestones from Week 8 through Week 13:

- Week 8 · Output Devices: Figure out wiring for real-time display states.

- Week 9 · Molding & Casting: Learn how to cast custom housings and refine structural components.

- Week 10 · Mechanical Design: Design the ergonomic enclosure and calibration fixtures.

- Week 11 · Networking: Program BLE/Wi-Fi telemetry and wearable data fusion.

- Week 12 · Interface/App: Create mobile UI, cloud bridge, and IC scoring pipeline.

- Week 13 · Final Integration: Run validation passes, document results, and prep deployment.

Instructor Meeting

A calendar hold was sent for Thursday, Nov 12 at 10:00 AM ET (38-501 conference room) per the shared HTMAA scheduling sheet. The meeting was held and the agenda covered subsystem demos, weekly documentation spot checks (Weeks 0–9), and next-sprint alignment.

The meeting slot was referenced in the midterm review schedule.

Feedback from Midterm Review

Documentation Improvements

- Week 2: Fixed video viewing perspective for better clarity and documentation quality.

- Week 4: Removed empty video training section to streamline content and improve page organization.

Potential Enhancements

- Mirror Angle Control: Considering adding a motor to control mirror angle to follow face if time permits, enhancing user interaction and tracking capabilities.

- Wearable Band System: Exploring molding and casting a band with rigid circuit integration for pulse/VO₂max monitoring and accelerometer data collection. Potential additions include a display with clock functionality and a second camera/microphone module. This can be implemented in 2D with a cross-sectional snap-on design for modular assembly.

Midterm Review Completed: All required elements (system diagram, task list, schedule, and instructor meeting) were documented in the midterm review section of the final project page, which includes featured subsystems, completed tasks, the execution schedule, and review logistics.

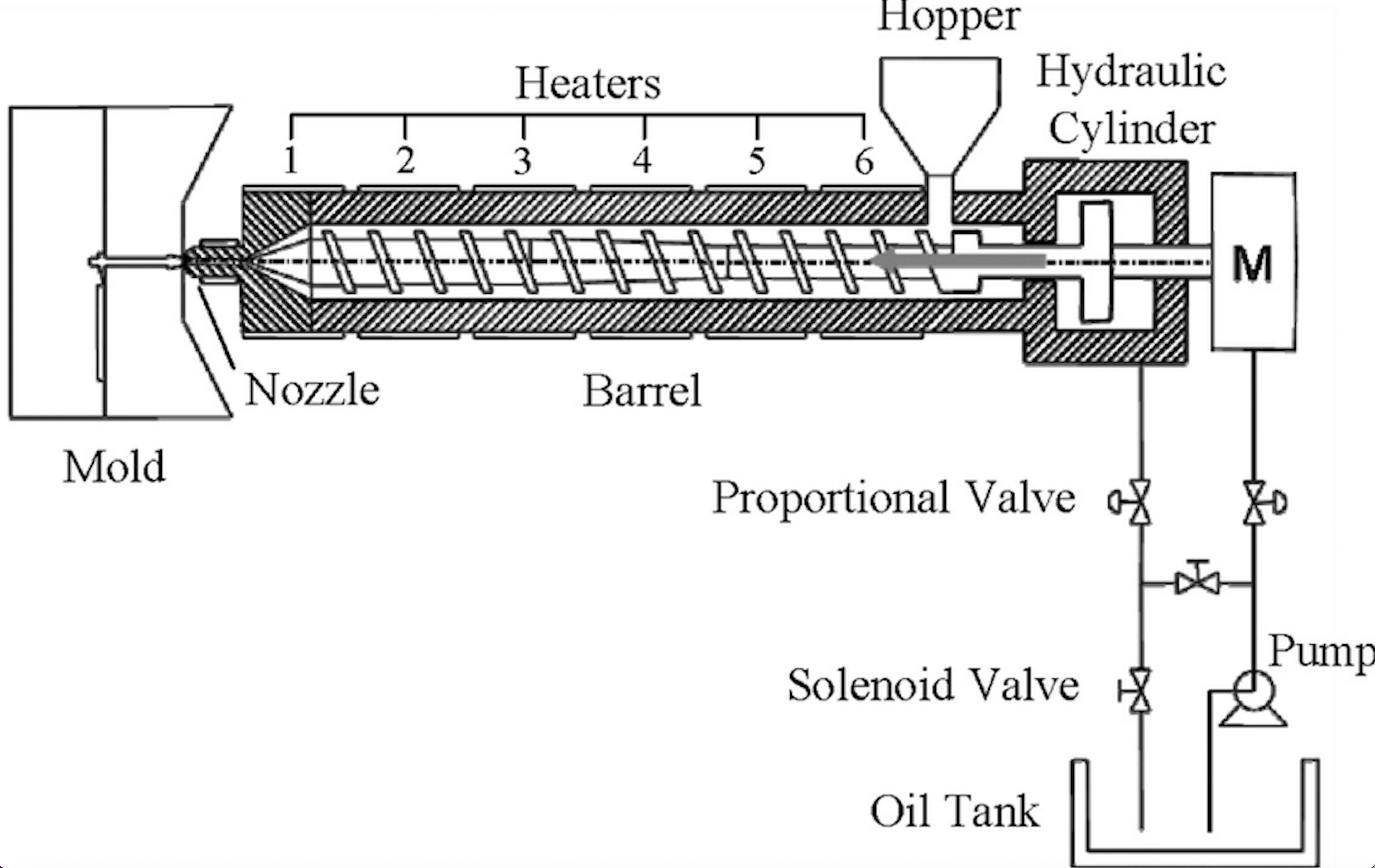

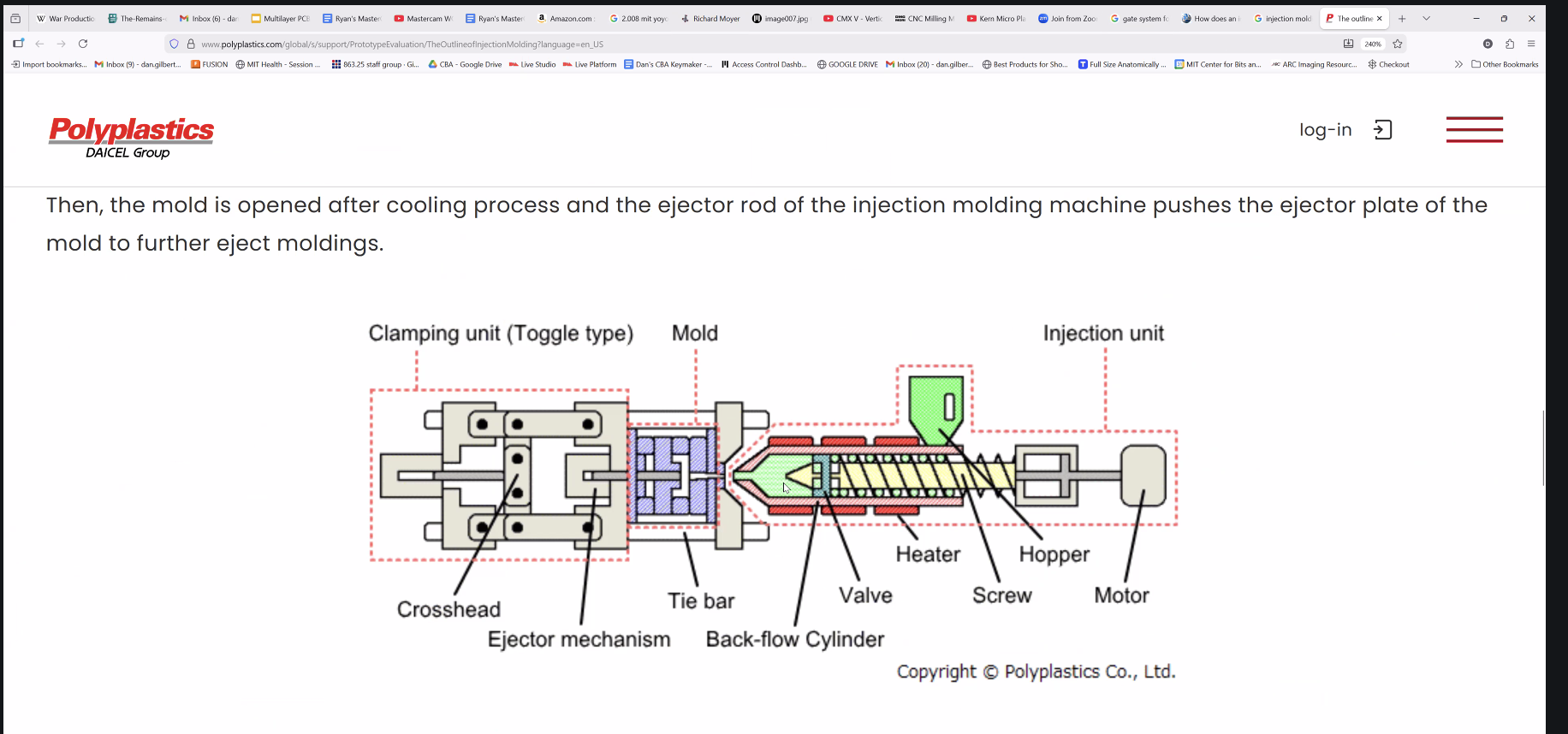

Injection Molding Training with Dan

Key concepts and processes from the injection molding training session, anchored to the Slack recap.

Injection Molding Fundamentals

Injection molding is a manufacturing process for producing parts by injecting molten material into a mold. Reference: Schematic diagram of an injection molding machine.

- Pressure limitations: Desktop injection molding machines cannot achieve the same pressure levels as industrial systems.

- Additives: Plastic additives are typically 1-3% by weight for performance tuning (colorants, fillers, stabilizers).

- Process overview: Plastic pellets are heated, melted, and injected into a mold cavity under pressure, then cooled and ejected.
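As a quick arithmetic check on the 1-3% additive range above, the sketch below converts a batch mass into the corresponding additive mass. It assumes the percentage is taken relative to the total batch mass; the function names and the 500 g batch are hypothetical, chosen only for illustration.

```python
def additive_mass_g(batch_mass_g: float, additive_fraction: float) -> float:
    """Mass of additive (grams) for a batch, with the fraction given by weight."""
    if batch_mass_g < 0:
        raise ValueError("batch mass must be non-negative")
    if not 0.0 <= additive_fraction <= 1.0:
        raise ValueError("additive fraction must be between 0 and 1")
    return batch_mass_g * additive_fraction


def additive_range_g(batch_mass_g: float, low: float = 0.01, high: float = 0.03):
    """Low and high additive masses for the typical 1-3% by-weight range."""
    return additive_mass_g(batch_mass_g, low), additive_mass_g(batch_mass_g, high)


if __name__ == "__main__":
    # Hypothetical 500 g batch of pellets.
    low_g, high_g = additive_range_g(500.0)
    print(f"additive mass: {low_g:.1f} g to {high_g:.1f} g")
```

For the hypothetical 500 g batch this works out to roughly 5 g to 15 g of additive.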

Mold Design for Students

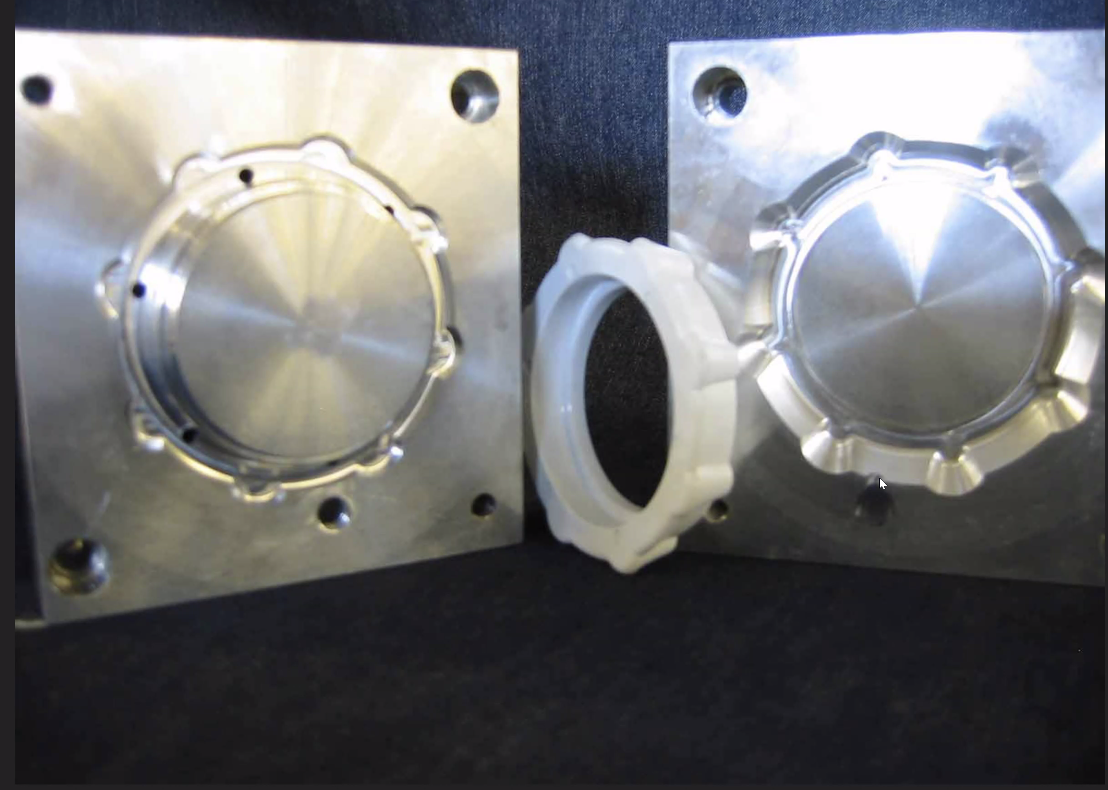

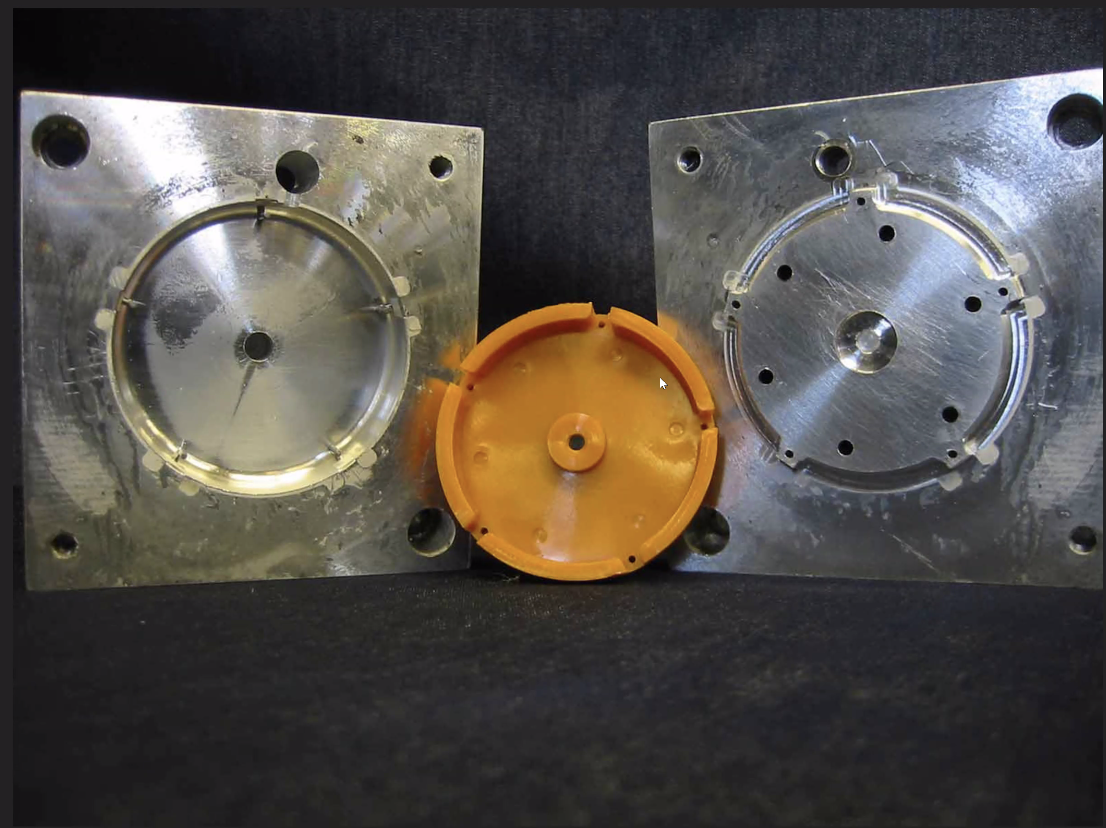

Students can create injection molds using generic mold blanks with core and cavity components.

- Mold blanks: Core and cavity components made of aluminum with alignment features and holes for plastic flow.

- Runner system: Simplified runner system with a gate to the part. Plastic flows in through the sprue, gets sheared and becomes liquid as it flows.

- Weld lines: As plastic flows, weld lines appear where flow fronts meet. The mold design does not need to be complicated.

- Example: Murakami yo-yos demonstrate successful injection molding workflows.

- Gate system: Reference gate system designs for injection molding.

Injection Molding Machine Components

Injection Molding Process

Reference: Injection molding animation — think of yourself as the plastic pellet traveling through the process.

- Two-bar vs four-bar: Different machine configurations affect clamping force and part quality.

- Statistical process control: Monitoring and controlling process parameters for consistent part quality.

- Ejector pins: Ejector pin marks are sometimes visible on finished parts; they are often milled or ground off during post-processing.

Machine Types & Applications

- Vertical injection molding machines: Electric/servo-driven, energy-efficient systems, mostly for two-part injection molds.

- Injection mold complex: Advanced mold designs with multiple cavities or complex geometries.

- Injection mold collapsible core: Specialized molds for parts with undercuts or complex internal features.

- Common issues: Press-fit features in injection molded parts require careful design. The mating surfaces should have no taper, even though tapers are common in injection molded parts for other applications.

Injection Molding vs 3D Printing

- Speed: Injection molding fills the mold almost instantly, whereas a 3D printer must trace every point of the part.

- Surface finish: Injection molded parts typically come out of the mold with a better surface finish, without needing polishing tools.

- Hand polishing: Injection molded parts may still require hand polishing for high-gloss finishes, but the base surface quality is superior.

- Production volume: Injection molding is ideal for high-volume production, while 3D printing excels at prototyping and low-volume custom parts.

Designed a phone holder with integrated passive amplifier for audio output. The design incorporates a spring-loaded mechanism for secure phone mounting and a horn-shaped amplifier for enhanced sound projection.