Passive sensing

Photogrammetry

Photogrammetry is the collection and organization of reliable information about physical objects and the environment through the process of recording, measuring and interpreting photographic images and patterns of electromagnetic radiant imagery and other phenomena.

Photogrammetry was first documented by the Prussian architect Albrecht Meydenbauer in 1867. Since then it has been used for everything from simple measurement and color sampling to recording complex 3D motion fields.

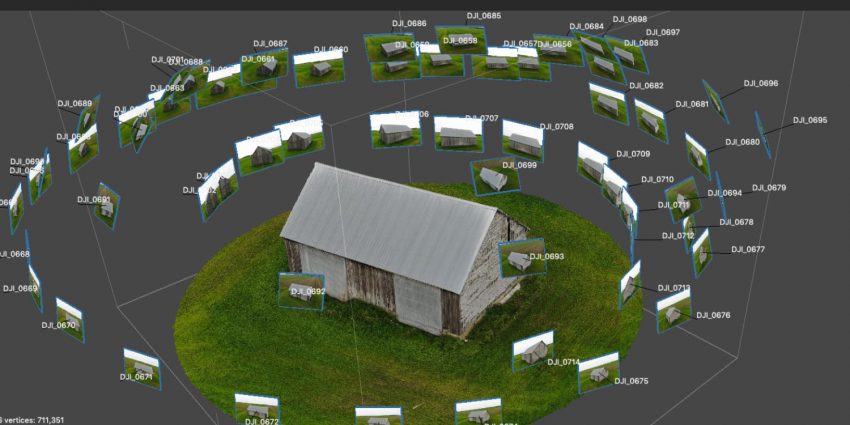

A typical medium-resolution aerial photogrammetry scan of a barn. With 50-100 images, a reasonably accurate model can be produced. Such models are often used in surveying and restoration projects at scales ranging from handheld objects to cities.

Stereo matching and photogrammetry

An early use was stereophotogrammetry: the estimation of 3D coordinates from measurements taken in two or more images through the identification of common points. This technology was used throughout the early 20th century for generating topographic maps.

Stereophotogrammetry is now being used to capture the dynamic characteristics of systems that were previously difficult to measure, such as running wind turbines.
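As a rough illustration of how a 3D coordinate can be recovered from a common point seen in two images, here is a minimal sketch of the midpoint triangulation method. It assumes each image observation has already been converted into a viewing ray (a camera center plus a direction), which in practice requires a calibrated camera. The function name `triangulate_midpoint` and its arguments are hypothetical, chosen for this example only.

```python
def triangulate_midpoint(c1, d1, c2, d2):
    """Return the 3D point closest to two viewing rays.

    Each ray is c + s * d, where c is a camera center and d is the
    direction towards the matched image point (both 3-element sequences).
    """
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    w = [p - q for p, q in zip(c1, c2)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")

    # Parameters of the closest points on each ray.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1 = [ci + s * di for ci, di in zip(c1, d1)]
    p2 = [ci + t * di for ci, di in zip(c2, d2)]

    # With noisy matches the rays do not meet exactly, so take the midpoint.
    return [(x + y) / 2 for x, y in zip(p1, p2)]


# Two cameras 2 units apart, both looking at the same scene point.
point = triangulate_midpoint((0, 0, 0), (1, 2, 5), (2, 0, 0), (-1, 2, 5))
```

The midpoint method is only one of several triangulation strategies; production pipelines usually refine such estimates by minimizing reprojection error.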

Photogrammetry is useful in outdoor settings, where all you need is a handheld camera and some patience. In this example, note the loss of quality towards the top, as pixel resolution becomes problematic:

Key Benefits

Geometry and texture/color in one workflow.

Affordability and flexibility. Depending on the end-use application, almost any camera will work, provided there is enough light and your post-processing software is robust.

Real-time feedback and processing as models improve. Ingenuity Drone

Key Challenges

Precision is improving but can still be completely thrown off by certain light conditions, in much the same way LiDAR struggles with smooth surfaces.

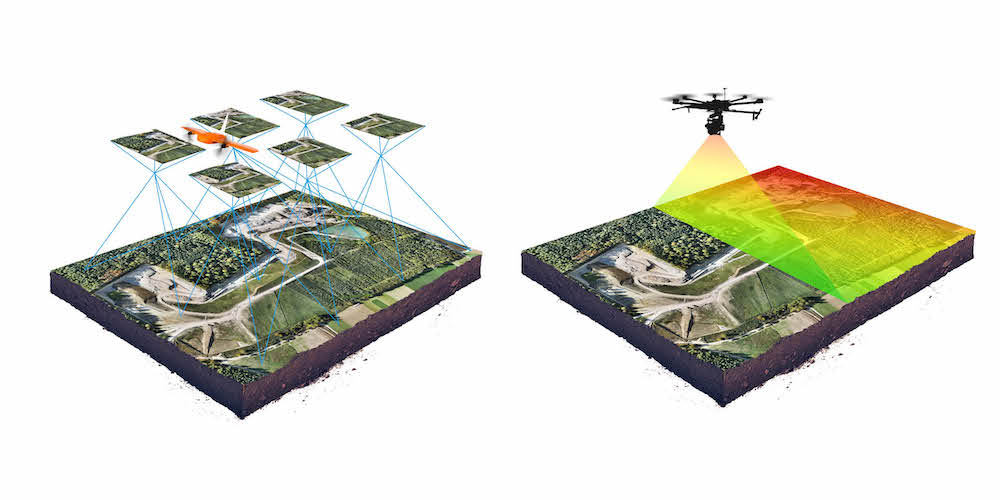

Increasingly, industry pairs vision systems for photogrammetry with laser systems to balance the benefits of both.

Tools

Apps

iPhone and Android apps for photogrammetry, and now LiDAR scanning, have multiplied over the last two years. The primary driver is product placement; however, some open source options are making way for both art and art preservation.

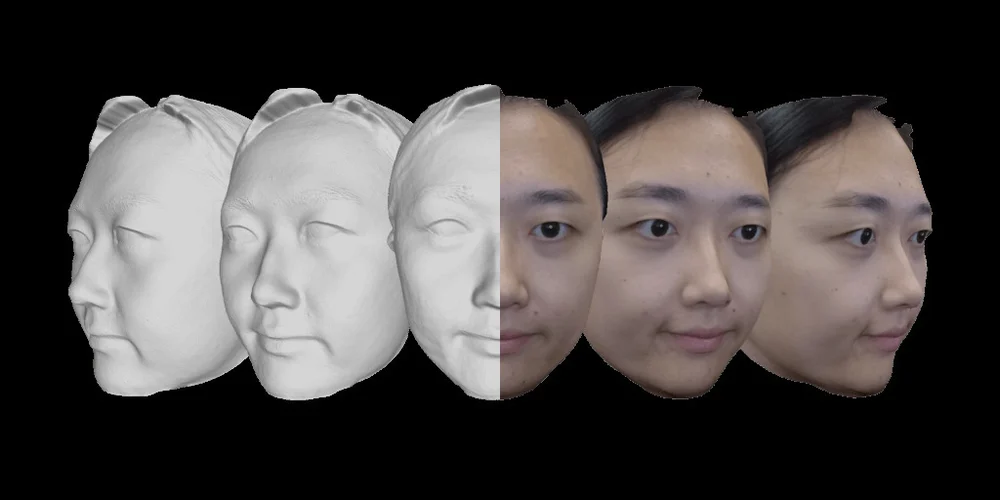

Bellus (Creepy, but fast and accurate face scanning)

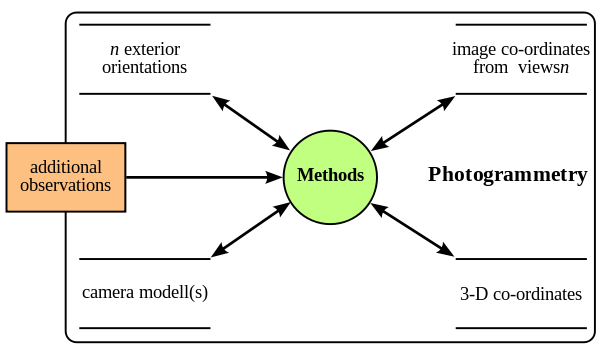

Camera equations

Intrinsic and extrinsic parameters
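For reference, the standard pinhole camera model can be written as below. The notation is an assumption of this sketch rather than something fixed by these notes: $K$ holds the intrinsic parameters (focal lengths $f_x, f_y$ and principal point $c_x, c_y$), while the rotation $R$ and translation $t$ are the extrinsic parameters that map world coordinates into the camera frame. Skew and lens distortion are ignored here.

$$
s \begin{bmatrix} u \\ v \\ 1 \end{bmatrix}
= K \, [\, R \mid t \,]
\begin{bmatrix} X \\ Y \\ Z \\ 1 \end{bmatrix},
\qquad
K = \begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}
$$

Here $(X, Y, Z)$ is a point in world coordinates, $(u, v)$ is its pixel position, and $s$ is the depth of the point in the camera frame.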

The Levenberg–Marquardt algorithm, also known as the damped least-squares (DLS) algorithm, is used to minimize the reprojection error across the estimated 3D coordinates. This procedure is typically called bundle adjustment.

Groundwork camera properties and standards for USGS photogrammetry surveys.
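To make the idea concrete, the sketch below applies a small hand-written Levenberg–Marquardt loop to the simplest possible case: refining one 3D point seen by cameras whose parameters are held fixed. This is only the structure half of the problem; a full bundle adjustment also refines the camera parameters and handles many points at once. All names (`project`, `residuals`, `solve3`, `refine_point`) and the default intrinsics are hypothetical, and the cameras are assumed to have identity rotation for brevity.

```python
def project(point, cam_center, f=800.0, cx=320.0, cy=240.0):
    """Pinhole projection, assuming identity rotation (simplification)."""
    x, y, z = (p - c for p, c in zip(point, cam_center))
    return (f * x / z + cx, f * y / z + cy)


def residuals(point, cams, observations):
    """Reprojection errors (in pixels), two entries per camera."""
    r = []
    for cam, (u, v) in zip(cams, observations):
        pu, pv = project(point, cam)
        r += [pu - u, pv - v]
    return r


def solve3(A, b):
    """Solve a 3x3 linear system by Gaussian elimination with pivoting."""
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for i in range(3):
        piv = max(range(i, 3), key=lambda k: abs(M[k][i]))
        M[i], M[piv] = M[piv], M[i]
        for k in range(i + 1, 3):
            factor = M[k][i] / M[i][i]
            for j in range(i, 4):
                M[k][j] -= factor * M[i][j]
    x = [0.0, 0.0, 0.0]
    for i in reversed(range(3)):
        x[i] = (M[i][3] - sum(M[i][j] * x[j] for j in range(i + 1, 3))) / M[i][i]
    return x


def refine_point(point, cams, observations, iters=50, lam=1e-3, eps=1e-6):
    """Refine a 3D point by damped least squares on the reprojection error."""
    p = list(point)
    cost = sum(r * r for r in residuals(p, cams, observations))
    for _ in range(iters):
        r = residuals(p, cams, observations)
        # Forward-difference Jacobian: J[i][j] = d r_i / d p_j.
        J = [[0.0] * 3 for _ in r]
        for j in range(3):
            q = p[:]
            q[j] += eps
            rq = residuals(q, cams, observations)
            for i in range(len(r)):
                J[i][j] = (rq[i] - r[i]) / eps
        n = len(r)
        JtJ = [[sum(J[i][a] * J[i][b] for i in range(n)) for b in range(3)]
               for a in range(3)]
        Jtr = [sum(J[i][a] * r[i] for i in range(n)) for a in range(3)]
        # Damped normal equations: (JtJ + lam * diag(JtJ)) delta = -Jtr.
        A = [[JtJ[a][b] + (lam * JtJ[a][a] if a == b else 0.0)
              for b in range(3)] for a in range(3)]
        delta = solve3(A, [-g for g in Jtr])
        cand = [pi + di for pi, di in zip(p, delta)]
        cand_cost = sum(x * x for x in residuals(cand, cams, observations))
        if cand_cost < cost:   # accept the step and trust the model more
            p, cost, lam = cand, cand_cost, lam / 10
        else:                  # reject the step and increase the damping
            lam *= 10
    return p, cost


# Synthetic example: two cameras one unit apart observing a known point.
cams = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
truth = (0.5, 0.2, 4.0)
obs = [project(truth, c) for c in cams]
estimate, final_cost = refine_point((0.3, 0.0, 3.0), cams, obs)
```

The damping factor `lam` is what distinguishes this from plain Gauss-Newton: it grows when a step makes the error worse and shrinks when a step helps. Real pipelines typically rely on an established solver instead of a hand-rolled loop like this one.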

ML for Photogrammetry

Improving accuracy while scraping information on the contents of photogrammetry data sets.

Light Field

Light Fields are a new field of study in photography. The objective is to capture the full plenoptic content of the scene, defined as the collection of light rays emitted from it, in any given direction. If the ideal plenoptic function were known, any novel viewpoint could be synthesized by placing a virtual camera in this space and selecting the relevant light rays.

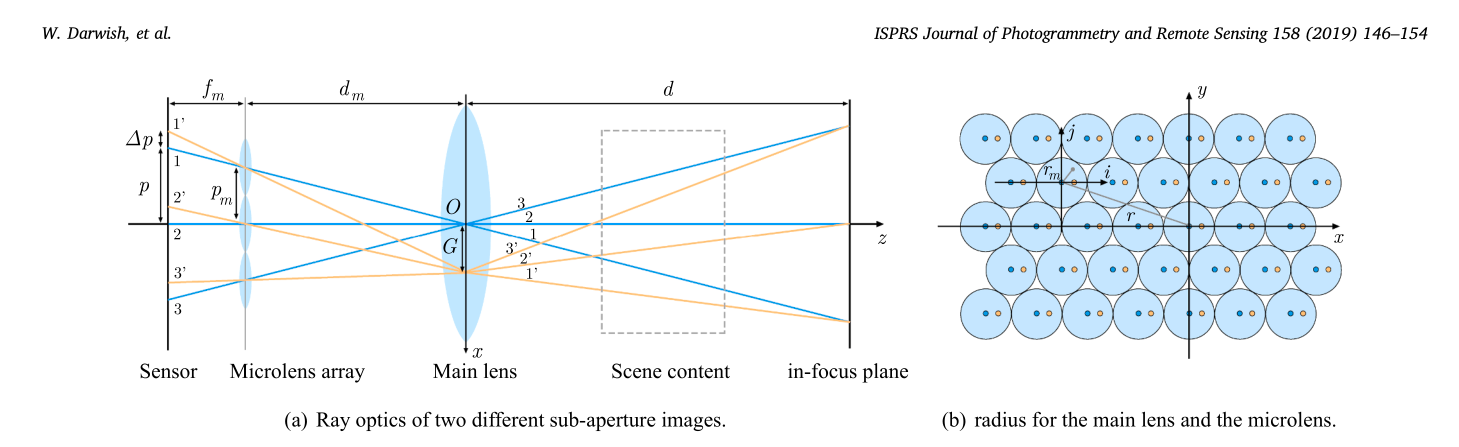

In practice, we can only sample light rays in discrete locations. There are two popular optical architectures for this:

- Multi-camera systems: simply shoot the scene from several locations using an array of cameras (or a single moving one).

- Lenslets: a single CMOS sensor with an array of lenses in front.

In the lenslet approach, each pixel behind a lenslet provides a unique light ray direction. Collecting the pixel at the same position behind every lenslet yields a sub-aperture image, which roughly corresponds to what a shifted camera would capture. The resolution of each of these images is simply the total number of lenslets, and the number of sub-aperture images available is given by the number of pixels behind a lenslet. For reference, the Lytro Illum provides 15x15 sub-aperture images of 541x434 pixels each, which is a total of ~53 Megapixels.

The most efficient layout for lenslets is hexagonal packing, as it wastes the least pixel area. Note that some pixels are not fully covered by the lenslet and receive erroneous or darker data. This means some sub-aperture images cannot be recovered.
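The relationship between raw lenslet pixels and sub-aperture images can be sketched as follows. This is an idealized illustration that assumes a perfectly aligned square lenslet grid, with every lenslet covering exactly `n_u` by `n_v` pixels; real cameras need calibration, resampling, and handling of partially covered pixels. The function name `sub_aperture_images` is hypothetical.

```python
def sub_aperture_images(raw, n_u, n_v):
    """Split an idealized lenslet image into n_u * n_v sub-aperture views.

    raw is a 2D list of pixel values (rows x columns). Each lenslet is
    assumed to cover a block n_v pixels high and n_u pixels wide.
    """
    rows, cols = len(raw), len(raw[0])
    if rows % n_v or cols % n_u:
        raise ValueError("image size must be a multiple of the lenslet size")
    views = {}
    for v in range(n_v):
        for u in range(n_u):
            # Take the pixel at offset (u, v) under every lenslet.
            views[(u, v)] = [row[u::n_u] for row in raw[v::n_v]]
    return views


# Toy sensor: 2x2 lenslets, each covering 2x2 pixels.
raw = [[r * 4 + c for c in range(4)] for r in range(4)]
views = sub_aperture_images(raw, n_u=2, n_v=2)

# Ray count for the figures quoted above: views times pixels per view.
total_rays = 15 * 15 * 541 * 434
```

Each view has one pixel per lenslet, and there is one view per pixel position under a lenslet, which matches the resolution trade-off described above.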

Light Fields have gained a lot of traction recently thanks to their high potential in VR applications. One impressive work was shown by Google in a SIGGRAPH 2018 paper:

Depth estimation on Light Field data is an active research area. For now, algorithms are commonly tested on ideal, synthetic light fields such as this dataset. Here is one example of a point cloud obtained from a stereo matching method:

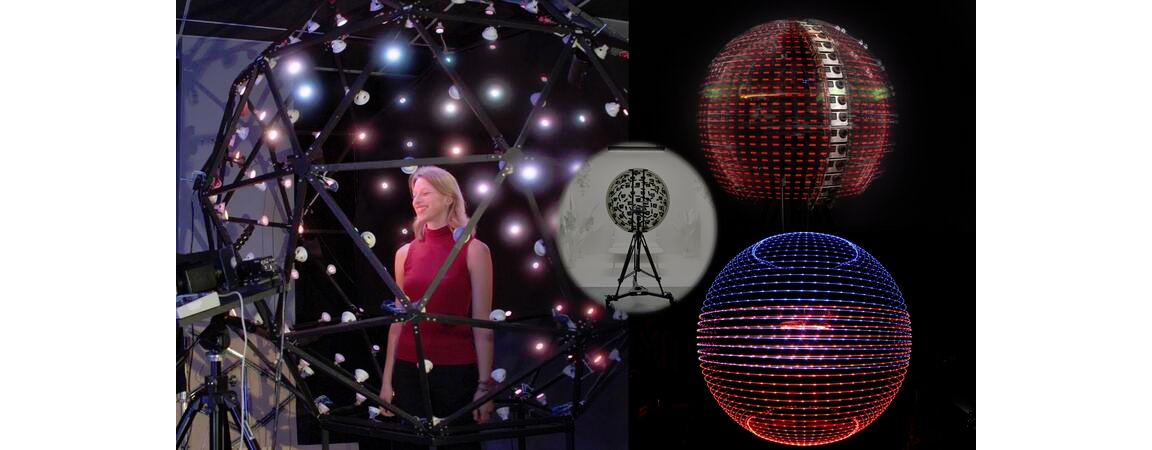

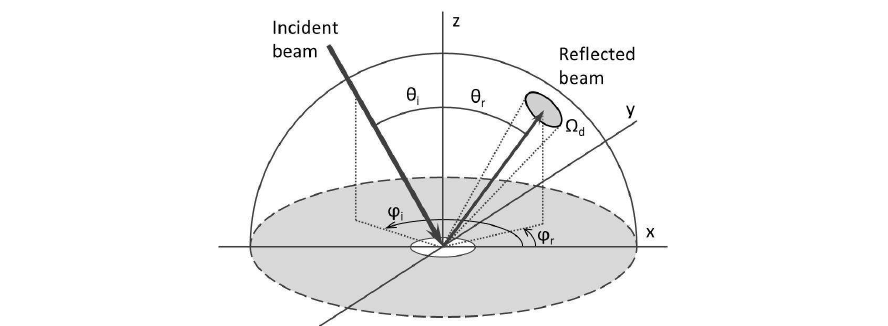

Light stage

This impressive device was built for capturing the Bidirectional Reflectance Distribution Function (BRDF), which can describe the material’s optical properties in any direction and any illumination conditions. Thanks to the linearity of lighting, we can decompose the total illumination based on its direction. The viewing angle also plays a role for reflective or special materials (e.g. iridescence).

In the most complex case, objects need to be captured from several locations and illuminated from as many directions as possible.
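The linearity of lighting mentioned above is what makes relighting from captured data possible: an image under any mix of the captured lights is a weighted sum of the images taken with one light on at a time. The sketch below illustrates this for a single viewpoint, with images stored as nested lists of intensities. The function name `relight` and the data layout are assumptions made for the example.

```python
def relight(basis_images, weights):
    """Combine one-light-at-a-time images into a newly lit image.

    basis_images: list of 2D lists, one image per light direction.
    weights: intensity of each light in the target illumination.
    """
    if len(basis_images) != len(weights):
        raise ValueError("need exactly one weight per basis image")
    height, width = len(basis_images[0]), len(basis_images[0][0])
    out = [[0.0] * width for _ in range(height)]
    for image, weight in zip(basis_images, weights):
        for y in range(height):
            for x in range(width):
                out[y][x] += weight * image[y][x]
    return out


# Two tiny 1x2 images, each lit by a different light.
left_lit = [[1.0, 0.0]]
right_lit = [[0.0, 2.0]]
mixed = relight([left_lit, right_lit], [0.5, 2.0])
```

This only covers a fixed viewpoint; capturing view-dependent effects such as iridescence requires repeating the process from several camera positions.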